Starting from scratch: a Spring Boot guide to quickly building a Kafka integration environment

Overview of Spring Boot and Kafka integration

Apache Kafka is a distributed event streaming platform that lets you produce, consume, and store data at very high throughput. It is widely used for log aggregation, metrics collection, monitoring, and transactional data pipelines.
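
To make "produce, consume and store" concrete, here is a toy in-memory model of a single topic partition as an append-only log addressed by offset. It is only a sketch of the idea, not how Kafka is implemented, and the TopicLog class and its methods are hypothetical names invented for this illustration.

```java
import java.util.ArrayList;
import java.util.List;

/** Toy in-memory model of one topic partition: an append-only log addressed by offset. */
class TopicLog {
    private final List<String> records = new ArrayList<>();

    /** "Produce": append a record and return the offset it was stored at. */
    synchronized long append(String value) {
        records.add(value);
        return records.size() - 1;
    }

    /** "Consume": read every record from the given offset onward; nothing is removed. */
    synchronized List<String> readFrom(long offset) {
        int start = (int) Math.min(Math.max(offset, 0), records.size());
        return new ArrayList<>(records.subList(start, records.size()));
    }

    public static void main(String[] args) {
        TopicLog log = new TopicLog();
        log.append("first");
        log.append("second");
        // Independent readers can start from different offsets because reads do not delete data.
        System.out.println(log.readFrom(0)); // [first, second]
        System.out.println(log.readFrom(1)); // [second]
    }
}
```

Because reading does not remove records, several consumers can process the same data independently, which is what separates a log like this from a classic queue.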

Spring Boot is a framework that simplifies Spring application development. Its auto-configuration and conventions make it easy to integrate Kafka into a Spring application.

Set up the environment for integrating Kafka with Spring Boot

1. Install Apache Kafka

- Download the Apache Kafka distribution.

- Unzip the distribution and start the Kafka service.

- Check the Kafka service log to make sure it is running normally.
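
Besides reading the log, a quick way to see whether the broker is accepting connections is to open a TCP connection to its port. The sketch below assumes the broker listens on 127.0.0.1:9092, the address used in the configuration later in this article. The PortCheck class is a hypothetical helper, and a successful connection only shows that the port is open, not that the broker is healthy.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

class PortCheck {
    /** Returns true if a TCP connection to host:port can be opened within timeoutMillis. */
    static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            return false; // connection refused, timed out, or host not resolvable
        }
    }

    public static void main(String[] args) {
        // Assumed broker address; change it if your Kafka listener is configured differently.
        System.out.println("Broker port open: " + isReachable("127.0.0.1", 9092, 2000));
    }
}
```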

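2. Set up Spring Boot

- Optional: download the Spring Boot CLI distribution, extract it, and add its bin directory to your system's path. The CLI is not required, because Spring Boot itself is pulled in as a Maven or Gradle dependency of the project.

- Create a Spring Boot application, for example with Spring Initializr (start.spring.io).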

Code Example

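1. Create the Spring Boot application

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

// @SpringBootApplication enables component scanning and auto-configuration
@SpringBootApplication
public class SpringbootKafkaApplication {
    public static void main(String[] args) {
        SpringApplication.run(SpringbootKafkaApplication.class, args);
    }
}

2. Add Kafka dependency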

<!-- Spring for Apache Kafka; Spring Boot's dependency management supplies the version -->
<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
</dependency>

3. Configure the Kafka producer
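
A producer needs only a few settings: the broker address, plus one serializer for keys and one for values. As a dependency-free sketch, the map below spells out the standard Kafka property names that the ProducerConfig constants stand for. The class and method names (ProducerProps, forBroker) are hypothetical and exist only for this illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class ProducerProps {
    /** Builds the producer settings as plain key/value pairs. */
    static Map<String, Object> forBroker(String bootstrapServers) {
        Map<String, Object> config = new LinkedHashMap<>();
        // Comma-separated host:port list, with no URL scheme in front
        config.put("bootstrap.servers", bootstrapServers);
        // Producers serialize, so both entries name a Serializer (given here by class name)
        config.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        config.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return config;
    }

    public static void main(String[] args) {
        forBroker("127.0.0.1:9092").forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

The producer factory bean in this step receives a map of this shape. Deserializers belong on the consumer side only.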

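import java.util.LinkedHashMap;
import java.util.Map;
import org.apache.kafka.clients.producer.ProducerConfig;
import org.apache.kafka.common.serialization.StringSerializer;
import org.springframework.context.annotation.Bean;
import org.springframework.kafka.core.DefaultKafkaProducerFactory;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.kafka.core.ProducerFactory;

// Declare these methods inside a @Configuration class.
@Bean
public ProducerFactory<String, String> senderFactory() {
    Map<String, Object> config = new LinkedHashMap<>();
    // Broker address as host:port, without a URL scheme
    config.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");
    // A producer serializes both keys and values
    config.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
    config.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class);
    return new DefaultKafkaProducerFactory<>(config);
}

// The KafkaTemplate injected into the producer service is built from the factory above
@Bean
public KafkaTemplate<String, String> kafkaTemplate() {
    return new KafkaTemplate<>(senderFactory());
}

4. Configure the Kafka consumer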

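import java.util.LinkedHashMap;
import java.util.Map;
import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.springframework.context.annotation.Bean;
import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
import org.springframework.kafka.core.DefaultKafkaConsumerFactory;

// Declare this method inside a @Configuration class.
@Bean
public ConcurrentKafkaListenerContainerFactory<String, String> kafkaListenerContainerFactory() {
    Map<String, Object> config = new LinkedHashMap<>();
    config.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "127.0.0.1:9092");
    // A consumer deserializes both keys and values
    config.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
    config.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
    ConcurrentKafkaListenerContainerFactory<String, String> factory = new ConcurrentKafkaListenerContainerFactory<>();
    // Broker and deserializer settings are supplied through a ConsumerFactory
    factory.setConsumerFactory(new DefaultKafkaConsumerFactory<>(config));
    return factory;
}

5. Create the Kafka producer service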

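import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.stereotype.Service;

@Service
public class ProducerService {
    @Autowired
    private KafkaTemplate<String, String> kafkaTemplate;

    // Sends the message to the "test-kafka" topic; send() is asynchronous
    public void sendMessage(String message) {
        kafkaTemplate.send("test-kafka", message);
    }
}

6. Create the Kafka consumer service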

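import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Service;

@Service
public class ReceiverService {
    // Subscribes to "test-kafka"; by default the listener id also serves as the consumer group id
    @KafkaListener(topics = "test-kafka", id = "kafka-consumer-1")
    public void receiveMessage(String message) {
        System.out.println("Message received: " + message);
    }
}

Test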

- Start the Kafka service.

- Start the Spring Boot application.

- Use ProducerService to send a message, for example by calling sendMessage from a REST controller or a startup runner.

- Check the Kafka service log to make sure it has received the message correctly.

- Check the Spring Boot application log to make sure it has consumed the message correctly.
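
Checking logs by eye works for a first run. An automated check usually waits on a latch that the listener releases, because sending and receiving happen on different threads. The following JDK-only sketch shows that waiting pattern. The BlockingQueue and the receiver thread are stand-ins for the topic and the listener (they are not Kafka or Spring APIs), and RoundTripCheck is a hypothetical name.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicReference;

class RoundTripCheck {
    /** Sends one message through a stand-in queue; returns what the receiver saw, or null on timeout. */
    static String roundTrip(String message, long timeoutMillis) {
        BlockingQueue<String> topic = new LinkedBlockingQueue<>(); // stand-in for the "test-kafka" topic
        CountDownLatch received = new CountDownLatch(1);
        AtomicReference<String> payload = new AtomicReference<>();

        // Stand-in for the listener: takes one message, records it, then releases the latch.
        Thread receiver = new Thread(() -> {
            try {
                payload.set(topic.take());
                received.countDown();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        receiver.setDaemon(true);
        receiver.start();

        topic.add(message); // stand-in for ProducerService.sendMessage(message)
        try {
            // Wait for the asynchronous receive instead of sleeping for a fixed time.
            return received.await(timeoutMillis, TimeUnit.MILLISECONDS) ? payload.get() : null;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return null;
        }
    }

    public static void main(String[] args) {
        System.out.println("Message received: " + roundTrip("hello", 2000));
    }
}
```

In a real test the latch would be counted down inside the listener method, and the test would await it after calling the producer service.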

Summary

This article showed how to integrate Kafka into a Spring application with Spring Boot. It first gave an overview of Kafka and Spring Boot and explained how to set up the required environment, then walked through a Spring Boot example application that produces and consumes Kafka messages.

The above is the detailed content of Starting from scratch: Springboot guide to quickly build kafka integrated environment. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

How to implement real-time stock analysis using PHP and Kafka

Jun 28, 2023 am 10:04 AM

How to implement real-time stock analysis using PHP and Kafka

Jun 28, 2023 am 10:04 AM

With the development of the Internet and technology, digital investment has become a topic of increasing concern. Many investors continue to explore and study investment strategies, hoping to obtain a higher return on investment. In stock trading, real-time stock analysis is very important for decision-making, and the use of Kafka real-time message queue and PHP technology is an efficient and practical means. 1. Introduction to Kafka Kafka is a high-throughput distributed publish and subscribe messaging system developed by LinkedIn. The main features of Kafka are

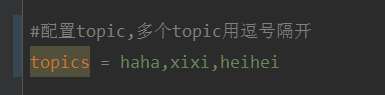

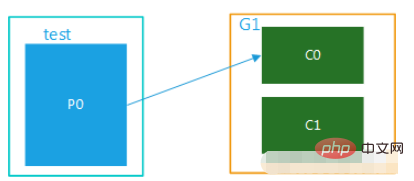

How to dynamically specify multiple topics with @KafkaListener in springboot+kafka

May 20, 2023 pm 08:58 PM

How to dynamically specify multiple topics with @KafkaListener in springboot+kafka

May 20, 2023 pm 08:58 PM

Explain that this project is a springboot+kafak integration project, so it uses the kafak consumption annotation @KafkaListener in springboot. First, configure multiple topics separated by commas in application.properties. Method: Use Spring’s SpEl expression to configure topics as: @KafkaListener(topics="#{’${topics}’.split(’,’)}") to run the program. The console printing effect is as follows

How SpringBoot integrates Kafka configuration tool class

May 12, 2023 pm 09:58 PM

How SpringBoot integrates Kafka configuration tool class

May 12, 2023 pm 09:58 PM

spring-kafka is based on the integration of the java version of kafkaclient and spring. It provides KafkaTemplate, which encapsulates various methods for easy operation. It encapsulates apache's kafka-client, and there is no need to import the client to depend on the org.springframework.kafkaspring-kafkaYML configuration. kafka:#bootstrap-servers:server1:9092,server2:9093#kafka development address,#producer configuration producer:#serialization and deserialization class key provided by Kafka

How to build real-time data processing applications using React and Apache Kafka

Sep 27, 2023 pm 02:25 PM

How to build real-time data processing applications using React and Apache Kafka

Sep 27, 2023 pm 02:25 PM

How to use React and Apache Kafka to build real-time data processing applications Introduction: With the rise of big data and real-time data processing, building real-time data processing applications has become the pursuit of many developers. The combination of React, a popular front-end framework, and Apache Kafka, a high-performance distributed messaging system, can help us build real-time data processing applications. This article will introduce how to use React and Apache Kafka to build real-time data processing applications, and

Five selections of visualization tools for exploring Kafka

Feb 01, 2024 am 08:03 AM

Five selections of visualization tools for exploring Kafka

Feb 01, 2024 am 08:03 AM

Five options for Kafka visualization tools ApacheKafka is a distributed stream processing platform capable of processing large amounts of real-time data. It is widely used to build real-time data pipelines, message queues, and event-driven applications. Kafka's visualization tools can help users monitor and manage Kafka clusters and better understand Kafka data flows. The following is an introduction to five popular Kafka visualization tools: ConfluentControlCenterConfluent

Comparative analysis of kafka visualization tools: How to choose the most appropriate tool?

Jan 05, 2024 pm 12:15 PM

Comparative analysis of kafka visualization tools: How to choose the most appropriate tool?

Jan 05, 2024 pm 12:15 PM

How to choose the right Kafka visualization tool? Comparative analysis of five tools Introduction: Kafka is a high-performance, high-throughput distributed message queue system that is widely used in the field of big data. With the popularity of Kafka, more and more enterprises and developers need a visual tool to easily monitor and manage Kafka clusters. This article will introduce five commonly used Kafka visualization tools and compare their features and functions to help readers choose the tool that suits their needs. 1. KafkaManager

Sample code for springboot project to configure multiple kafka

May 14, 2023 pm 12:28 PM

Sample code for springboot project to configure multiple kafka

May 14, 2023 pm 12:28 PM

1.spring-kafkaorg.springframework.kafkaspring-kafka1.3.5.RELEASE2. Configuration file related information kafka.bootstrap-servers=localhost:9092kafka.consumer.group.id=20230321#The number of threads that can be consumed concurrently (usually consistent with the number of partitions )kafka.consumer.concurrency=10kafka.consumer.enable.auto.commit=falsekafka.boo

The practice of go-zero and Kafka+Avro: building a high-performance interactive data processing system

Jun 23, 2023 am 09:04 AM

The practice of go-zero and Kafka+Avro: building a high-performance interactive data processing system

Jun 23, 2023 am 09:04 AM

In recent years, with the rise of big data and active open source communities, more and more enterprises have begun to look for high-performance interactive data processing systems to meet the growing data needs. In this wave of technology upgrades, go-zero and Kafka+Avro are being paid attention to and adopted by more and more enterprises. go-zero is a microservice framework developed based on the Golang language. It has the characteristics of high performance, ease of use, easy expansion, and easy maintenance. It is designed to help enterprises quickly build efficient microservice application systems. its rapid growth