Crawling music URLs from QQ Music with Python and batch downloading

This article shows how to use Python to crawl music URLs from QQ Music and download the tracks in batches, with a detailed walkthrough and sample code. It should be a useful reference for anyone who needs it.

Preface

QQ Music has a large catalogue. Sometimes I want to download a good song, but downloading from the web page requires an annoying login every time. Hence this qqmusic crawler. As I see it, the core of a for-loop crawler is finding the URL of the element to be crawled (don't laugh at me if I'm wrong).

The implementation is as follows

#Find the url:

This url is not as easy to find as on other websites, and tracking it down wore me out. The main difficulty is the sheer amount of data: I had to pick the useful pieces out of it and combine them into the real music url. Here are the intermediate URLs I compiled yesterday:

#url1: https://c.y.qq.com/soso/fcgi-bin/client_search_cp?&lossless=0&flag_qc=0&p=1&n=20&w=雨蝶

#url2: https://c.y.qq.com/base/fcgi-bin/fcg_music_express_mobile3.fcg?&jsonpCallback=MusicJsonCallback&cid=205361747&songmid=[songmid]&filename=C400[mid].m4a&guid=6612300644

#url3: http://dl.stream.qqmusic.qq.com/[filename]?vkey=[vkey] (where [vkey] is replaced by the string unique to each track)
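The query string of url1 can also be assembled from a dictionary instead of by hand, which takes care of percent-encoding a Chinese keyword. The following is only a sketch: `build_search_url` is a hypothetical helper, and reading `p` as the page number and `n` as the number of results per page is an assumption that the article does not confirm.

```python
from urllib.parse import urlencode

SEARCH_ENDPOINT = "https://c.y.qq.com/soso/fcgi-bin/client_search_cp"


def build_search_url(word, page=1, count=20):
    """Assemble url1 for a search keyword (hypothetical helper)."""
    # Assumption: p is the page number and n the page size;
    # the article only ever uses p=1 and n=20.
    params = {"lossless": 0, "flag_qc": 0, "p": page, "n": count, "w": word}
    # urlencode percent-encodes non-ASCII keywords as UTF-8.
    return SEARCH_ENDPOINT + "?" + urlencode(params)


print(build_search_url("雨蝶"))
```

Keeping the parameters in a dictionary makes it easy to see which values change between requests and which are fixed.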

#requests(url1)

The search result list returned by this request provides the songmid and mid of each track (as far as the author has observed, these two values are unique to each track). With these two values, url2 can be filled in completely.

#requests(url2)

This returns the vkey of each track in the search results. From the author's observation, the filename is C400 followed by the track's mid and .m4a (the code reads it from the media_mid field), which determines url3, the real url of the music. Because the author has not studied the other url parameters thoroughly enough, at most 20 music urls are returned each time. With the url, you can enjoy Tencent's music to your heart's content.
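The last step, turning a mid and a vkey into url3, can be written as one small function. This is a minimal sketch: `build_stream_url` is a hypothetical name, and the `guid`, `uin` and `fromtag` values are simply copied from the article's code without knowing what the server does with them.

```python
from urllib.parse import urlencode

STREAM_HOST = "http://dl.stream.qqmusic.qq.com/"


def build_stream_url(media_mid, vkey, guid="6612300644"):
    """Compose url3 from a track's media_mid and its vkey (hypothetical helper)."""
    # The filename pattern C400<mid>.m4a follows the author's observation.
    filename = "C400" + media_mid + ".m4a"
    # guid, uin and fromtag are the fixed values used in the article's code.
    query = urlencode({"vkey": vkey, "guid": guid, "uin": 0, "fromtag": 66})
    return STREAM_HOST + filename + "?" + query


# Placeholder values, not real identifiers.
print(build_stream_url("MID_PLACEHOLDER", "VKEY_PLACEHOLDER"))
```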

Here is the code that builds srcs:

    import json
    import urllib.request  # used by the download loop further down

    import requests

    word = '雨蝶'

    # Step 1: search for the keyword (url1) and strip the JSONP wrapper from the response.
    res1 = requests.get('https://c.y.qq.com/soso/fcgi-bin/client_search_cp'
                        '?&t=0&aggr=1&cr=1&catZhida=1&lossless=0&flag_qc=0&p=1&n=20&w=' + word)
    jm1 = json.loads(res1.text.strip('callback()[]'))
    jm1 = jm1['data']['song']['list']

    mids = []
    songmids = []
    srcs = []
    songnames = []
    singers = []

    # Collect the identifiers and display names of every search result.
    for j in jm1:
        try:
            # Read all four fields first so the lists stay aligned if one is missing.
            mid, songmid = j['media_mid'], j['songmid']
            songname, singer = j['songname'], j['singer'][0]['name']
        except (KeyError, IndexError):
            print('wrong')
            continue
        mids.append(mid)
        songmids.append(songmid)
        songnames.append(songname)
        singers.append(singer)

    # Step 2: request url2 for each track to get its vkey, then assemble url3.
    for n in range(len(mids)):
        res2 = requests.get('https://c.y.qq.com/base/fcgi-bin/fcg_music_express_mobile3.fcg'
                            '?&jsonpCallback=MusicJsonCallback&cid=205361747&songmid=' + songmids[n]
                            + '&filename=C400' + mids[n] + '.m4a&guid=6612300644')
        jm2 = json.loads(res2.text)
        vkey = jm2['data']['items'][0]['vkey']
        srcs.append('http://dl.stream.qqmusic.qq.com/C400' + mids[n] + '.m4a?vkey=' + vkey
                    + '&guid=6612300644&uin=0&fromtag=66')
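The code above removes the JSONP wrapper with `str.strip('callback()[]')`, which strips any of those characters from both ends of the text rather than the exact `callback(` prefix. A slightly more explicit alternative is to cut between the first `(` and the last `)`. The sketch below is not the author's method; `parse_jsonp` is a hypothetical helper, and the sample string is made up to mirror the keys the article's code reads.

```python
import json


def parse_jsonp(text):
    """Return the JSON payload of a response shaped like callback({...})."""
    text = text.strip()
    # Plain JSON needs no unwrapping.
    if text.startswith(("{", "[")):
        return json.loads(text)
    start, end = text.find("("), text.rfind(")")
    if start == -1 or end <= start:
        raise ValueError("not a JSONP response")
    return json.loads(text[start + 1:end])


# Made-up sample with the same nesting as the keys used in the article.
sample = 'callback({"data": {"song": {"list": [{"songmid": "a1", "media_mid": "b2"}]}}})'
songs = parse_jsonp(sample)["data"]["song"]["list"]
print(songs[0]["songmid"], songs[0]["media_mid"])
```

Because the function also accepts plain JSON, the same call could be used for both responses regardless of whether the server wraps them.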

With srcs, downloading is naturally no problem. Of course, you can also copy the src to the browser to download the singer and song title. You can also use large Python to download in batches, which is nothing more than a loop, similar to the method we used to download sogou

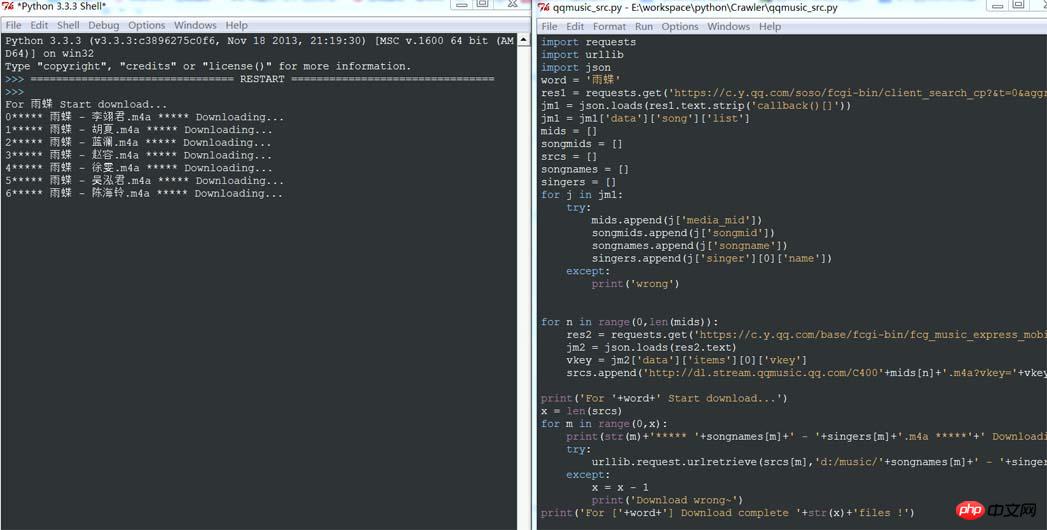

pictures: (author's py version: python3.3.3)

    import urllib.request  # harmless if already imported at the top of the file

    print('For ' + word + ' Start download...')
    x = len(srcs)  # number of files downloaded successfully
    for m in range(len(srcs)):
        filename = songnames[m] + ' - ' + singers[m] + '.m4a'
        print(str(m) + '***** ' + filename + ' *****' + ' Downloading...')
        try:
            urllib.request.urlretrieve(srcs[m], 'd:/music/' + filename)
        except Exception:
            # Count the failure and carry on with the next track.
            x = x - 1
            print('Download wrong~')
    print('For [' + word + '] Download complete ' + str(x) + ' files!')

The two pieces of code above go in the same py file; running it downloads the music that matches the keyword.
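One detail worth guarding against: the download loop uses the song title and singer directly as the file name, and Windows does not allow characters such as `/`, `:` or `?` in file names, so a title containing one of them would make that download fail. A minimal sketch of a sanitising step follows; `safe_filename` and the `music` directory are hypothetical and not part of the author's code.

```python
import re
from pathlib import Path


def safe_filename(songname, singer, ext=".m4a"):
    """Build '<songname> - <singer><ext>' without characters Windows rejects."""
    name = f"{songname} - {singer}"
    # Replace \ / : * ? " < > | with underscores.
    name = re.sub(r'[\\/:*?"<>|]', "_", name)
    # Windows also dislikes names that end in a space or a dot.
    name = name.strip(" .")
    return (name or "untitled") + ext


target_dir = Path("music")  # hypothetical download directory
print(target_dir / safe_filename("A/B: test?", "Some Singer"))
```

The result of `safe_filename(songnames[m], singers[m])` could then replace the hand-built file name passed to `urlretrieve`.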

#Running effect:

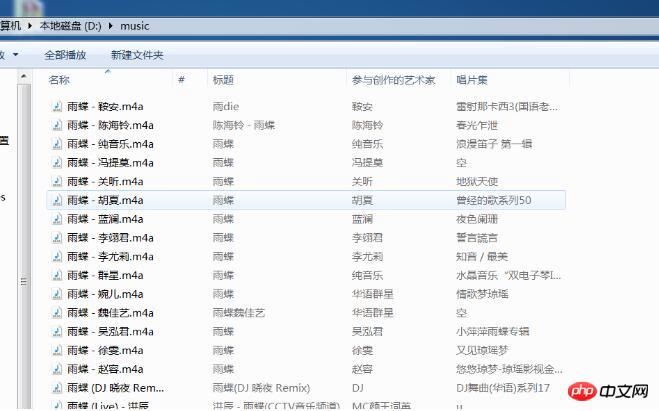

The download starts. Go to the download directory and take a look:

The music has been downloaded successfully.

At this point, the description of the idea and implementation of the qqmusic url crawler is complete.

#Usage:

Anyone who has built a musicplayer shell should be able to use this. In fact, my original purpose was to serve my HTML-based musicplayer, but I am currently stuck on calling py from js. I will keep looking into it. If you know how, please let me know. Thank you very much!
