PHP and phpSpider: How to deal with IP bans from anti-crawler websites?

Introduction:

When crawling websites or collecting data, we often run into sites that use anti-crawler measures and block IP addresses that send requests too frequently. This article shows how to handle such IP bans with PHP and the phpSpider framework, with code examples.

- How IP bans work and how to respond

Websites usually ban an IP address based on its request frequency or because its requests match predefined rules. The following methods help to deal with this:

- Use a proxy IP: Routing requests through a proxy means the target site sees the proxy's address instead of your own, which lowers the chance of your own IP being banned. This is a relatively simple and direct method. In phpSpider, the proxy is set through the requests class. The sample code is as follows:

<?php
require 'vendor/autoload.php';

use phpspider\core\phpspider;
use phpspider\core\requests;

// Set the proxy IP (replace the placeholders with your proxy's address and port).
// Check the set_proxy() signature in your installed phpspider version before relying on this call.
requests::set_proxy('http', 'IP address', 'port number');

// Set the user agent to mimic a real browser
requests::set_useragent('Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.3');

// Other request settings...

$configs = array(
    'name' => 'Proxy IP example',
    'log_show' => true,
    'user_agent' => 'Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)',
    'domains' => array(
        'example.com',
    ),
    'scan_urls' => array(
        'http://example.com/',
    ),
    'list_url_regex' => array(
        "http://example.com/list/\d+",
    ),
    'content_url_regex' => array(
        "http://example.com/content/\d+",
    ),
    // Other crawler settings...
);

$spider = new phpspider($configs);
$spider->start();

- Use an IP proxy pool: Maintain a pool of stable, working proxy IPs and pick proxies from it at random to reduce the risk of being banned. You can use a third-party proxy service or build your own pool. The sample code is as follows:

<?php
require 'vendor/autoload.php';

use phpspider\core\phpspider;
use phpspider\core\requests;

// Fetch a proxy IP
function get_proxy_ip()
{
    // Pick one IP at random from the proxy pool
    // ... code that fetches a proxy IP from the pool and assigns it to $proxy_ip
    return $proxy_ip;
}

// Set the proxy IP
// Check the set_proxy() signature in your installed phpspider version before relying on this call.
requests::set_proxy('http', get_proxy_ip());

// Other request settings...

$configs = array(
    // Crawler settings
    // ...
);

$spider = new phpspider($configs);
$spider->start();

- Adjust the request frequency: If you are banned for sending requests too often, lower the request rate and increase the interval between requests so that you do not send a large number of requests in a short period of time. The sample code is as follows:

<?php
require 'vendor/autoload.php';

use phpspider\core\phpspider;
use phpspider\core\requests;

// Set the interval between requests
// Verify that set_sleep_time() is available in your installed phpspider version.
requests::set_sleep_time(1000); // 1 second

// Other request settings...

$configs = array(
    // Crawler settings
    // ...
);

$spider = new phpspider($configs);
$spider->start();

- Use the phpSpider framework to counter anti-crawler measures

phpSpider is a PHP web crawler framework that simplifies crawler development and provides a number of commonly used features. When crawling sites that use anti-crawler measures, we can apply the corresponding countermeasures with the features phpSpider provides. Two common ones, with sample code, are:

- User-Agent setting: Send a browser-like User-Agent header so that requests look as if they come from a browser, which makes it less likely that the site identifies them as a crawler. The sample code is as follows:

<?php
require 'vendor/autoload.php';

use phpspider\core\phpspider;
use phpspider\core\requests;
use phpspider\core\selector;

// Set the User-Agent
requests::set_useragent('Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.3');

// Other request settings...

$configs = array(
    // Crawler settings
    // ...
);

$spider = new phpspider($configs);
$spider->start();

- Referer setting: Set a plausible Referer value to indicate which page the request supposedly came from. This can sometimes get past some anti-crawler checks. The sample code is as follows:

<?php
require 'vendor/autoload.php';

use phpspider\core\phpspider;
use phpspider\core\requests;

// Set the Referer
// Verify the method name against your installed phpspider version.
requests::referer('http://www.example.com');

// Other request settings...

$configs = array(
    // Crawler settings
    // ...
);

$spider = new phpspider($configs);
$spider->start();

Summary:

This article described how to deal with IP bans from anti-crawler websites using PHP and the phpSpider framework. Using a proxy IP or an IP proxy pool and adjusting the request frequency reduce the risk of being banned. In addition, phpSpider's User-Agent and Referer settings help simulate browser behavior and further counter anti-crawler measures. I hope this article is helpful to developers working on web crawlers and data collection.
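The proxy pool and request interval ideas above can be sketched in plain PHP, independent of any framework. This is only an illustration under stated assumptions: the function names pick_proxy() and next_delay_ms() are hypothetical helpers, not part of phpSpider, and the proxy addresses are placeholders from the 192.0.2.0/24 documentation range rather than real proxies.

```php
<?php
// Hypothetical helpers for illustration; not part of phpSpider.

/**
 * Pick a random proxy from the pool, skipping any that are marked as banned.
 * Returns null when no usable proxy is left.
 */
function pick_proxy(array $pool, array $banned = []): ?string
{
    // Drop banned entries and reindex so array_rand() sees a clean list
    $usable = array_values(array_diff($pool, $banned));
    if ($usable === []) {
        return null;
    }
    return $usable[array_rand($usable)];
}

/**
 * Delay in milliseconds before the next request: a base interval plus
 * random jitter, so requests do not arrive at a fixed rhythm.
 */
function next_delay_ms(int $base_ms, int $jitter_ms): int
{
    return $base_ms + random_int(0, $jitter_ms);
}

// Placeholder addresses (documentation range), assumed for this sketch
$pool = [
    'http://192.0.2.10:8080',
    'http://192.0.2.11:8080',
    'http://192.0.2.12:8080',
];
$banned = ['http://192.0.2.11:8080'];

$proxy = pick_proxy($pool, $banned);
$delay = next_delay_ms(1000, 500);

echo "Next request via {$proxy} after {$delay} ms\n";
// usleep($delay * 1000); // uncomment to actually wait before the request
```

In a crawler, the value returned by pick_proxy() would be passed to whatever proxy setting the HTTP client or framework offers, and a proxy would be added to the banned list once the target site starts rejecting it.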

The above is the detailed content of PHP and phpSpider: How to deal with IP bans from anti-crawler websites?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

7 PHP Functions I Regret I Didn't Know Before

Nov 13, 2024 am 09:42 AM

7 PHP Functions I Regret I Didn't Know Before

Nov 13, 2024 am 09:42 AM

If you are an experienced PHP developer, you might have the feeling that you’ve been there and done that already.You have developed a significant number of applications, debugged millions of lines of code, and tweaked a bunch of scripts to achieve op

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

Visual Studio Code, also known as VS Code, is a free source code editor — or integrated development environment (IDE) — available for all major operating systems. With a large collection of extensions for many programming languages, VS Code can be c

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

JWT is an open standard based on JSON, used to securely transmit information between parties, mainly for identity authentication and information exchange. 1. JWT consists of three parts: Header, Payload and Signature. 2. The working principle of JWT includes three steps: generating JWT, verifying JWT and parsing Payload. 3. When using JWT for authentication in PHP, JWT can be generated and verified, and user role and permission information can be included in advanced usage. 4. Common errors include signature verification failure, token expiration, and payload oversized. Debugging skills include using debugging tools and logging. 5. Performance optimization and best practices include using appropriate signature algorithms, setting validity periods reasonably,

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

A string is a sequence of characters, including letters, numbers, and symbols. This tutorial will learn how to calculate the number of vowels in a given string in PHP using different methods. The vowels in English are a, e, i, o, u, and they can be uppercase or lowercase. What is a vowel? Vowels are alphabetic characters that represent a specific pronunciation. There are five vowels in English, including uppercase and lowercase: a, e, i, o, u Example 1 Input: String = "Tutorialspoint" Output: 6 explain The vowels in the string "Tutorialspoint" are u, o, i, a, o, i. There are 6 yuan in total

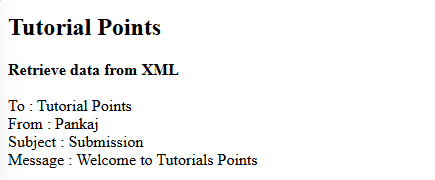

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

This tutorial demonstrates how to efficiently process XML documents using PHP. XML (eXtensible Markup Language) is a versatile text-based markup language designed for both human readability and machine parsing. It's commonly used for data storage an

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Static binding (static::) implements late static binding (LSB) in PHP, allowing calling classes to be referenced in static contexts rather than defining classes. 1) The parsing process is performed at runtime, 2) Look up the call class in the inheritance relationship, 3) It may bring performance overhead.

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are the magic methods of PHP? PHP's magic methods include: 1.\_\_construct, used to initialize objects; 2.\_\_destruct, used to clean up resources; 3.\_\_call, handle non-existent method calls; 4.\_\_get, implement dynamic attribute access; 5.\_\_set, implement dynamic attribute settings. These methods are automatically called in certain situations, improving code flexibility and efficiency.