360's Zhou Hongyi: The development of AI is an inevitable trend, and we must fully master the core technology of large models

On May 31, 360 Smart Life officially launched the 360 Intelligent Brain Vision large model and a range of new AI hardware products, and announced its official entry into the SMB market. After the launch event, Zhou Hongyi, founder of 360 Group, gave media interviews on several recent hot topics related to large models.

Zhou Hongyi believes that hallucination is currently the biggest bottleneck for large models, but that it is both a flaw and a feature. "There is an essential difference between large models and search. Search simply copies knowledge. Large models try to understand all knowledge and 'swallow' it, but this can cause details to be lost."

He explained that current large models can already be used for entertainment applications, for example writing stories along the lines of "Monkey King vs. Ultraman." At this stage, however, adapting large models to professional fields such as law, education, and healthcare is not feasible. "Our goal is to turn the search engine into a knowledge base to solve the problem of knowledge ambiguity. When questions of knowledge accuracy arise, we can verify and revise answers through search."

Zhou Hongyi described the visual large model released last night as a vertical large model for a specific category. "To put it simply, the current visual large model is built on the large language model, moving from an initial understanding of language to a deeper interpretation of 'language images.'"

At the same time, he admitted that 360 Intelligent Brain is not very different from other large models. "360 Intelligent Brain has two characteristics. The first is its training data: 360 has screened a large amount of high-quality data to support the model. The second is that, once we connect the model to the search interface, we can use search to enhance its knowledge and timeliness, so that hallucination, the most common problem of large models, can be avoided."

As for real-world use cases, Zhou Hongyi said that the visual large model could in future be combined with cameras and applied to car navigation and security. For security, he gave an example: if a child is standing on top of a tall cabinet, the visual large model can recognize the potential danger and issue an alarm.

In car navigation, a visual large model can detect potential dangers while the car is moving, raise an alarm, and retain the video, reducing the likelihood that the driver is put in danger. "Take the security incident on the streets of Shanghai two days ago: if the car behind had been supported by a visual large model, it could have identified the abnormal behavior of the car in front, automatically saved the video, and uploaded an alarm at the same time so the incident could be dealt with."

At the end of the interview, Zhou Hongyi shared his views on the AI-era security issues raised by the recent "AI face-swapping" cases. He emphasized that AI security must be taken seriously, and said that 360 has set up an internal AI security team dedicated to solving security problems in this field. He added: "In the future, AI professionals will need to apply secondary verification to AI-generated works, for example by adding fingerprint or voiceprint recognition."

"AI is an industrial revolution, and its development is an inevitable trend. We cannot give up eating for fear of choking just because AI has some security problems. What 360 needs to do now is minimize those problems. We must solve not only network security but also data security and artificial intelligence security. At the same time, 360 must invest as much as possible in large-model research and development, because large models are the pinnacle of digitalization and an industrial-revolution-level change. Whoever fails to master the core technology of large models, and whoever lacks practical application scenarios for them, will be eliminated by the industry. Therefore, 360 must not only set up a dedicated research team, but also find better and safer solutions through continuous experimentation," Zhou Hongyi said.

Hot Topics

Big model app Tencent Yuanbao is online! Hunyuan is upgraded to create an all-round AI assistant that can be carried anywhere

Jun 09, 2024 pm 10:38 PM

Big model app Tencent Yuanbao is online! Hunyuan is upgraded to create an all-round AI assistant that can be carried anywhere

Jun 09, 2024 pm 10:38 PM

On May 30, Tencent announced a comprehensive upgrade of its Hunyuan model. The App "Tencent Yuanbao" based on the Hunyuan model was officially launched and can be downloaded from Apple and Android app stores. Compared with the Hunyuan applet version in the previous testing stage, Tencent Yuanbao provides core capabilities such as AI search, AI summary, and AI writing for work efficiency scenarios; for daily life scenarios, Yuanbao's gameplay is also richer and provides multiple features. AI application, and new gameplay methods such as creating personal agents are added. "Tencent does not strive to be the first to make large models." Liu Yuhong, vice president of Tencent Cloud and head of Tencent Hunyuan large model, said: "In the past year, we continued to promote the capabilities of Tencent Hunyuan large model. In the rich and massive Polish technology in business scenarios while gaining insights into users’ real needs

Bytedance Beanbao large model released, Volcano Engine full-stack AI service helps enterprises intelligently transform

Jun 05, 2024 pm 07:59 PM

Bytedance Beanbao large model released, Volcano Engine full-stack AI service helps enterprises intelligently transform

Jun 05, 2024 pm 07:59 PM

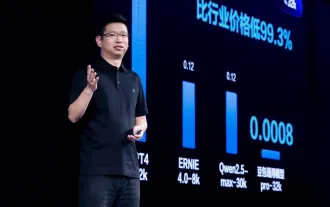

Tan Dai, President of Volcano Engine, said that companies that want to implement large models well face three key challenges: model effectiveness, inference costs, and implementation difficulty: they must have good basic large models as support to solve complex problems, and they must also have low-cost inference. Services allow large models to be widely used, and more tools, platforms and applications are needed to help companies implement scenarios. ——Tan Dai, President of Huoshan Engine 01. The large bean bag model makes its debut and is heavily used. Polishing the model effect is the most critical challenge for the implementation of AI. Tan Dai pointed out that only through extensive use can a good model be polished. Currently, the Doubao model processes 120 billion tokens of text and generates 30 million images every day. In order to help enterprises implement large-scale model scenarios, the beanbao large-scale model independently developed by ByteDance will be launched through the volcano

Using Shengteng AI technology, the Qinling·Qinchuan transportation model helps Xi'an build a smart transportation innovation center

Oct 15, 2023 am 08:17 AM

Using Shengteng AI technology, the Qinling·Qinchuan transportation model helps Xi'an build a smart transportation innovation center

Oct 15, 2023 am 08:17 AM

"High complexity, high fragmentation, and cross-domain" have always been the primary pain points on the road to digital and intelligent upgrading of the transportation industry. Recently, the "Qinling·Qinchuan Traffic Model" with a parameter scale of 100 billion, jointly built by China Vision, Xi'an Yanta District Government, and Xi'an Future Artificial Intelligence Computing Center, is oriented to the field of smart transportation and provides services to Xi'an and its surrounding areas. The region will create a fulcrum for smart transportation innovation. The "Qinling·Qinchuan Traffic Model" combines Xi'an's massive local traffic ecological data in open scenarios, the original advanced algorithm self-developed by China Science Vision, and the powerful computing power of Shengteng AI of Xi'an Future Artificial Intelligence Computing Center to provide road network monitoring, Smart transportation scenarios such as emergency command, maintenance management, and public travel bring about digital and intelligent changes. Traffic management has different characteristics in different cities, and the traffic on different roads

Uncovering the NVIDIA large model inference framework: TensorRT-LLM

Feb 01, 2024 pm 05:24 PM

Uncovering the NVIDIA large model inference framework: TensorRT-LLM

Feb 01, 2024 pm 05:24 PM

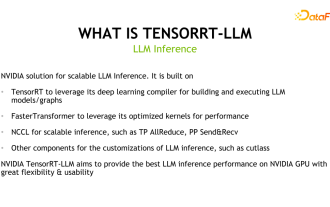

1. Product positioning of TensorRT-LLM TensorRT-LLM is a scalable inference solution developed by NVIDIA for large language models (LLM). It builds, compiles and executes calculation graphs based on the TensorRT deep learning compilation framework, and draws on the efficient Kernels implementation in FastTransformer. In addition, it utilizes NCCL for communication between devices. Developers can customize operators to meet specific needs based on technology development and demand differences, such as developing customized GEMM based on cutlass. TensorRT-LLM is NVIDIA's official inference solution, committed to providing high performance and continuously improving its practicality. TensorRT-LL

Benchmark GPT-4! China Mobile's Jiutian large model passed dual registration

Apr 04, 2024 am 09:31 AM

Benchmark GPT-4! China Mobile's Jiutian large model passed dual registration

Apr 04, 2024 am 09:31 AM

According to news on April 4, the Cyberspace Administration of China recently released a list of registered large models, and China Mobile’s “Jiutian Natural Language Interaction Large Model” was included in it, marking that China Mobile’s Jiutian AI large model can officially provide generative artificial intelligence services to the outside world. . China Mobile stated that this is the first large-scale model developed by a central enterprise to have passed both the national "Generative Artificial Intelligence Service Registration" and the "Domestic Deep Synthetic Service Algorithm Registration" dual registrations. According to reports, Jiutian’s natural language interaction large model has the characteristics of enhanced industry capabilities, security and credibility, and supports full-stack localization. It has formed various parameter versions such as 9 billion, 13.9 billion, 57 billion, and 100 billion, and can be flexibly deployed in Cloud, edge and end are different situations

Advanced practice of industrial knowledge graph

Jun 13, 2024 am 11:59 AM

Advanced practice of industrial knowledge graph

Jun 13, 2024 am 11:59 AM

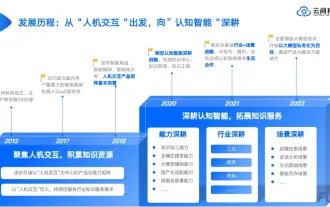

1. Background Introduction First, let’s introduce the development history of Yunwen Technology. Yunwen Technology Company...2023 is the period when large models are prevalent. Many companies believe that the importance of graphs has been greatly reduced after large models, and the preset information systems studied previously are no longer important. However, with the promotion of RAG and the prevalence of data governance, we have found that more efficient data governance and high-quality data are important prerequisites for improving the effectiveness of privatized large models. Therefore, more and more companies are beginning to pay attention to knowledge construction related content. This also promotes the construction and processing of knowledge to a higher level, where there are many techniques and methods that can be explored. It can be seen that the emergence of a new technology does not necessarily defeat all old technologies. It is also possible that the new technology and the old technology will be integrated with each other.

New test benchmark released, the most powerful open source Llama 3 is embarrassed

Apr 23, 2024 pm 12:13 PM

New test benchmark released, the most powerful open source Llama 3 is embarrassed

Apr 23, 2024 pm 12:13 PM

If the test questions are too simple, both top students and poor students can get 90 points, and the gap cannot be widened... With the release of stronger models such as Claude3, Llama3 and even GPT-5 later, the industry is in urgent need of a more difficult and differentiated model Benchmarks. LMSYS, the organization behind the large model arena, launched the next generation benchmark, Arena-Hard, which attracted widespread attention. There is also the latest reference for the strength of the two fine-tuned versions of Llama3 instructions. Compared with MTBench, which had similar scores before, the Arena-Hard discrimination increased from 22.6% to 87.4%, which is stronger and weaker at a glance. Arena-Hard is built using real-time human data from the arena and has a consistency rate of 89.1% with human preferences.

GPT Store can't even open its doors. How dare this domestic platform take this path? ?

Apr 19, 2024 pm 09:30 PM

GPT Store can't even open its doors. How dare this domestic platform take this path? ?

Apr 19, 2024 pm 09:30 PM

Pay attention, this man has connected more than 1,000 large models, allowing you to plug in and switch seamlessly. Recently, a visual AI workflow has been launched: giving you an intuitive drag-and-drop interface, you can drag, pull, and drag to arrange your own workflow on an infinite canvas. As the saying goes, war costs speed, and Qubit heard that within 48 hours of this AIWorkflow going online, users had already configured personal workflows with more than 100 nodes. Without further ado, what I want to talk about today is Dify, an LLMOps company, and its CEO Zhang Luyu. Zhang Luyu is also the founder of Dify. Before joining the business, he had 11 years of experience in the Internet industry. I am engaged in product design, understand project management, and have some unique insights into SaaS. Later he