TSMC receives a large number of AI chip foundry orders to respond to the generative AI trend

May 23 news: After the generative AI chatbot ChatGPT caused a sensation, many technology giants joined the generative AI race. Companies such as Google have released competing products and continue to upgrade their AI chatbots and large language models.

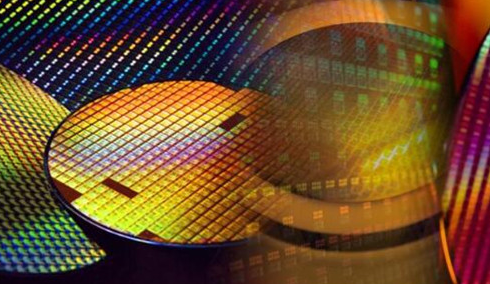

As research, development, and application of generative AI expand, demand for related chips will grow further, and chip manufacturers stand to benefit. According to media reports, TSMC, a leading wafer foundry, has won many AI chip foundry contracts.

According to industry insiders, TSMC has secured a large number of AI chip foundry orders. With demand for generative AI applications growing sharply, TSMC won these key orders with its 7nm and more advanced process nodes.

According to ITBEAR technology news, generative AI has made Nvidia's high-performance GPUs an important source of chip demand. OpenAI reportedly used 10,000 GPUs to train ChatGPT and, after launch, brought a further 25,000 Nvidia GPUs into use to meet server demand.

Market sources recently revealed that orders for Nvidia's A100 and H100 high-performance data center GPUs are increasing, and that Nvidia has correspondingly increased the volume of wafers it has produced at TSMC.

Driven by the rapid development of generative AI, technology giants are competing actively in both R&D and applications, which is pushing up chip demand. This in turn will further advance the chip foundry industry and bring more business opportunities and room for profit to foundries such as TSMC.

The rise of generative AI has opened up many new possibilities for the field of artificial intelligence. As the technology continues to advance and application scenarios expand, more innovation and progress can be expected.

NVIDIA H200 released: HBM capacity increased by 76%, the most powerful AI chip improves large model performance by up to 90%

Nov 14, 2023, 03:21 PM

November 14 news: Nvidia officially released the new H200 GPU at the "Supercomputing23" conference on the morning of the 13th local time and updated the GH200 product line. The H200 is still built on the existing Hopper architecture used by the H100, but adds more high-bandwidth memory (HBM3e) to better handle the large data sets required to develop and deploy artificial intelligence, improving overall performance when running large models by 60% to 90% compared with the previous-generation H100. The updated GH200 will also power the next generation of AI supercomputers. In 2024, more than 200 exaflops of AI computing power will come online. H200

MediaTek is rumored to have won a large order from Google for server AI chips and will supply high-speed Serdes chips

Jun 19, 2023, 08:23 PM

On June 19, according to media reports in Taiwan, China, Google has approached MediaTek to cooperate on developing its latest server-oriented AI chip, which it plans to have manufactured on TSMC's 5nm process, with mass production planned for early next year. According to the report, sources revealed that under this cooperation MediaTek will provide serializer and deserializer (SerDes) solutions and help integrate Google's self-developed tensor processing unit (TPU), helping Google create its latest server AI chip, which is said to be more powerful than CPU or GPU architectures. The industry points out that many of Google's current services are related to AI. It invested in deep learning technology many years ago and found that using GPUs to perform AI calculations is very expensive. Therefore, Google decided to

The next big thing in AI: Peak performance of NVIDIA B100 chip and OpenAI GPT-5 model

Nov 18, 2023, 03:39 PM

After the debut of the NVIDIA H200, billed as the world's most powerful AI chip, the industry began to look forward to NVIDIA's more powerful B100 chip. At the same time, OpenAI, the most popular AI start-up this year, has begun developing a more powerful and complex GPT-5 model. Guotai Junan pointed out in its latest research report that the B100 and GPT-5 are expected to be released in 2024, and that these major upgrades may unlock unprecedented productivity. The agency stated that it is optimistic that AI will enter a period of rapid development and that its visibility will continue until 2024. Compared with previous generations of products, how powerful are B100 and GPT-5? Nvidia and OpenAI have already given a preview: B100 may be more than 4 times faster than H100, and GPT-5 may achieve super

Kneron launches latest AI chip KL730 to drive large-scale application of lightweight GPT solutions

Aug 17, 2023, 01:37 PM

The KL730's progress in energy efficiency addresses the biggest bottleneck in deploying artificial intelligence models: energy costs. Compared with industry peers and previous Kneron chips, the KL730 improves energy efficiency by 3 to 4 times. The KL730 supports the most advanced lightweight GPT large language models, such as nanoGPT, and provides effective computing power of 0.35-4 tera per second. AI company Kneron today announced the release of the KL730 chip, which integrates an automotive-grade NPU and image signal processing (ISP) to bring safe, low-energy AI capabilities to application scenarios such as edge servers, smart homes, and automotive assisted driving systems. San Diego-based Kneron is known for its groundbreaking neural processing units (NPUs), and its latest chip, the KL730, aims to achieve

AI chips are out of stock globally!

May 30, 2023, 09:53 PM

Google's CEO likened the AI revolution to humanity's use of fire, but the digital fire that fuels the industry, AI chips, is now hard to come by. The new generation of advanced chips that drive AI operations are almost all made by NVIDIA. As ChatGPT's popularity has exploded, market demand for NVIDIA graphics processing units (GPUs) far exceeds supply. "Because there is a shortage, the key is your circle of friends," said Sharon Zhou, co-founder and CEO of Lamini, a startup that helps companies build AI models such as chatbots. "It's like toilet paper during the pandemic." This situation has limited the computing power that cloud service providers such as Amazon and Microsoft can provide to customers such as OpenAI, the creator of ChatGPT.

NVIDIA launches new AI chip H200, performance improved by 90%! China's computing power achieves independent breakthrough!

Nov 14, 2023, 05:37 PM

While the world is still fixated on NVIDIA H100 chips and buying them in large quantities to meet the growing demand for AI computing power, NVIDIA quietly launched its latest AI chip, the H200, on Monday local time. The chip is used for training large AI models, and its performance is about 60% to 90% higher than that of the previous-generation H100. The H200 is an upgraded version of the Nvidia H100 and, like the H100, is based on the Hopper architecture. The main upgrade is 141GB of HBM3e video memory, with memory bandwidth increased from the H100's 3.35TB/s to 4.8TB/s. According to Nvidia's official website, the H200 is also the company's first chip to use HBM3e memory. This memory is faster and has larger capacity, so it is better suited to large language models.
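As a quick check on the figures quoted above, the following minimal Python sketch computes the relative gains. The 3.35 TB/s, 4.8 TB/s, and 141 GB values come from the teaser; the 80 GB H100 baseline is an assumption not stated there, and `percent_increase` is a helper introduced only for illustration.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative increase from old to new, in percent."""
    return (new - old) / old * 100.0


# Figures quoted in the teaser above.
H100_BANDWIDTH_TBPS = 3.35
H200_BANDWIDTH_TBPS = 4.8
H200_HBM_GB = 141

# Assumption (not stated in the teaser): the H100 baseline has 80 GB of HBM.
H100_HBM_GB = 80

bandwidth_gain = percent_increase(H100_BANDWIDTH_TBPS, H200_BANDWIDTH_TBPS)
capacity_gain = percent_increase(H100_HBM_GB, H200_HBM_GB)

print(f"Memory bandwidth gain: {bandwidth_gain:.2f}%")
print(f"HBM capacity gain: {capacity_gain:.2f}%")
```

Under that assumption, bandwidth rises by roughly 43% and capacity by roughly 76%, the latter in line with the 76% figure in the H200 release headline.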

Microsoft is developing its own AI chip 'Athena'

Apr 25, 2023, 01:07 PM

Microsoft is developing AI-optimized chips to reduce the cost of training generative AI models, such as the ones that power OpenAI's ChatGPT chatbot. The Information recently quoted two people familiar with the matter as saying that Microsoft has been developing a new chipset code-named "Athena" since at least 2019. Employees at Microsoft and OpenAI already have access to the new chips and are using them to test their performance on large language models such as GPT-4. Training large language models requires ingesting and analyzing large amounts of data so that the AI can create new output that imitates human conversation. This is a hallmark of generative AI models. This process requires a large number (on the order of tens of thousands) of A

Samsung and Naver jointly develop generative AI and AI chips

May 25, 2023, 11:58 AM

South Korea's two major technology giants, Samsung Electronics and Naver Corporation, have agreed to jointly develop a generative artificial intelligence enterprise platform to compete with global artificial intelligence tools such as ChatGPT. In their artificial intelligence collaboration, South Korea's largest online search engine service provider Naver will obtain semiconductor-related data from Samsung to create a generative artificial intelligence, which will then be further upgraded by Samsung. Once developed, the Korean-language AI tool will be used by Samsung's Device Solutions (DS) unit, which includes its semiconductor business, people familiar with the matter said. The two partners aim to launch the AI tool as early as October. After field testing, Samsung plans to bring use of generative AI tools to enterprises