The Institute of Software of the Chinese Academy of Sciences released a new CV model, ViG, that surpasses ViT in performance. Will it become the representative graph neural network of the future?

Is the network structure of computer vision about to undergo another innovation?

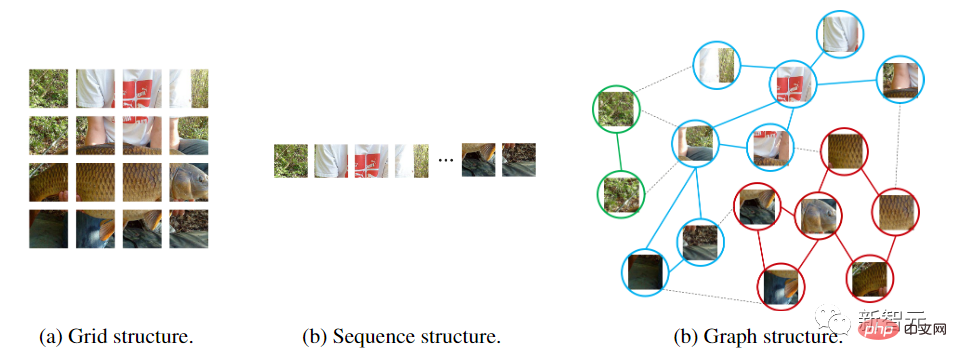

From convolutional neural networks to vision Transformers with attention mechanisms, neural network models treat the input image as a grid or a sequence of patches, but this representation cannot flexibly capture irregular and complex objects.

For example, when people look at a picture, they naturally divide it into multiple objects and establish spatial and other relationships among them. In other words, to the human brain the whole picture is effectively a graph, and the objects are nodes on that graph.

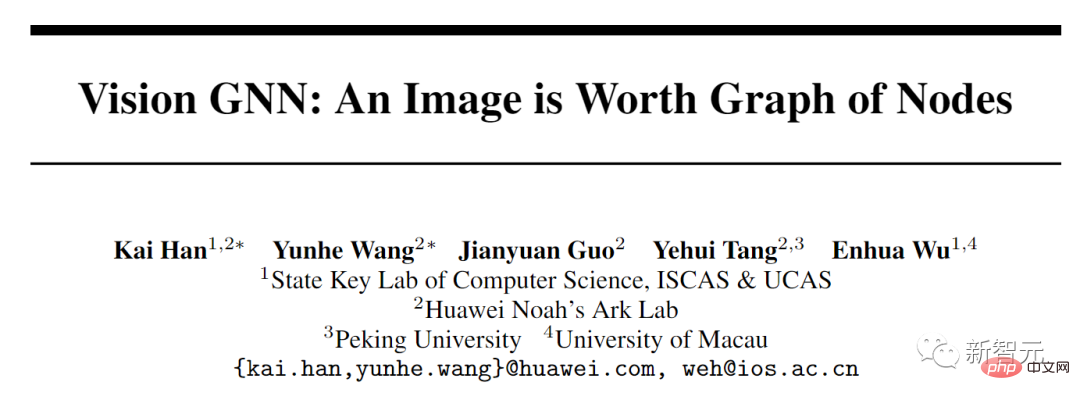

Recently, researchers from the Institute of Software of the Chinese Academy of Sciences, Huawei's Noah's Ark Laboratory, Peking University, and the University of Macau jointly proposed a new model architecture, Vision GNN (ViG), which extracts graph-level features from images for vision tasks.

Paper link: https://arxiv.org/pdf/2206.00272.pdf

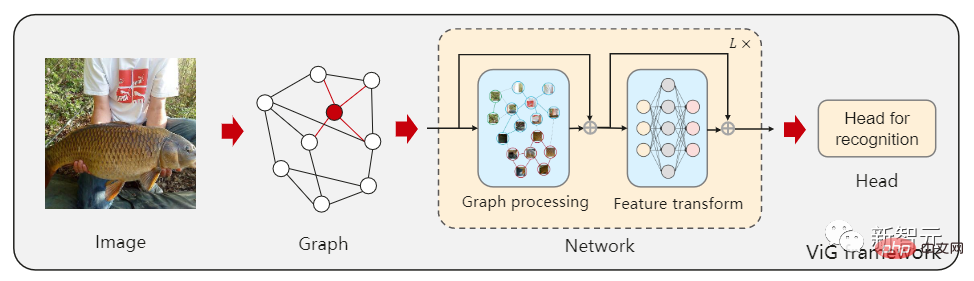

First, the image is divided into several patches that serve as the nodes of a graph, and the graph is built by connecting each patch to its nearest neighbors. The ViG model then transforms and exchanges information among all nodes in the graph.

ViG consists of two basic modules. The Grapher module uses graph convolution to aggregate and update graph information, and the FFN module uses two linear layers to transform node features.

Experiments on image recognition and object detection tasks also demonstrate the advantages of the ViG architecture. This pioneering study of GNNs on general vision tasks should provide useful inspiration and experience for future research.

One of the paper's authors is Professor Wu Enhua, a doctoral supervisor at the Institute of Software, Chinese Academy of Sciences, and an honorary professor at the University of Macau. He graduated from the Department of Engineering Mechanics and Mathematics of Tsinghua University in 1970 and received his PhD from the Department of Computer Science at the University of Manchester in the UK in 1980. His main research areas are computer graphics and virtual reality, including photorealistic graphics generation, physics-based simulation and real-time computing, physics-based modeling and rendering, image and video processing and modeling, visual computing, and machine learning.

Vision GNN

Network structure is often the most critical factor in improving performance. Provided the quantity and quality of data are sufficient, switching the model from a CNN to a ViT can yield better performance.

But different networks treat input images differently. A CNN slides a window over the image, which introduces translation invariance and locality.

ViT and multi-layer perceptron (MLP) models convert the image into a sequence of patches, for example by dividing a 224×224 image into 16×16 patches, which yields an input sequence of length 196.

Graph neural networks are more flexible. For example, a basic task in computer vision is to identify objects in images. Since objects are usually not neatly rectangular and may have irregular shapes, the grid or sequence structures used in previous networks such as ResNet and ViT are redundant and inflexible for representing them.
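The sequence length of 196 follows from simple arithmetic: 224 / 16 = 14 patches per side, and 14 × 14 = 196. The following minimal NumPy sketch illustrates the split; the function name `patchify` and the 3-channel input are assumptions for illustration, not code from the paper.

```python
import numpy as np

def patchify(image: np.ndarray, patch: int) -> np.ndarray:
    """Split an (H, W, C) image into a sequence of flattened patches."""
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0, "image size must be divisible by patch size"
    rows, cols = h // patch, w // patch
    # (rows, patch, cols, patch, C) -> (rows, cols, patch, patch, C)
    grid = image.reshape(rows, patch, cols, patch, c).transpose(0, 2, 1, 3, 4)
    # One row per patch, in raster order.
    return grid.reshape(rows * cols, patch * patch * c)

image = np.zeros((224, 224, 3), dtype=np.float32)  # hypothetical RGB input
tokens = patchify(image, 16)
print(tokens.shape)  # (196, 768)
```

Each of the 196 rows holds one flattened 16×16×3 patch, which is the form a ViT or MLP model would then embed.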

An object can be viewed as consisting of multiple parts. For example, a person can be roughly divided into head, upper body, arms and legs.

These parts, connected by joints, naturally form a graph structure. By analyzing the graph, we can recognize that the object is probably a human.

In addition, a graph is a general data structure, and grids and sequences can be regarded as special cases of graphs. Treating an image as a graph is therefore more flexible and effective for visual perception.

Using a graph structure requires dividing the input image into several patches and treating each patch as a node. Treating each pixel as a node would produce far too many nodes in the graph (>10K).
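As a rough illustration of building the graph over patch nodes, the sketch below connects each node to its nearest neighbors in feature space. The function name `knn_graph`, the feature dimension of 32, the choice of `k=9`, the use of Euclidean distance, and the exclusion of self-loops are all assumptions made for this example; the article does not specify them.

```python
import numpy as np

def knn_graph(features: np.ndarray, k: int) -> np.ndarray:
    """Return an (N, k) array holding the indices of each node's k nearest neighbors."""
    # Pairwise squared Euclidean distances between all node features.
    sq_norms = (features ** 2).sum(axis=1)
    dist = sq_norms[:, None] + sq_norms[None, :] - 2.0 * features @ features.T
    np.fill_diagonal(dist, np.inf)  # assumption: a node is not its own neighbor
    return np.argsort(dist, axis=1)[:, :k]

rng = np.random.default_rng(0)
nodes = rng.normal(size=(196, 32))  # 196 patch nodes with hypothetical 32-dim features
neighbors = knn_graph(nodes, k=9)   # k=9 is an illustrative choice
print(neighbors.shape)              # (196, 9)

# Node counts for a 224x224 image: one node per pixel vs. one node per 16x16 patch.
print(224 * 224, "pixel nodes vs", nodes.shape[0], "patch nodes")
```

With patches as nodes the dense distance matrix is only 196 × 196, whereas one node per pixel would give 50,176 nodes and a far larger matrix.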

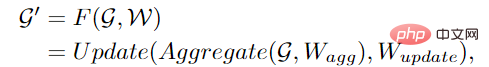

After the graph is built, a graph convolutional network (GCN) first aggregates features between adjacent nodes to extract a representation of the image.

To let the GCN obtain more diverse features, the authors apply a multi-head operation to the graph convolution. The aggregated features are updated by heads with different weights, and the outputs of all heads are finally concatenated to form the image representation.
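The aggregate-then-update pattern with multiple heads can be sketched as follows. The article does not say which aggregation operator is used, so this example assumes max-relative aggregation (the element-wise maximum of neighbor-minus-node differences) as one possible choice; the function names, the ring-shaped toy graph, and all dimensions are hypothetical.

```python
import numpy as np

def max_relative_aggregate(x: np.ndarray, neighbors: np.ndarray) -> np.ndarray:
    """For each node, take the element-wise max over (neighbor - node) differences."""
    diffs = x[neighbors] - x[:, None, :]  # (N, k, D)
    return diffs.max(axis=1)              # (N, D)

def multi_head_update(x, agg, head_weights):
    """Split node and aggregated features into heads, update each head with its
    own weight matrix, and concatenate the head outputs."""
    h = len(head_weights)
    x_heads = np.split(x, h, axis=1)
    agg_heads = np.split(agg, h, axis=1)
    outs = [
        np.concatenate([xh, ah], axis=1) @ w  # (N, 2*D/h) @ (2*D/h, D/h)
        for xh, ah, w in zip(x_heads, agg_heads, head_weights)
    ]
    return np.concatenate(outs, axis=1)       # (N, D)

rng = np.random.default_rng(0)
n, d, heads = 196, 32, 4                      # hypothetical sizes
x = rng.normal(size=(n, d))
idx = np.arange(n)
neighbors = np.stack([(idx - 1) % n, (idx + 1) % n], axis=1)  # toy ring graph
weights = [rng.normal(size=(2 * d // heads, d // heads)) for _ in range(heads)]

agg = max_relative_aggregate(x, neighbors)
out = multi_head_update(x, agg, weights)
print(out.shape)  # (196, 32)
```

Because each head has its own weight matrix, the heads can be updated independently before their outputs are concatenated.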

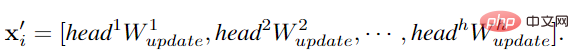

Previous GCNs usually stack several graph convolutional layers to extract aggregated features from graph data, but the over-smoothing phenomenon in deep GCNs reduces the distinctiveness of node features, which degrades visual recognition performance.

To alleviate this problem, the researchers introduce more feature transformations and nonlinear activation functions into the ViG block.

First, a linear layer is applied before and after the graph convolution to project node features into the same domain and increase feature diversity. A nonlinear activation function is inserted after the graph convolution to avoid layer collapse.

To further improve the feature transformation capacity and alleviate over-smoothing, a feed-forward network (FFN) is also applied to each node. The FFN module is a simple multi-layer perceptron with two fully connected layers.

In the Grapher and FFN modules, batch normalization is applied after every fully connected layer or graph convolution layer. A Grapher module stacked with an FFN module constitutes a ViG block, which is the basic unit for building large networks.
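Putting the pieces together, a ViG block might look roughly like the NumPy sketch below. Several details are assumptions made only for illustration: ReLU as the activation, residual connections around both modules, a max-relative graph convolution, batch normalization without learned scale and shift, a 4× hidden width in the FFN, and a toy ring graph. None of these are stated in the article.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def batch_norm(x, eps=1e-5):
    """Normalize each feature across nodes (no learned scale/shift in this sketch)."""
    return (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)

def graph_conv(x, neighbors, w):
    """Assumed graph convolution: concatenate each node with its max-relative
    neighbor aggregate, then apply a linear map."""
    agg = (x[neighbors] - x[:, None, :]).max(axis=1)
    return np.concatenate([x, agg], axis=1) @ w

def grapher(x, neighbors, p):
    y = batch_norm(x @ p["w_in"])                              # linear layer before graph conv
    y = relu(batch_norm(graph_conv(y, neighbors, p["w_gc"])))  # graph conv + activation
    y = batch_norm(y @ p["w_out"])                             # linear layer after graph conv
    return y + x                                               # assumed residual connection

def ffn(x, p):
    y = relu(batch_norm(x @ p["w1"]))  # first fully connected layer
    y = batch_norm(y @ p["w2"])        # second fully connected layer
    return y + x                       # assumed residual connection

def vig_block(x, neighbors, p):
    return ffn(grapher(x, neighbors, p), p)

rng = np.random.default_rng(0)
n, d = 196, 32                         # hypothetical node count and feature width
params = {
    "w_in": rng.normal(size=(d, d)) * 0.1,
    "w_gc": rng.normal(size=(2 * d, d)) * 0.1,
    "w_out": rng.normal(size=(d, d)) * 0.1,
    "w1": rng.normal(size=(d, 4 * d)) * 0.1,
    "w2": rng.normal(size=(4 * d, d)) * 0.1,
}
x = rng.normal(size=(n, d))
idx = np.arange(n)
neighbors = np.stack([(idx - 1) % n, (idx + 1) % n], axis=1)  # toy ring graph

out = vig_block(x, neighbors, params)
print(out.shape)  # (196, 32)
```

Since the block maps an (N, D) input to an (N, D) output, blocks of this shape can be stacked directly to form a deeper network.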

Compared with the original ResGCN, the newly proposed ViG can maintain the diversity of features. As more layers are added, the network can also learn stronger representations.

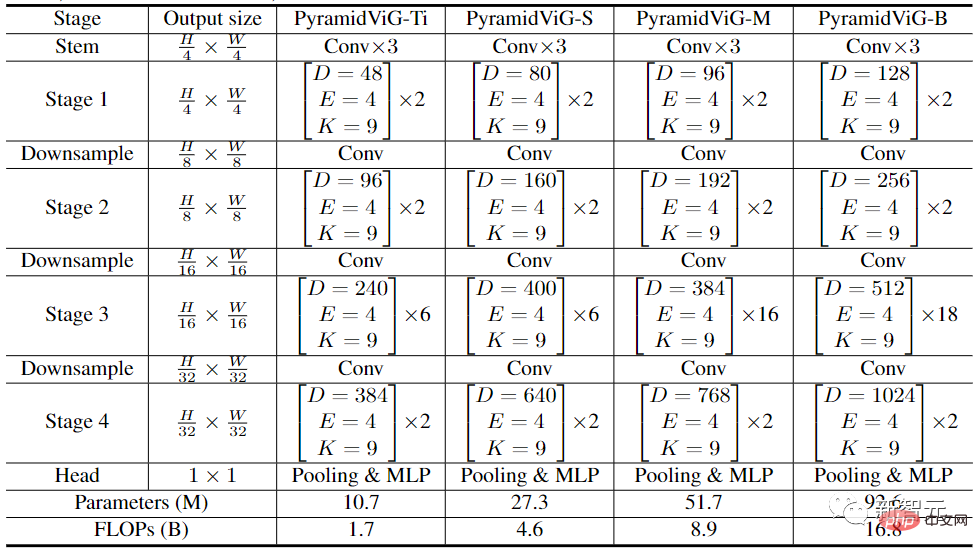

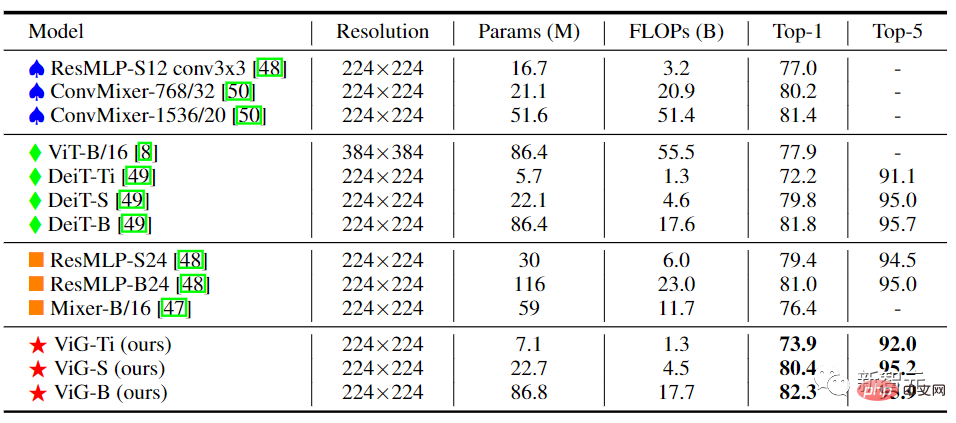

In computer vision architectures, commonly used Transformer models usually have an isotropic structure (such as ViT), while CNNs tend to use a pyramid structure (such as ResNet).

In order to compare with other types of neural networks, the researchers established two network architectures for ViG: isotropic and pyramid.

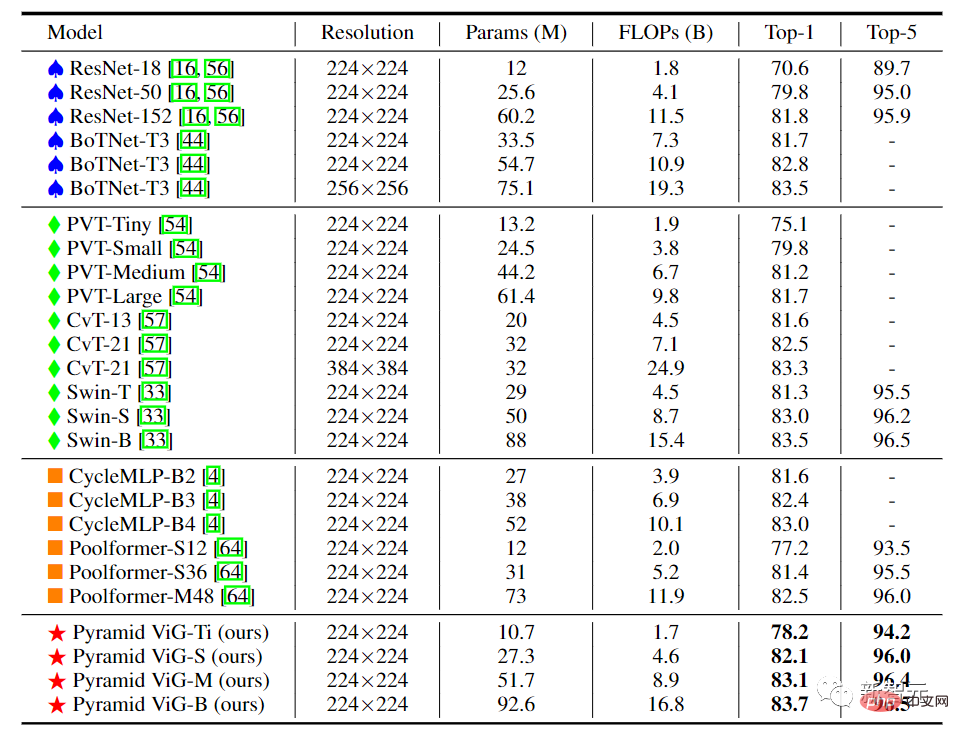

In the experimental comparison, the researchers used the ImageNet ILSVRC 2012 dataset for the image classification task. It contains 1000 categories, about 1.2M training images, and 50K validation images.

For the object detection task, they used the COCO 2017 dataset with 80 object categories, which includes 118K training images and 5000 validation images.

In the isotropic ViG architecture, the feature size stays unchanged throughout the main computation, which makes the network easy to scale and friendly to hardware acceleration. Compared with existing isotropic CNNs, Transformers, and MLPs, ViG performs better than the other types of networks. For example, ViG-Ti achieves a top-1 accuracy of 73.9%, which is 1.7 percentage points higher than DeiT-Ti at a similar computational cost.

In the pyramid ViG, the spatial size of the feature map is gradually reduced as the network deepens, exploiting the scale invariance of images to produce multi-scale features.

High-performance networks mostly use pyramid structures, such as ResNet, Swin Transformer, and CycleMLP. Compared with these representative pyramid networks, the Pyramid ViG series surpasses or rivals state-of-the-art pyramid networks, including CNNs, MLPs, and Transformers.

The results show that graph neural networks can complete visual tasks well and may become a basic component in computer vision systems.

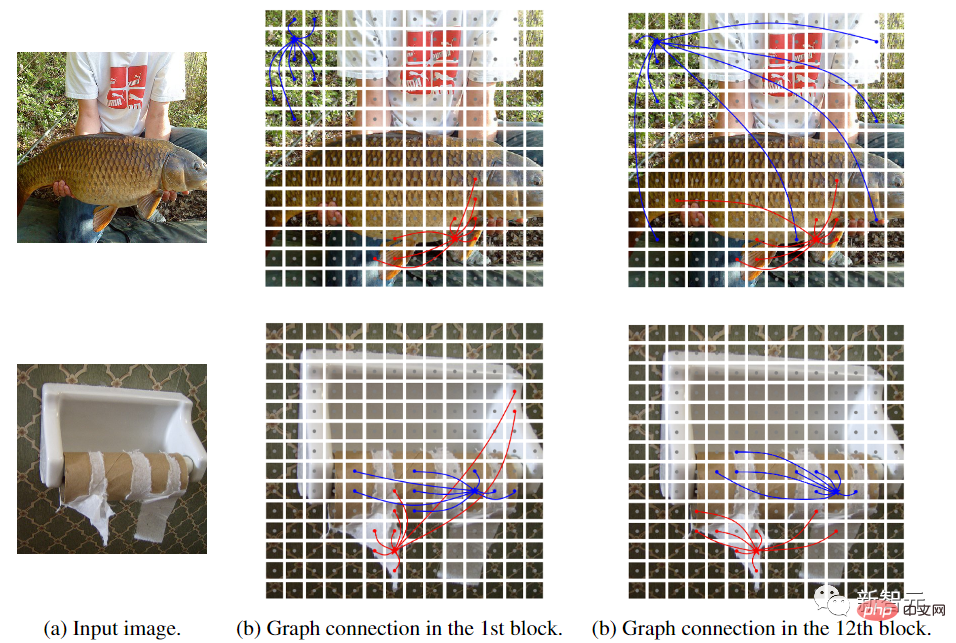

To better understand how the ViG model works, the researchers visualized the graph structure built in ViG-S. The figure plots the graphs of samples at two different depths (blocks 1 and 12). The pentagram marks the center node, and nodes of the same color are its neighbors. Only two center nodes are visualized, because drawing all the edges would look cluttered.

It can be observed that the ViG model can select content-related nodes as first-order neighbors. At shallow levels, neighbor nodes are often selected based on low-level and local features, such as color and texture. At deep levels, the neighbors of the central node are more semantic and belong to the same category. The ViG network can gradually connect nodes through their content and semantic representation, helping to better identify objects.
