How to efficiently configure OpCache to improve production environment performance?

PHP 7.3 OpCache Performance Tuning: Production Environment Best Practices

In a PHP 7.3 production environment, a well-tuned OpCache configuration is crucial for performance. This article walks through the main OpCache settings and how to adjust them to maximize cache efficiency, reduce server load, and improve application response time.

Detailed explanation of core configuration parameters:

First, make sure OpCache is enabled:

- opcache.enable=1: Turns OpCache on. This must be set to 1.

Next, adjust OpCache memory allocation:

- opcache.memory_consumption=512: The amount of memory OpCache may use, in MB. 512 MB is a common value, but it should be adjusted to the application's scale and code volume. Too small a value lowers the cache hit rate; too large a value wastes memory.

Optimize string caching:

- opcache.interned_strings_buffer=64: The size of OpCache's interned string buffer, in MB. A suitably sized buffer lets identical strings be stored once and shared, which reduces duplication and improves performance.
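As a rough sketch, the two memory-related directives above might appear in php.ini as follows. The values are the ones used in this article, not recommendations; the defaults noted in the comments are those of PHP 7.x.

```ini
; Shared memory available to OpCache, in MB (PHP 7.x default: 128)
opcache.memory_consumption=512

; Memory reserved for interned strings, in MB (PHP 7.x default: 8)
opcache.interned_strings_buffer=64
```

After a restart, the memory_usage and interned_strings_usage sections returned by opcache_get_status() show how much of each pool is in use. That is one way to judge whether these values are too small or too large for a given application.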

Control the number of cached files:

- opcache.max_accelerated_files=4000: The maximum number of PHP files OpCache can cache. Adjust it to the project size: too small a value leaves some files uncached and causes frequent cache misses, while an excessively large value increases memory usage.
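One detail worth knowing when choosing this number: according to the PHP manual, OpCache rounds the configured value up to the next prime in a fixed set (223, 463, 983, 1979, 3907, 7963, 16229, and so on), so 4000 is effectively 7963. The fragment below is a sketch using this article's value; the file-count command in the comment is just one assumed way to estimate project size.

```ini
; Estimate the number of PHP files first, e.g. from the project root:
;   find . -type f -name "*.php" | wc -l
; The configured value is rounded up to the next prime in OpCache's
; internal set, so 4000 becomes 7963. PHP 7.x default: 10000.
opcache.max_accelerated_files=4000
```

Because the default is already 10000, a value of 4000 only suits smaller codebases. A project whose file count (including dependencies) exceeds the limit would need a higher setting.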

Set the file revalidation frequency:

- opcache.revalidate_freq=1000: How often, in seconds, OpCache checks cached files for modifications. A value of 1000 seconds (about 17 minutes) balances performance against how quickly code updates take effect. Checking too frequently increases CPU load.
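The revalidation interval only applies while timestamp validation is switched on. The sketch below shows the two directives together; opcache.validate_timestamps is not discussed in the text above and is included here as an assumption about how a deployment might be set up.

```ini
; Check file timestamps at all (default: 1). When set to 0, OpCache never
; re-checks files and revalidate_freq is ignored; changes are picked up
; only after opcache_reset() or a restart of the PHP process.
opcache.validate_timestamps=1

; Seconds between timestamp checks for a cached file (default: 2).
; 0 means check on every request.
opcache.revalidate_freq=1000
```

With a long interval such as 1000 seconds, a deployment may keep serving old code for up to that long unless the cache is reset as part of the release.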

Enable OpCache in CLI mode:

- opcache.enable_cli=1: Set this to 1 if you need OpCache for PHP run from the command line.

Easy configuration for rapid performance improvement:

In many cases, you only need to configure the following two items to significantly improve performance:

- opcache.enable=1: Enables OpCache.

- opcache.revalidate_freq=1000: Sets the revalidation frequency.

The other parameters should be adjusted and tested against actual conditions (server memory, code size, update frequency, and so on). Continuous monitoring and testing are key to a well-tuned configuration.
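Pulling the directives from this article together, a starting php.ini section might look like the sketch below. The zend_extension line is an assumption: it is only needed when OpCache is built as a shared extension, and the exact file name can vary by platform. All values are this article's examples and should be tuned per application.

```ini
[opcache]
; Assumption: OpCache is a shared extension and must be loaded explicitly
zend_extension=opcache

opcache.enable=1
opcache.enable_cli=1
opcache.memory_consumption=512
opcache.interned_strings_buffer=64
opcache.max_accelerated_files=4000
opcache.revalidate_freq=1000
```

Running `php -i | grep opcache` on the command line, or calling opcache_get_configuration() from a web request, shows which values are actually in effect. The CLI and the web server may read different ini files, so it is worth checking both.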

The above is the detailed content of How to efficiently configure OpCache to improve production environment performance?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

What to do if your Huawei phone has insufficient memory (Practical methods to solve the problem of insufficient memory)

Apr 29, 2024 pm 06:34 PM

What to do if your Huawei phone has insufficient memory (Practical methods to solve the problem of insufficient memory)

Apr 29, 2024 pm 06:34 PM

Insufficient memory on Huawei mobile phones has become a common problem faced by many users, with the increase in mobile applications and media files. To help users make full use of the storage space of their mobile phones, this article will introduce some practical methods to solve the problem of insufficient memory on Huawei mobile phones. 1. Clean cache: history records and invalid data to free up memory space and clear temporary files generated by applications. Find "Storage" in the settings of your Huawei phone, click "Clear Cache" and select the "Clear Cache" button to delete the application's cache files. 2. Uninstall infrequently used applications: To free up memory space, delete some infrequently used applications. Drag it to the top of the phone screen, long press the "Uninstall" icon of the application you want to delete, and then click the confirmation button to complete the uninstallation. 3.Mobile application to

Detailed steps for cleaning memory in Xiaohongshu

Apr 26, 2024 am 10:43 AM

Detailed steps for cleaning memory in Xiaohongshu

Apr 26, 2024 am 10:43 AM

1. Open Xiaohongshu, click Me in the lower right corner 2. Click the settings icon, click General 3. Click Clear Cache

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

How to fine-tune deepseek locally

Feb 19, 2025 pm 05:21 PM

Local fine-tuning of DeepSeek class models faces the challenge of insufficient computing resources and expertise. To address these challenges, the following strategies can be adopted: Model quantization: convert model parameters into low-precision integers, reducing memory footprint. Use smaller models: Select a pretrained model with smaller parameters for easier local fine-tuning. Data selection and preprocessing: Select high-quality data and perform appropriate preprocessing to avoid poor data quality affecting model effectiveness. Batch training: For large data sets, load data in batches for training to avoid memory overflow. Acceleration with GPU: Use independent graphics cards to accelerate the training process and shorten the training time.

What to do if the Edge browser takes up too much memory What to do if the Edge browser takes up too much memory

May 09, 2024 am 11:10 AM

What to do if the Edge browser takes up too much memory What to do if the Edge browser takes up too much memory

May 09, 2024 am 11:10 AM

1. First, enter the Edge browser and click the three dots in the upper right corner. 2. Then, select [Extensions] in the taskbar. 3. Next, close or uninstall the plug-ins you do not need.

For only $250, Hugging Face's technical director teaches you how to fine-tune Llama 3 step by step

May 06, 2024 pm 03:52 PM

For only $250, Hugging Face's technical director teaches you how to fine-tune Llama 3 step by step

May 06, 2024 pm 03:52 PM

The familiar open source large language models such as Llama3 launched by Meta, Mistral and Mixtral models launched by MistralAI, and Jamba launched by AI21 Lab have become competitors of OpenAI. In most cases, users need to fine-tune these open source models based on their own data to fully unleash the model's potential. It is not difficult to fine-tune a large language model (such as Mistral) compared to a small one using Q-Learning on a single GPU, but efficient fine-tuning of a large model like Llama370b or Mixtral has remained a challenge until now. Therefore, Philipp Sch, technical director of HuggingFace

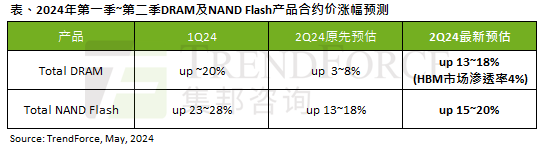

The impact of the AI wave is obvious. TrendForce has revised up its forecast for DRAM memory and NAND flash memory contract price increases this quarter.

May 07, 2024 pm 09:58 PM

The impact of the AI wave is obvious. TrendForce has revised up its forecast for DRAM memory and NAND flash memory contract price increases this quarter.

May 07, 2024 pm 09:58 PM

According to a TrendForce survey report, the AI wave has a significant impact on the DRAM memory and NAND flash memory markets. In this site’s news on May 7, TrendForce said in its latest research report today that the agency has increased the contract price increases for two types of storage products this quarter. Specifically, TrendForce originally estimated that the DRAM memory contract price in the second quarter of 2024 will increase by 3~8%, and now estimates it at 13~18%; in terms of NAND flash memory, the original estimate will increase by 13~18%, and the new estimate is 15%. ~20%, only eMMC/UFS has a lower increase of 10%. ▲Image source TrendForce TrendForce stated that the agency originally expected to continue to

Which one has better web performance, golang or java?

Apr 21, 2024 am 12:49 AM

Which one has better web performance, golang or java?

Apr 21, 2024 am 12:49 AM

Golang is better than Java in terms of web performance for the following reasons: a compiled language, directly compiled into machine code, has higher execution efficiency. Efficient garbage collection mechanism reduces the risk of memory leaks. Fast startup time without loading the runtime interpreter. Request processing performance is similar, and concurrent and asynchronous programming are supported. Lower memory usage, directly compiled into machine code without the need for additional interpreters and virtual machines.

What warnings or caveats should be included in Golang function documentation?

May 04, 2024 am 11:39 AM

What warnings or caveats should be included in Golang function documentation?

May 04, 2024 am 11:39 AM

Go function documentation contains warnings and caveats that are essential for understanding potential problems and avoiding errors. These include: Parameter validation warning: Check parameter validity. Concurrency safety considerations: Indicate the thread safety of a function. Performance considerations: Highlight the high computational cost or memory footprint of a function. Return type annotation: Describes the error type returned by the function. Dependency Note: Lists external libraries or packages required by the function. Deprecation warning: Indicates that a function is deprecated and suggests an alternative.