I made a token count check app using Streamlit in Snowflake (SiS)

Introduction

Hello, I'm a Sales Engineer at Snowflake. I'd like to share some of my experiences and experiments with you through various posts. In this article, I'll show you how to create an app using Streamlit in Snowflake to check token counts and estimate costs for Cortex LLM.

Note: This post represents my personal views and not those of Snowflake.

What is Streamlit in Snowflake (SiS)?

Streamlit is a Python library that allows you to create web UIs with simple Python code, eliminating the need for HTML/CSS/JavaScript. You can see examples in the App Gallery.

Streamlit in Snowflake enables you to develop and run Streamlit web apps directly on Snowflake. It's easy to use with just a Snowflake account and great for integrating Snowflake table data into web apps.

About Streamlit in Snowflake (Official Snowflake Documentation)

What is Snowflake Cortex?

Snowflake Cortex is a suite of generative AI features in Snowflake. Cortex LLM allows you to call large language models running on Snowflake using simple functions in SQL or Python.

Large Language Model (LLM) Functions (Snowflake Cortex) (Official Snowflake Documentation)

Feature Overview

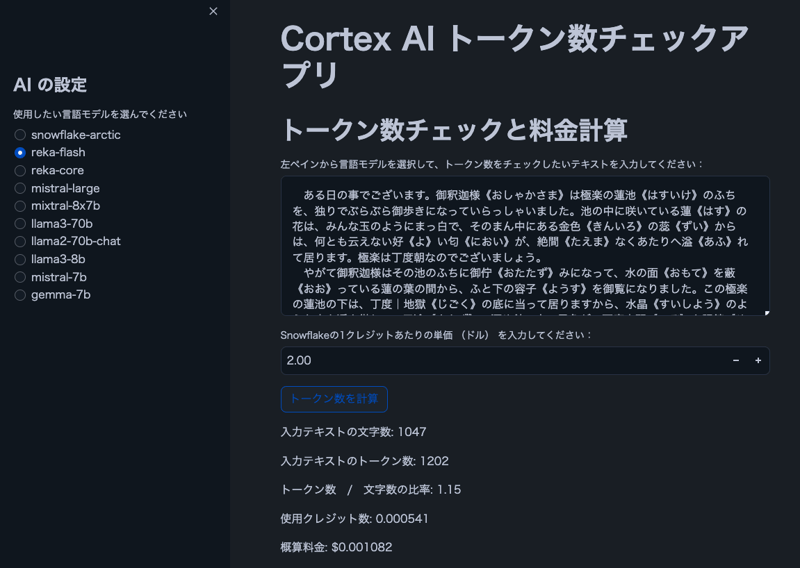

Note: The text in the image is from "The Spider's Thread" by Ryunosuke Akutagawa.

Features

- Select a Cortex LLM model

- Display character and token counts for user-input text

- Show the ratio of tokens to characters

- Calculate estimated cost based on Snowflake credit pricing

Note: Cortex LLM pricing table (PDF)
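The cost estimate is simple arithmetic: credits used are the token count divided by one million, multiplied by the model's credits per 1M tokens, and the dollar cost is credits used multiplied by the price per credit. Here is a minimal sketch of that calculation. The function name `estimate_cost` is hypothetical, 0.84 is the rate the app's source code uses for snowflake-arctic, and the $2.00 credit price is only an assumed example value, so check the pricing table for current figures.

```python
def estimate_cost(token_count, credits_per_million_tokens, price_per_credit):
    """Return (credits_used, cost_in_dollars) for a given token count."""
    credits_used = token_count / 1_000_000 * credits_per_million_tokens
    cost = credits_used * price_per_credit
    return credits_used, cost


# Example: 1,500 tokens at 0.84 credits per 1M tokens,
# with an assumed price of $2.00 per credit.
credits_used, cost = estimate_cost(1_500, 0.84, 2.0)
print(f"Credits used: {credits_used:.6f}")
print(f"Estimated cost: ${cost:.6f}")
```

For a single short prompt the cost is a fraction of a cent, which is why the app prints six decimal places.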

Prerequisites

- Snowflake account with Cortex LLM access

- snowflake-ml-python 1.1.2 or later

Note: Cortex LLM region availability (Official Snowflake Documentation)

Source Code

import streamlit as st
from snowflake.snowpark.context import get_active_session
import snowflake.snowpark.functions as F

# Get current session
session = get_active_session()

# Application title
st.title("Cortex AI Token Count Checker")

# AI settings
st.sidebar.title("AI Settings")
lang_model = st.sidebar.radio(
    "Select the language model you want to use",
    (
        "snowflake-arctic", "reka-core", "reka-flash",
        "mistral-large2", "mistral-large", "mixtral-8x7b", "mistral-7b",
        "llama3.1-405b", "llama3.1-70b", "llama3.1-8b",
        "llama3-70b", "llama3-8b", "llama2-70b-chat",
        "jamba-instruct", "gemma-7b",
    ),
)

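# Function to count tokens (using Cortex's token counting function)
def count_tokens(model, text):
    # Pass the inputs as bind parameters so that quotes or backslashes
    # in the text cannot break the SQL statement
    result = session.sql(
        "SELECT SNOWFLAKE.CORTEX.COUNT_TOKENS(?, ?) AS token_count",
        params=[model, text],
    ).collect()
    return result[0]["TOKEN_COUNT"]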

# Token count check and cost calculation
st.header("Token Count Check and Cost Calculation")
input_text = st.text_area(
    "Select a language model from the left pane and enter the text you want to check for token count:",
    height=200,
)

# Let user input the price per credit
credit_price = st.number_input(
    "Enter the price per Snowflake credit (in dollars):",
    min_value=0.0,
    value=2.0,
    step=0.01,
)

# Credits per 1M tokens for each model (as of 2024/8/30, mistral-large2 is not supported)
model_credits = {
    "snowflake-arctic": 0.84,
    "reka-core": 5.5,
    "reka-flash": 0.45,
    "mistral-large2": 1.95,
    "mistral-large": 5.1,
    "mixtral-8x7b": 0.22,
    "mistral-7b": 0.12,
    "llama3.1-405b": 3,
    "llama3.1-70b": 1.21,
    "llama3.1-8b": 0.19,
    "llama3-70b": 1.21,
    "llama3-8b": 0.19,
    "llama2-70b-chat": 0.45,
    "jamba-instruct": 0.83,
    "gemma-7b": 0.12,
}

if st.button("Calculate Token Count"):
    if input_text:
        # Calculate character count
        char_count = len(input_text)
        st.write(f"Character count of input text: {char_count}")

        if lang_model in model_credits:
            # Calculate token count
            token_count = count_tokens(lang_model, input_text)
            st.write(f"Token count of input text: {token_count}")

            # Ratio of tokens to characters
            ratio = token_count / char_count if char_count > 0 else 0
            st.write(f"Token count / Character count ratio: {ratio:.2f}")

            # Cost calculation
            credits_used = (token_count / 1000000) * model_credits[lang_model]
            cost = credits_used * credit_price
            st.write(f"Credits used: {credits_used:.6f}")
            st.write(f"Estimated cost: ${cost:.6f}")
        else:
            st.warning("The selected model is not supported by Snowflake's token counting feature.")
    else:
        st.warning("Please enter some text.")
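Text typed into the app can contain single quotes or backslashes, and those characters need care whenever user input is placed inside a SQL string literal. Bind parameters, where available, avoid the problem entirely. If a query has to be built as a plain string, the following sketch shows one way to escape the input first. Both helper names are hypothetical, and the escaping rules are an assumption based on Snowflake's single-quoted string constants (backslash is an escape character, and a single quote is escaped by doubling it).

```python
def escape_sql_literal(text: str) -> str:
    """Escape text for use inside a single-quoted SQL string literal.

    Assumption: backslash is an escape character in the literal, and a
    single quote is written as two single quotes.
    """
    # Double each backslash, then double each single quote.
    return text.replace("\\", "\\\\").replace("'", "''")


def build_count_tokens_sql(model: str, text: str) -> str:
    """Build a COUNT_TOKENS query string (hypothetical helper)."""
    return (
        "SELECT SNOWFLAKE.CORTEX.COUNT_TOKENS("
        f"'{escape_sql_literal(model)}', '{escape_sql_literal(text)}'"
        ") AS token_count"
    )


query = build_count_tokens_sql("snowflake-arctic", "It's a spider's thread")
print(query)
```

The resulting string could then be passed to `session.sql(...)`, although a bound parameter remains the safer choice when the Snowpark version in use supports it.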

Conclusion

This app makes it easier to estimate costs for LLM workloads, especially when dealing with languages like Japanese where there's often a gap between character count and token count. I hope you find it useful!
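One way to use the token-to-character ratio the app reports is to measure it on a representative sample and then extrapolate to a larger body of text. The sketch below does that. The function name `estimate_tokens`, the ratio of 1.2, and the corpus size are all made-up illustration values, and the real ratio depends on both the text and the selected model.

```python
def estimate_tokens(char_count: int, tokens_per_char: float) -> int:
    """Extrapolate a token count from a character count and a measured ratio."""
    return round(char_count * tokens_per_char)


# Hypothetical values: a ratio measured on a sample, and a larger corpus size.
sample_ratio = 1.2
corpus_chars = 250_000

estimated = estimate_tokens(corpus_chars, sample_ratio)
print(f"Estimated tokens for {corpus_chars} characters: {estimated}")
```

This is only a rough guide, since the ratio can drift between documents; counting the tokens of the actual text gives the exact figure.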

Announcements

Snowflake What's New Updates on X

I'm sharing Snowflake's What's New updates on X. Please feel free to follow if you're interested!

English Version

Snowflake What's New Bot (English Version)

https://x.com/snow_new_en

Japanese Version

Snowflake What's New Bot (Japanese Version)

https://x.com/snow_new_jp

Change History

(20240914) Initial post

Original Japanese Article

https://zenn.dev/tsubasa_tech/articles/4dd80c91508ec4
