Resolving ORA-4031 Errors During an Oracle 10g expdp Export

The database is 10.2.0.4 running on AIX. While exporting multiple schemas with expdp, the job failed with ORA-39014, ORA-39029, ORA-31671, ORA-39079, ORA-06512 and ORA-04031. The error output is as follows:

Export: Release 10.2.0.4.0 - 64bit Production on Monday, 17 February, 2014 9:46:52

Copyright (c) 2003, 2007, Oracle. All rights reserved.

;;;

Connected to: Oracle Database 10g Enterprise Edition Release 10.2.0.4.0 - 64bit Production

With the Partitioning, OLAP, Data Mining and Real Application Testing options

Starting "SYSTEM"."SYS_EXPORT_SCHEMA_01": system/******** directory=dump_RLZY dumpfile=ybcwfull_140217_0946.dmp logfile=ybcwfull_140217_0946.log schemas=ZW1001,ZW1002,ZW1003,ZW1004,ZW1005,ZW1006,ZW1101,ZW1102,ZW1103,ZW1104,ZW1105,ZW1106,ZW1201,ZW1202,ZW1203,ZW1204,ZW1205,ZW1206,ZW1301,ZW1302,ZW1303,ZW1304,ZW1305,ZW1306,ZW2001,ZW2002,ZW2003,ZW2004,ZW2005,ZW2006,ZW3001,ZW3002,ZW3003,ZW3004,ZW3005,ZW3006,ZW4001,ZW4002,ZW4003,ZW4004,ZW4005,ZW4006,ZW5001,ZW5002,ZW5003,ZW5004,ZW5005,ZW5006,ZW6001,ZW6002,ZW6003,ZW6004,ZW6005,ZW6006,ZW7001,ZW7002,ZW7003,ZW7004,ZW7005,ZW7006,ZW8001,ZW8002,ZW8003,ZW8004,ZW8005,ZW8006,ZW9001,ZW9002,ZW9003,ZW9004,ZW9005,ZW9006,ZW9999

Estimate in progress using BLOCKS method...

Processing object type SCHEMA_EXPORT/TABLE/TABLE_DATA

Total estimation using BLOCKS method: 997.3 MB

Processing object type SCHEMA_EXPORT/USER

Processing object type SCHEMA_EXPORT/SYSTEM_GRANT

Processing object type SCHEMA_EXPORT/ROLE_GRANT

Processing object type SCHEMA_EXPORT/DEFAULT_ROLE

Processing object type SCHEMA_EXPORT/PRE_SCHEMA/PROCACT_SCHEMA

Processing object type SCHEMA_EXPORT/SEQUENCE/SEQUENCE

Processing object type SCHEMA_EXPORT/TABLE/TABLE

Processing object type SCHEMA_EXPORT/TABLE/INDEX/INDEX

Processing object type SCHEMA_EXPORT/TABLE/CONSTRAINT/CONSTRAINT

Processing object type SCHEMA_EXPORT/TABLE/INDEX/STATISTICS/INDEX_STATISTICS

Processing object type SCHEMA_EXPORT/PACKAGE/PACKAGE_SPEC

Processing object type SCHEMA_EXPORT/FUNCTION/FUNCTION

Processing object type SCHEMA_EXPORT/PROCEDURE/PROCEDURE

Processing object type SCHEMA_EXPORT/PACKAGE/COMPILE_PACKAGE/PACKAGE_SPEC/ALTER_PACKAGE_SPEC

Processing object type SCHEMA_EXPORT/FUNCTION/ALTER_FUNCTION

Processing object type SCHEMA_EXPORT/PROCEDURE/ALTER_PROCEDURE

Processing object type SCHEMA_EXPORT/VIEW/VIEW

Processing object type SCHEMA_EXPORT/PACKAGE/PACKAGE_BODY

Processing object type SCHEMA_EXPORT/TABLE/CONSTRAINT/REF_CONSTRAINT

Processing object type SCHEMA_EXPORT/TABLE/TRIGGER

Processing object type SCHEMA_EXPORT/TABLE/STATISTICS/TABLE_STATISTICS

..................

. . exported "ZW9005"."GLSP_KMYS_TEMP" 0 KB 0 rows

. . exported "ZW9005"."GLSP_KMZJS_TEMP" 0 KB 0 rows

. . exported "ZW9005"."GLSP_KMZXFX_TEMP" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP1" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP2" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP3" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP4" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP5" 0 KB 0 rows

. . exported "ZW9005"."GLSP_PZYJZ_TEMP6" 0 KB 0 rows

ORA-39014: One or more workers have prematurely exited.

ORA-39029: worker 1 with process name "DW01" prematurely terminated

ORA-31671: Worker process DW01 had an unhandled exception.

ORA-39079: unable to enqueue message DG,KUPC$C_1_20140217094653,KUPC$A_1_20140217094654,MCP,50443,Y

ORA-06512: at "SYS.DBMS_SYS_ERROR", line 86

ORA-06512: at "SYS.KUPC$QUE_INT", line 924

ORA-04031: unable to allocate 2072 bytes of shared memory ("streams pool","unknown object","streams pool","kodpaih3 image")

ORA-06512: at "SYS.KUPW$WORKER", line 1397

ORA-06512: at line 2

Job "SYSTEM"."SYS_EXPORT_SCHEMA_01" stopped due to fatal error at 10:28:18

The key error in this output is ORA-04031: unable to allocate 2072 bytes of shared memory ("streams pool","unknown object","streams pool","kodpaih3 image")
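When a Data Pump log is long, the ORA-04031 line can be picked apart mechanically to see which pool ran out of memory. The following is only a minimal sketch: the function name `parse_ora_4031` and the `Ora4031` tuple are hypothetical helpers introduced for illustration, and the pattern assumes the message layout shown in the log above (a byte count followed by a list of quoted arguments, the first of which names the pool).

```python
import re
from typing import NamedTuple, Optional, Tuple


class Ora4031(NamedTuple):
    """Fields extracted from one ORA-04031 message (hypothetical helper type)."""
    bytes_requested: int
    args: Tuple[str, ...]  # quoted arguments in the order they appear


# Assumed message layout, taken from the log in this article.
_PATTERN = re.compile(
    r"ORA-04031: unable to allocate (\d+) bytes of shared memory \((.*)"
)


def parse_ora_4031(line: str) -> Optional[Ora4031]:
    """Return the byte count and quoted arguments, or None for other lines."""
    match = _PATTERN.search(line)
    if match is None:
        return None
    # Collect every fully quoted argument; a truncated trailing argument
    # (opening quote without a closing one) is simply skipped.
    args = tuple(re.findall(r'"([^"]*)"', match.group(2)))
    return Ora4031(int(match.group(1)), args)


line = (
    'ORA-04031: unable to allocate 2072 bytes of shared memory '
    '("streams pool","unknown object","streams pool","kodpaih3 image")'
)
info = parse_ora_4031(line)
print(info.bytes_requested, info.args[0])
```

For the error in this article, the first quoted argument is `streams pool`, which is what points the investigation at the streams pool rather than the shared pool.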

Taken literally, the failure occurred while allocating memory from the streams pool. There is a MOS note covering this problem:

DataPump Export (EXPDP) Fails With Error ORA-4031 ("streams pool", ...) (Doc ID 457724.1)

--------------------------------------------------------------------------------

Applies to:

Oracle Database - Enterprise Edition - Version 10.1.0.2 and later

Information in this document applies to any platform.

***Checked for relevance on 16-MAY-2012***

Symptoms

DataPump export (EXPDP) reports the following errors:

ORA-31626: job does not exist

ORA-31637: cannot create job SYS_EXPORT_FULL_01 for user SYSTEM

ORA-06512: at "SYS.DBMS_SYS_ERROR", line 95

ORA-06512: at "SYS.KUPV$FT_INT", line 600

ORA-39080: failed to create queues "KUPC$C_1_20070823095248" and "KUPC$S_1_20070823095248" for Data Pump job

ORA-06512: at "SYS.DBMS_SYS_ERROR", line 95

ORA-06512: at "SYS.KUPC$QUE_INT", line 1580

ORA-04031: unable to allocate 4194344 bytes of shared memory ("streams pool","unknown object","streams pool","fixed allocation callback

Cause

The problem seems initially caused by having set the STREAMS_POOL_SIZE instance parameter to 0.

The first argument of the ORA-4031 error message also indicates a problem with the Streams pool.

The streams pool is used exclusively by Oracle Streams, see #CNCPT1235

Also, Data Pump export and import operations initialize the Oracle Streams pool because these operations use buffered queues.

For information about the streams pool, refer to #STREP202

The size of the streams pool grows dynamically as required by Oracle Streams.

The (initial) size also depends on usage of ASMM, AMM or manual (minimum) settings.

That means that the parameter STREAMS_POOL_SIZE=0 is not the real root cause but the memory management cannot provide the automatic increase for the DataPump action at this time.

Setting STREAMS_POOL_SIZE>0 will guarantee a minimum size for the streams pool when using ASMM or AMM, hence avoiding the ORA-4031.

Solution

Set the STREAMS_POOL_SIZE instance parameter to at least 48MB to guarantee a minimum size using:

SQL> connect / as sysdba

SQL> show parameter stream

NAME                                 TYPE        VALUE

------------------------------------ ----------- -----------------------------

streams_pool_size                    big integer 0

SQL> alter system set streams_pool_size=48m scope=both;

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

When might a full table scan be faster than using an index in MySQL?

Apr 09, 2025 am 12:05 AM

When might a full table scan be faster than using an index in MySQL?

Apr 09, 2025 am 12:05 AM

Full table scanning may be faster in MySQL than using indexes. Specific cases include: 1) the data volume is small; 2) when the query returns a large amount of data; 3) when the index column is not highly selective; 4) when the complex query. By analyzing query plans, optimizing indexes, avoiding over-index and regularly maintaining tables, you can make the best choices in practical applications.

Can I install mysql on Windows 7

Apr 08, 2025 pm 03:21 PM

Can I install mysql on Windows 7

Apr 08, 2025 pm 03:21 PM

Yes, MySQL can be installed on Windows 7, and although Microsoft has stopped supporting Windows 7, MySQL is still compatible with it. However, the following points should be noted during the installation process: Download the MySQL installer for Windows. Select the appropriate version of MySQL (community or enterprise). Select the appropriate installation directory and character set during the installation process. Set the root user password and keep it properly. Connect to the database for testing. Note the compatibility and security issues on Windows 7, and it is recommended to upgrade to a supported operating system.

Explain InnoDB Full-Text Search capabilities.

Apr 02, 2025 pm 06:09 PM

Explain InnoDB Full-Text Search capabilities.

Apr 02, 2025 pm 06:09 PM

InnoDB's full-text search capabilities are very powerful, which can significantly improve database query efficiency and ability to process large amounts of text data. 1) InnoDB implements full-text search through inverted indexing, supporting basic and advanced search queries. 2) Use MATCH and AGAINST keywords to search, support Boolean mode and phrase search. 3) Optimization methods include using word segmentation technology, periodic rebuilding of indexes and adjusting cache size to improve performance and accuracy.

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL: Simple Concepts for Easy Learning

Apr 10, 2025 am 09:29 AM

MySQL is an open source relational database management system. 1) Create database and tables: Use the CREATEDATABASE and CREATETABLE commands. 2) Basic operations: INSERT, UPDATE, DELETE and SELECT. 3) Advanced operations: JOIN, subquery and transaction processing. 4) Debugging skills: Check syntax, data type and permissions. 5) Optimization suggestions: Use indexes, avoid SELECT* and use transactions.

Difference between clustered index and non-clustered index (secondary index) in InnoDB.

Apr 02, 2025 pm 06:25 PM

Difference between clustered index and non-clustered index (secondary index) in InnoDB.

Apr 02, 2025 pm 06:25 PM

The difference between clustered index and non-clustered index is: 1. Clustered index stores data rows in the index structure, which is suitable for querying by primary key and range. 2. The non-clustered index stores index key values and pointers to data rows, and is suitable for non-primary key column queries.

Can mysql and mariadb coexist

Apr 08, 2025 pm 02:27 PM

Can mysql and mariadb coexist

Apr 08, 2025 pm 02:27 PM

MySQL and MariaDB can coexist, but need to be configured with caution. The key is to allocate different port numbers and data directories to each database, and adjust parameters such as memory allocation and cache size. Connection pooling, application configuration, and version differences also need to be considered and need to be carefully tested and planned to avoid pitfalls. Running two databases simultaneously can cause performance problems in situations where resources are limited.

The relationship between mysql user and database

Apr 08, 2025 pm 07:15 PM

The relationship between mysql user and database

Apr 08, 2025 pm 07:15 PM

In MySQL database, the relationship between the user and the database is defined by permissions and tables. The user has a username and password to access the database. Permissions are granted through the GRANT command, while the table is created by the CREATE TABLE command. To establish a relationship between a user and a database, you need to create a database, create a user, and then grant permissions.

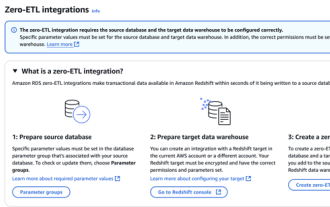

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

RDS MySQL integration with Redshift zero ETL

Apr 08, 2025 pm 07:06 PM

Data Integration Simplification: AmazonRDSMySQL and Redshift's zero ETL integration Efficient data integration is at the heart of a data-driven organization. Traditional ETL (extract, convert, load) processes are complex and time-consuming, especially when integrating databases (such as AmazonRDSMySQL) with data warehouses (such as Redshift). However, AWS provides zero ETL integration solutions that have completely changed this situation, providing a simplified, near-real-time solution for data migration from RDSMySQL to Redshift. This article will dive into RDSMySQL zero ETL integration with Redshift, explaining how it works and the advantages it brings to data engineers and developers.