Secondary Sorting in Hadoop

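These past few days I ran into a problem while using Hadoop in a project. The data set consists of key-value pairs of the form id-biz object. The ids are partitioned into a parts (for example, with a particular hash algorithm P), b reducers are used, and inside each reducer a third-party component uploads the data in batches, producing c files. There are two requirements: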

- a, b and c are all equal, so that the data of each partition is uploaded by the same reducer into the same file;

- within each reducer, the uploaded data must be ordered by id.

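My first idea was this: to make batch uploading possible in the reducer, the key passed to the reducer should be an index computed by a hash algorithm A. The value in the reducer is then an iterator over a collection of several biz objects, so they can be uploaded and committed in bulk within a single reduce call. During the batch upload, a commit is issued once every fixed maximum number of records (e.g. 1000), which keeps memory usage within a bounded range.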
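The first approach can be sketched in plain Java as follows. This is a simulation, not Hadoop code: `indexOf` is a hypothetical stand-in for the unspecified hash algorithm A (assumed here to be a simple modulo), each biz object is reduced to its id, and `upload` is a hypothetical placeholder for the third-party batch uploader. It shows one reduce call for a single index committing in batches of at most `limit` records, so memory use stays bounded.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Simulation of the first approach (not Hadoop API code).
final class IndexBatchReducer {
    private final int limit;                              // max records per commit, e.g. 1000 (must be > 0)
    final List<List<Long>> commits = new ArrayList<>();   // records every committed batch (stand-in for the uploader)

    IndexBatchReducer(int limit) {
        this.limit = limit;
    }

    // Hypothetical stand-in for hash algorithm A: maps an id to one of numPartitions indexes.
    static int indexOf(long id, int numPartitions) {
        return Math.floorMod(Long.hashCode(id), numPartitions);
    }

    // One reduce call: key = index, values = all biz objects (represented by their ids) of that index.
    void reduce(int index, Iterator<Long> values) {
        List<Long> batch = new ArrayList<>(limit);
        while (values.hasNext()) {
            batch.add(values.next());
            if (batch.size() == limit) {   // batch is full: commit it and start a new one
                upload(batch);
                batch = new ArrayList<>(limit);
            }
        }
        if (!batch.isEmpty()) {            // commit the last, partially filled batch
            upload(batch);
        }
    }

    // Hypothetical placeholder for the third-party "upload and commit" call.
    private void upload(List<Long> batch) {
        commits.add(batch);
    }
}
```

Nothing in this sketch orders the ids within an index: the values arrive in whatever order the shuffle delivers them, which is exactly the problem raised next.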

How do we guarantee ordering?

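Hadoop automatically sorts keys before the reduce phase, but what is actually needed here is to sort the values by id (after the map phase the key has already become the index). Whenever values need to be sorted, Hadoop's Secondary Sorting is the tool to use (see the Stack Overflow link).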

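The diagram there essentially explains it: move the attribute the values must be sorted by into the key, so that the key becomes a composite key made of two parts, the natural key (the index above) and the secondary key (the id above).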

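1. Partition: partitioning uses only the natural key, ensuring that all records with the same index end up in the same partition;

JobConf.setPartitionerClass(...);

2. Sort: the comparator that actually sorts the keys compares both the natural key and the secondary key, so the keys are ordered by id within each index; since ids and values correspond one-to-one, the values are ordered as well.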

JobConf.setOutputKeyComparatorClass(...);

3. Group: the grouping comparator ignores the secondary key and groups by the natural key only, so all records belonging to the same index are passed to the same reduce() call.

JobConf.setOutputValueGroupingComparator(...);
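The three steps above can be simulated in plain Java to make their interplay concrete. This is not Hadoop code: in a real job the key would implement `WritableComparable`, the partition logic would live in a `Partitioner`, and the two comparators would be `RawComparator`s registered through `JobConf`. Here each value is represented only by its id, and the partition formula mirrors the one used by Hadoop's default `HashPartitioner`, applied to the natural key alone.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Plain-Java simulation of secondary sort: partition -> sort -> group (not Hadoop API code).
final class SecondarySortDemo {

    // Composite key: natural key (index) + secondary key (id).
    static final class CompositeKey {
        final int index;
        final long id;

        CompositeKey(int index, long id) {
            this.index = index;
            this.id = id;
        }
    }

    // 1. Partition: only the natural key is used, so every record of one index reaches the same reducer.
    static int partition(CompositeKey key, int numReducers) {
        return (Integer.hashCode(key.index) & Integer.MAX_VALUE) % numReducers;
    }

    // 2. Sort: natural key first, then secondary key.
    static final Comparator<CompositeKey> SORT =
            Comparator.<CompositeKey>comparingInt(k -> k.index).thenComparingLong(k -> k.id);

    // 3. Group: natural key only, so all ids of one index share a single reduce() call.
    static final Comparator<CompositeKey> GROUP = Comparator.comparingInt(k -> k.index);

    // Returns, for one reducer, the ids seen by each reduce() call in the order they arrive.
    static List<List<Long>> shuffle(List<CompositeKey> mapOutput, int reducer, int numReducers) {
        List<CompositeKey> mine = new ArrayList<>();
        for (CompositeKey k : mapOutput) {
            if (partition(k, numReducers) == reducer) {
                mine.add(k);
            }
        }
        mine.sort(SORT);
        List<List<Long>> calls = new ArrayList<>();
        CompositeKey groupStart = null;
        for (CompositeKey k : mine) {
            if (groupStart == null || GROUP.compare(groupStart, k) != 0) {
                calls.add(new ArrayList<>());   // a new group starts a new reduce() call
                groupStart = k;
            }
            calls.get(calls.size() - 1).add(k.id);
        }
        return calls;
    }
}
```

For example, map output keys (0, 30), (0, 10), (2, 5), (0, 20), (2, 1) sent to reducer 0 of 2 produce two reduce() calls, which receive ids [10, 20, 30] and [1, 5] in that order.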

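To sum up: with this setup, the reducer's input key is the composite key object described above, containing both the index and the id, and its input value is an iterable whose elements are the original biz objects.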

Postscript: this Secondary Sorting process does solve my problem, but I later realized that my problem didn't actually need such a roundabout solution:

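- the key passed into the reducer only needs to be the id, and Hadoop sorts keys automatically;

- the partitioning strategy stays the same, but the index is computed inside the partitioner, which partitions on it;

- no separate grouping or sorting comparator needs to be specified;

- in the reducer, create a container object p whose capacity is the upper limit (e.g. 1000).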

Since all data of each partition is processed by the same reducer, and the successive reduce calls within a reducer proceed in id order, each call can simply add its data to p and commit once p is full.
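The simplified approach can be sketched in plain Java as well. Again this is a simulation rather than Hadoop code: `partition` is a hypothetical stand-in for hash algorithm P (a modulo over the id's hash), each biz object is reduced to its id, and `flush` stands in for the third-party upload and commit. One step the text leaves implicit is included here as an assumption: whatever is left in p after the last reduce call still has to be committed, which in Hadoop would happen in `cleanup()` (new `org.apache.hadoop.mapreduce` API) or `close()` (old `org.apache.hadoop.mapred` API).

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Plain-Java sketch of the simplified approach (not Hadoop API code).
final class SimpleSortedUpload {

    // Partitioner: the key is the id itself; the index is computed from the id only here.
    // Hypothetical stand-in for hash algorithm P.
    static int partition(long id, int numReducers) {
        return (Long.hashCode(id) & Integer.MAX_VALUE) % numReducers;
    }

    // Reducer whose container p survives across reduce() calls.
    static final class BufferingReducer {
        private final int limit;                              // capacity of p, e.g. 1000
        private final List<Long> p;
        final List<List<Long>> commits = new ArrayList<>();   // stand-in for the uploader

        BufferingReducer(int limit) {
            this.limit = limit;
            this.p = new ArrayList<>(limit);
        }

        // Called once per id, in ascending id order because the framework sorts the keys.
        void reduce(long id) {
            p.add(id);
            if (p.size() == limit) {
                flush();
            }
        }

        // Must run once at the end (Hadoop: cleanup()/close()) to commit the last partial batch.
        void close() {
            if (!p.isEmpty()) {
                flush();
            }
        }

        private void flush() {
            commits.add(new ArrayList<>(p));   // hypothetical upload + commit of a copy of p
            p.clear();
        }
    }

    // Simulates one reducer: keep its partition's ids, sort them as the framework would, reduce each.
    static BufferingReducer run(List<Long> ids, int reducer, int numReducers, int limit) {
        List<Long> mine = new ArrayList<>();
        for (long id : ids) {
            if (partition(id, numReducers) == reducer) {
                mine.add(id);
            }
        }
        Collections.sort(mine);
        BufferingReducer r = new BufferingReducer(limit);
        for (long id : mine) {
            r.reduce(id);
        }
        r.close();
        return r;
    }
}
```

With ids 9, 3, 7, 1, 5, 4, two reducers and a limit of 2, reducer 1 (the odd ids under this modulo) commits [1, 3], [5, 7] and finally [9] when it is closed.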

Test passed. Looking back, I really overcomplicated the problem at the start.

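Unless otherwise stated, all articles are my original work and may not be used for any commercial purpose without permission. If you repost, please keep the article intact and credit the source link: 《四火的唠叨》.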

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1671

1671

14

14

1428

1428

52

52

1329

1329

25

25

1276

1276

29

29

1256

1256

24

24

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid When using Hadoop to process big data, you often encounter some Java exception errors, which may affect the execution of tasks and cause data processing to fail. This article will introduce some common Hadoop errors and provide ways to deal with and avoid them. Java.lang.OutOfMemoryErrorOutOfMemoryError is an error caused by insufficient memory of the Java virtual machine. When Hadoop is

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

As the amount of data continues to increase, traditional data processing methods can no longer handle the challenges brought by the big data era. Hadoop is an open source distributed computing framework that solves the performance bottleneck problem caused by single-node servers in big data processing through distributed storage and processing of large amounts of data. PHP is a scripting language that is widely used in web development and has the advantages of rapid development and easy maintenance. This article will introduce how to use PHP and Hadoop for big data processing. What is HadoopHadoop is

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Java big data technology stack: Understand the application of Java in the field of big data, such as Hadoop, Spark, Kafka, etc. As the amount of data continues to increase, big data technology has become a hot topic in today's Internet era. In the field of big data, we often hear the names of Hadoop, Spark, Kafka and other technologies. These technologies play a vital role, and Java, as a widely used programming language, also plays a huge role in the field of big data. This article will focus on the application of Java in large

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

With the advent of the big data era, data processing and storage have become more and more important, and how to efficiently manage and analyze large amounts of data has become a challenge for enterprises. Hadoop and HBase, two projects of the Apache Foundation, provide a solution for big data storage and analysis. This article will introduce how to use Hadoop and HBase in Beego for big data storage and query. 1. Introduction to Hadoop and HBase Hadoop is an open source distributed storage and computing system that can

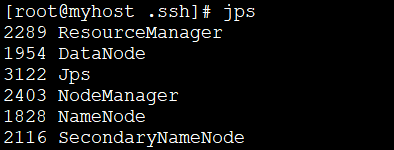

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

1: Install JDK1. Execute the following command to download the JDK1.8 installation package. wget--no-check-certificatehttps://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz2. Execute the following command to decompress the downloaded JDK1.8 installation package. tar-zxvfjdk-8u151-linux-x64.tar.gz3. Move and rename the JDK package. mvjdk1.8.0_151//usr/java84. Configure Java environment variables. echo'

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

In the current Internet era, the processing of massive data is a problem that every enterprise and institution needs to face. As a widely used programming language, PHP also needs to keep up with the times in data processing. In order to process massive data more efficiently, PHP development has introduced some big data processing tools, such as Spark and Hadoop. Spark is an open source data processing engine that can be used for distributed processing of large data sets. The biggest feature of Spark is its fast data processing speed and efficient data storage.

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

As the amount of data continues to increase, large-scale data processing has become a problem that enterprises must face and solve. Traditional relational databases can no longer meet this demand. For the storage and analysis of large-scale data, distributed computing platforms such as Hadoop, Spark, and Flink have become the best choices. In the selection process of data processing tools, PHP is becoming more and more popular among developers as a language that is easy to develop and maintain. In this article, we will explore how to leverage PHP for large-scale data processing and how

Comparison and application scenarios of Redis and Hadoop

Jun 21, 2023 am 08:28 AM

Comparison and application scenarios of Redis and Hadoop

Jun 21, 2023 am 08:28 AM

Redis and Hadoop are both commonly used distributed data storage and processing systems. However, there are obvious differences between the two in terms of design, performance, usage scenarios, etc. In this article, we will compare the differences between Redis and Hadoop in detail and explore their applicable scenarios. Redis Overview Redis is an open source memory-based data storage system that supports multiple data structures and efficient read and write operations. The main features of Redis include: Memory storage: Redis