hadoop mapreduce求平均分

hadoop mapreduce求平均分 求平均分的关键在于,利用mapreduce过程中,一个key聚合在一起,输送到一个reduce的特性。 假设三门课的成绩如下: china.txt [plain] 张三 78 李四 89 王五 96 赵六 67 english.txt [plain] 张三 80 李四 82 王五 84 赵六 86 math

hadoop mapreduce求平均分

求平均分的关键在于,利用mapreduce过程中,一个key聚合在一起,输送到一个reduce的特性。

假设三门课的成绩如下:

china.txt

[plain]

张三 78

李四 89

王五 96

赵六 67

english.txt

[plain]

张三 80

李四 82

王五 84

赵六 86

math.txt

[plain]

张三 88

李四 99

王五 66

赵六 72

mapreduce如下:

[plain]

public static class Map extends Mapper

// 实现map函数

public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

// 将输入的纯文本文件的数据转化成String

String line = value.toString();

// 将输入的数据首先按行进行分割

StringTokenizer tokenizerArticle = new StringTokenizer(line, "\n");

// 分别对每一行进行处理

while (tokenizerArticle.hasMoreElements()) {

// 每行按空格划分

StringTokenizer tokenizerLine = new StringTokenizer(tokenizerArticle.nextToken());

String strName = tokenizerLine.nextToken();// 学生姓名部分

String strScore = tokenizerLine.nextToken();// 成绩部分

Text name = new Text(strName);

int scoreInt = Integer.parseInt(strScore);

// 输出姓名和成绩

context.write(name, new IntWritable(scoreInt));

}

}

}

public static class Reduce extends Reducer

// 实现reduce函数

public void reduce(Text key, Iterable

int sum = 0;

int count = 0;

Iterator

while (iterator.hasNext()) {

sum += iterator.next().get();// 计算总分

count++;// 统计总的科目数

}

int average = (int) sum / count;// 计算平均成绩

context.write(key, new IntWritable(average));

}

}

输出如下:

[plain]

张三 82

李四 90

王五 82

赵六 75

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid

Jun 24, 2023 pm 01:06 PM

Java Errors: Hadoop Errors, How to Handle and Avoid When using Hadoop to process big data, you often encounter some Java exception errors, which may affect the execution of tasks and cause data processing to fail. This article will introduce some common Hadoop errors and provide ways to deal with and avoid them. Java.lang.OutOfMemoryErrorOutOfMemoryError is an error caused by insufficient memory of the Java virtual machine. When Hadoop is

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

Using Hadoop and HBase in Beego for big data storage and querying

Jun 22, 2023 am 10:21 AM

With the advent of the big data era, data processing and storage have become more and more important, and how to efficiently manage and analyze large amounts of data has become a challenge for enterprises. Hadoop and HBase, two projects of the Apache Foundation, provide a solution for big data storage and analysis. This article will introduce how to use Hadoop and HBase in Beego for big data storage and query. 1. Introduction to Hadoop and HBase Hadoop is an open source distributed storage and computing system that can

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

How to use PHP and Hadoop for big data processing

Jun 19, 2023 pm 02:24 PM

As the amount of data continues to increase, traditional data processing methods can no longer handle the challenges brought by the big data era. Hadoop is an open source distributed computing framework that solves the performance bottleneck problem caused by single-node servers in big data processing through distributed storage and processing of large amounts of data. PHP is a scripting language that is widely used in web development and has the advantages of rapid development and easy maintenance. This article will introduce how to use PHP and Hadoop for big data processing. What is HadoopHadoop is

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Explore the application of Java in the field of big data: understanding of Hadoop, Spark, Kafka and other technology stacks

Dec 26, 2023 pm 02:57 PM

Java big data technology stack: Understand the application of Java in the field of big data, such as Hadoop, Spark, Kafka, etc. As the amount of data continues to increase, big data technology has become a hot topic in today's Internet era. In the field of big data, we often hear the names of Hadoop, Spark, Kafka and other technologies. These technologies play a vital role, and Java, as a widely used programming language, also plays a huge role in the field of big data. This article will focus on the application of Java in large

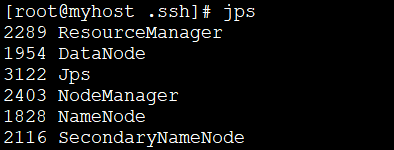

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

How to install Hadoop in linux

May 18, 2023 pm 08:19 PM

1: Install JDK1. Execute the following command to download the JDK1.8 installation package. wget--no-check-certificatehttps://repo.huaweicloud.com/java/jdk/8u151-b12/jdk-8u151-linux-x64.tar.gz2. Execute the following command to decompress the downloaded JDK1.8 installation package. tar-zxvfjdk-8u151-linux-x64.tar.gz3. Move and rename the JDK package. mvjdk1.8.0_151//usr/java84. Configure Java environment variables. echo'

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

Use PHP to achieve large-scale data processing: Hadoop, Spark, Flink, etc.

May 11, 2023 pm 04:13 PM

As the amount of data continues to increase, large-scale data processing has become a problem that enterprises must face and solve. Traditional relational databases can no longer meet this demand. For the storage and analysis of large-scale data, distributed computing platforms such as Hadoop, Spark, and Flink have become the best choices. In the selection process of data processing tools, PHP is becoming more and more popular among developers as a language that is easy to develop and maintain. In this article, we will explore how to leverage PHP for large-scale data processing and how

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

Data processing engines in PHP (Spark, Hadoop, etc.)

Jun 23, 2023 am 09:43 AM

In the current Internet era, the processing of massive data is a problem that every enterprise and institution needs to face. As a widely used programming language, PHP also needs to keep up with the times in data processing. In order to process massive data more efficiently, PHP development has introduced some big data processing tools, such as Spark and Hadoop. Spark is an open source data processing engine that can be used for distributed processing of large data sets. The biggest feature of Spark is its fast data processing speed and efficient data storage.

The practice of using cache to accelerate MapReduce calculation process in Golang.

Jun 21, 2023 pm 03:02 PM

The practice of using cache to accelerate MapReduce calculation process in Golang.

Jun 21, 2023 pm 03:02 PM

The practice of using cache to accelerate MapReduce calculation process in Golang. With the increasing scale of data and the increasing intensity of computing, traditional computing methods are no longer able to meet people's needs for rapid data processing. In this regard, MapReduce technology came into being. However, in the MapReduce calculation process, due to the operations involving a large number of key-value pairs, the calculation speed is slow, so how to optimize the calculation speed has also become an important issue. In recent years, many developers have started to develop Golang language