Heavily modified RNN challenges Transformer: RWKV launches two new architecture models

Instead of taking the usual path of Transformer, the new domestic architecture of RNN is modified RWKV, and there is new progress:

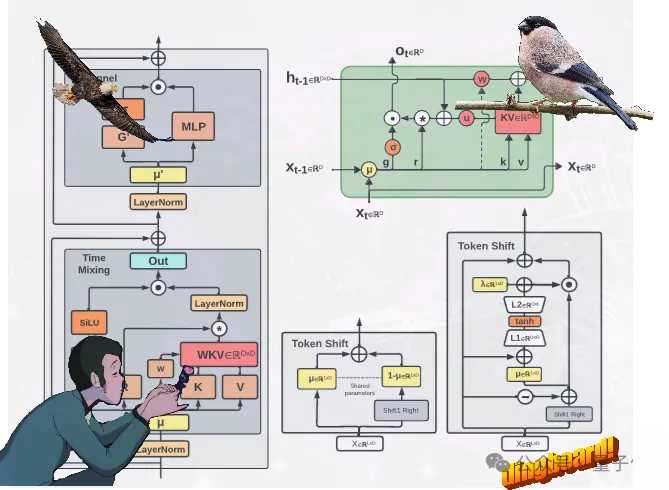

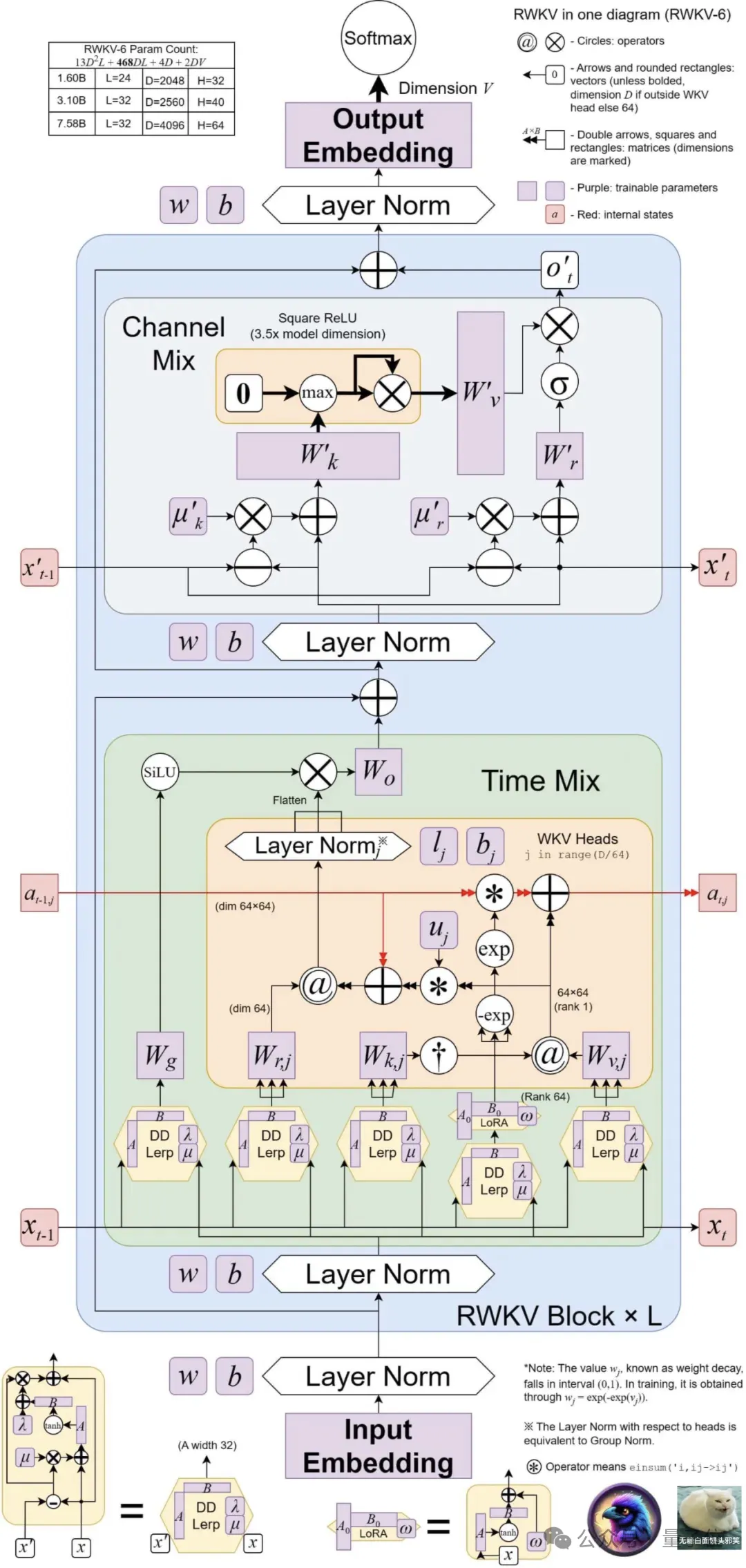

proposes two new RWKV architectures, namely Eagle (RWKV-5) and Finch (RWKV-6).

Both sequence models build on the RWKV-4 architecture and improve it.

Design advances in the new architectures include multi-headed matrix-valued states and a dynamic recurrence mechanism. These changes improve the expressiveness of the RWKV model while preserving the inference efficiency of an RNN.

At the same time, the work introduces a new multilingual corpus containing 1.12 trillion tokens.

The team also developed a fast tokenizer based on greedy matching to strengthen RWKV's multilingual capability.

Currently, 4 Eagle models and 2 Finch models have been released on Hugging Face.

New models Eagle and Finch

This RWKV update contains 6 models in total:

4 Eagle (RWKV-5) models, with 0.4B, 1.5B, 3B, and 7B parameters;

2 Finch (RWKV-6) models, with 1.6B and 3B parameters.

Eagle improves on the architecture and learned decay schedule of RWKV-4 by using multi-headed matrix-valued states (instead of vector-valued states), a reformulated receptance, and an additional gating mechanism.
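To make the idea of a matrix-valued state concrete, here is a minimal single-head sketch in plain Python. It is a simplification under stated assumptions, not the RWKV implementation: the function names and the `decay` and `bonus` parameters are illustrative, and the real layer also includes token shift, gating, normalization, and multiple heads. The point it shows is that the state is a fixed-size D x D matrix, so memory use does not grow with sequence length.

```python
# Hypothetical single-head sketch of a matrix-valued recurrent state.
# All names here are illustrative and are not taken from the RWKV code base.

def outer(k, v):
    """Outer product of k and v as a list-of-rows matrix."""
    return [[ki * vj for vj in v] for ki in k]


def eagle_head_step(state, r, k, v, decay, bonus):
    """Advance one head by one time step.

    state: D x D matrix carried across time steps
    r, k, v: receptance, key, and value vectors of length D
    decay: per-channel decay factors in (0, 1)
    bonus: per-channel weight applied to the current token only
    Returns (output vector, new state).
    """
    d = len(k)
    kv = outer(k, v)
    # Read: the current token is weighted by `bonus`, the past comes from `state`.
    out = [
        sum(r[i] * (bonus[i] * kv[i][j] + state[i][j]) for i in range(d))
        for j in range(d)
    ]
    # Write: decay each row of the old state, then add the new outer product.
    new_state = [
        [decay[i] * state[i][j] + kv[i][j] for j in range(d)]
        for i in range(d)
    ]
    return out, new_state


def run_head(rs, ks, vs, decay, bonus):
    """Run the recurrence over a whole sequence, starting from a zero state."""
    d = len(decay)
    state = [[0.0] * d for _ in range(d)]
    outputs = []
    for r, k, v in zip(rs, ks, vs):
        out, state = eagle_head_step(state, r, k, v, decay, bonus)
        outputs.append(out)
    return outputs, state
```

Calling `run_head` on a sequence of any length returns a state of the same D x D size, which is what keeps RNN-style inference cost constant per token.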

Finch further improves the expressiveness and flexibility of the architecture by introducing new data-dependent functions for the time-mixing and token-shift modules, including parameterized linear interpolation.

In addition, Finch proposes a new use of low-rank adaptation functions, which lets trainable weight matrices efficiently augment the learned decay vectors in a context-dependent manner.
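The following sketch illustrates, under simplifying assumptions, how a decay vector can be made to depend on the input through a low-rank function. The names (`lora`, `lerp`, `data_dependent_decay`) and the exact parameterization are assumptions for illustration; in particular, the interpolation weight `mix` is a fixed vector here, whereas the article describes the token shift in Finch as data-dependent too.

```python
import math


def matvec(x, m):
    """Row vector x (length n) times matrix m (n x p)."""
    return [sum(x[i] * m[i][j] for i in range(len(x))) for j in range(len(m[0]))]


def lora(x, lam, a, b):
    """Low-rank offset lam + tanh(x A) B, with A of shape D x R and B of shape R x D."""
    hidden = [math.tanh(h) for h in matvec(x, a)]
    return [l + o for l, o in zip(lam, matvec(hidden, b))]


def lerp(x_cur, x_prev, mix):
    """Token shift: per-channel interpolation between current and previous token."""
    return [c + (p - c) * m for c, p, m in zip(x_cur, x_prev, mix)]


def data_dependent_decay(x_cur, x_prev, mix, lam, a, b):
    """Per-channel decay in (0, 1) that changes with the input (assumed form)."""
    shifted = lerp(x_cur, x_prev, mix)
    d = lora(shifted, lam, a, b)
    # exp(-exp(d)) maps any real d into the open interval (0, 1).
    return [math.exp(-math.exp(di)) for di in d]
```

Because `a` and `b` have a small inner dimension R, the input-dependent part adds roughly 2 x D x R parameters instead of a full D x D matrix.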

Finally, the new RWKV architectures come with a new tokenizer, RWKV World Tokenizer, and a new dataset, RWKV World v2, both intended to improve the performance of RWKV models on multilingual and code data.

The RWKV World Tokenizer includes vocabulary from less common languages and tokenizes quickly through Trie-based greedy matching.
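Trie-based greedy matching can be sketched as follows. This is a generic longest-match tokenizer over bytes with a toy vocabulary, written to illustrate the technique; it is not the RWKV World Tokenizer itself, and the class and function names are hypothetical.

```python
class TrieNode:
    """One node of a byte-level trie."""

    def __init__(self):
        self.children = {}    # byte value -> TrieNode
        self.token_id = None  # set when a vocabulary entry ends at this node


def build_trie(vocab):
    """Build a trie from a mapping of bytes -> token id."""
    root = TrieNode()
    for token, token_id in vocab.items():
        node = root
        for byte in token:
            node = node.children.setdefault(byte, TrieNode())
        node.token_id = token_id
    return root


def greedy_tokenize(data, root):
    """At each position, emit the longest vocabulary entry that matches."""
    ids = []
    pos = 0
    while pos < len(data):
        node = root
        best_id, best_end = None, pos
        i = pos
        # Walk the trie as far as the input allows, remembering the last full match.
        while i < len(data) and data[i] in node.children:
            node = node.children[data[i]]
            i += 1
            if node.token_id is not None:
                best_id, best_end = node.token_id, i
        if best_id is None:
            raise ValueError(f"no vocabulary entry matches at byte offset {pos}")
        ids.append(best_id)
        pos = best_end
    return ids
```

With the toy vocabulary `{b"a": 0, b"b": 1, b"c": 2, b"ab": 3, b"abc": 4, b"bc": 5}`, the input `b"abcab"` is split into `abc` and `ab`. Each position is resolved with a single walk down the trie, which is what makes this approach fast.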

The RWKV World v2 dataset is a new multilingual dataset of 1.12T tokens, drawn from a variety of hand-selected, publicly available data sources.

About 70% of it is English data, 15% is multilingual data, and 15% is code data.
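As a quick sanity check on these proportions, the approximate token count per category can be computed directly. The shares are the rounded figures quoted above, so the results are estimates only.

```python
TOTAL_TOKENS = 1_120_000_000_000  # 1.12 trillion tokens, as stated in the article

# Approximate shares quoted in the article, in percent.
SHARES_PERCENT = {"English": 70, "multilingual": 15, "code": 15}


def split_tokens(total, shares_percent):
    """Return the estimated token count per category using integer arithmetic."""
    return {name: total * pct // 100 for name, pct in shares_percent.items()}


if __name__ == "__main__":
    for name, count in split_tokens(TOTAL_TOKENS, SHARES_PERCENT).items():
        print(f"{name}: about {count / 1e12:.3f}T tokens")
```

This gives roughly 0.784T English tokens and about 0.168T tokens each for multilingual and code data.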

What are the benchmark results?

Architectural innovation alone is not enough; what matters is how the models actually perform.

Let's look at how the new models score on the major benchmarks:

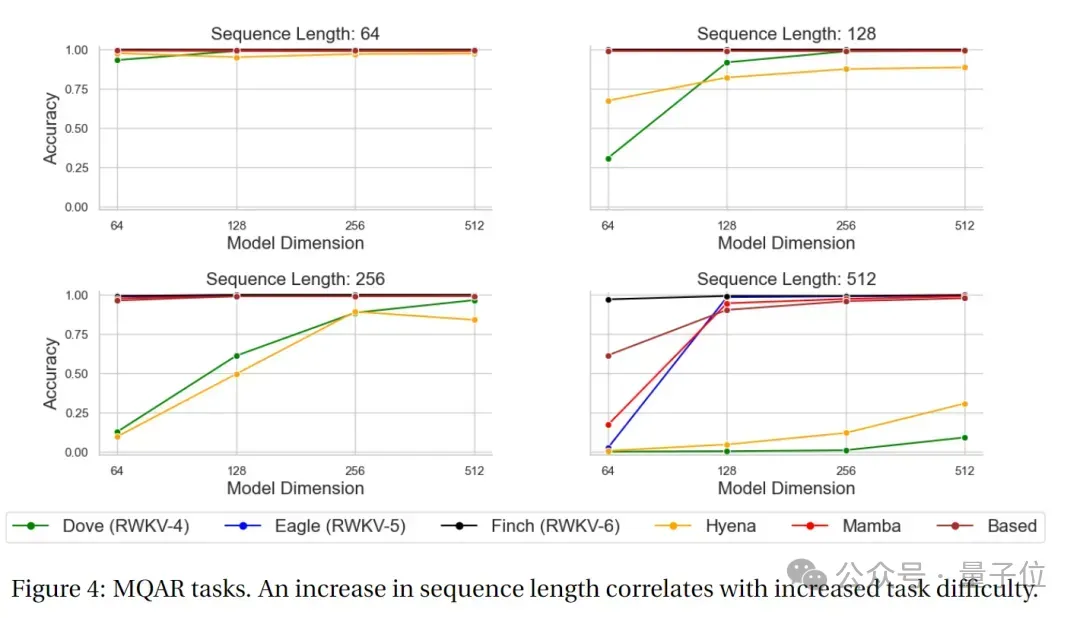

MQAR test results

MQAR (Multiple Query Associative Recall) is a task for evaluating language models, designed to test a model's associative memory under multiple queries.

In this type of task, the model must retrieve the relevant information for each of several queries.

The goal is to measure how well the model retrieves information under multiple queries, and how accurate and adaptable it is across different queries.
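A simplified version of such a task can be sketched as below: the context is a flat list of key-value pairs, and each query asks for the value stored with one key. The generator, the scoring function, and the perfect-recall `lookup_oracle` are hypothetical helpers for illustration; the actual benchmark setup (vocabulary layout, sequence lengths, how queries are interleaved) may differ.

```python
import random


def make_mqar_example(num_pairs, num_queries, vocab_size, seed=0):
    """Build one toy example: context of key-value pairs, queries, and answers."""
    rng = random.Random(seed)
    keys = rng.sample(range(vocab_size), num_pairs)  # unique keys
    values = [rng.randrange(vocab_size) for _ in keys]
    context = [tok for pair in zip(keys, values) for tok in pair]
    queries = rng.sample(keys, num_queries)  # requires num_queries <= num_pairs
    mapping = dict(zip(keys, values))
    answers = [mapping[q] for q in queries]
    return context, queries, answers


def recall_accuracy(predict, context, queries, answers):
    """Fraction of queries for which predict(context, query) returns the answer."""
    correct = sum(predict(context, q) == a for q, a in zip(queries, answers))
    return correct / len(queries)


def lookup_oracle(context, query):
    """Perfect-recall baseline: scan the key-value pairs stored in the context."""
    for key, value in zip(context[0::2], context[1::2]):
        if key == query:
            return value
    return None
```

A model under test would take the place of `lookup_oracle`; longer contexts with more pairs make the recall problem harder for architectures with a fixed-size state.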

The following figure shows the MQAR test results of RWKV-4, Eagle, Finch, and other non-Transformer architectures.

In the MQAR accuracy test, Finch's accuracy remains very stable across the tested sequence lengths, and it shows a significant advantage over RWKV-4, RWKV-5, and other non-Transformer architectures.

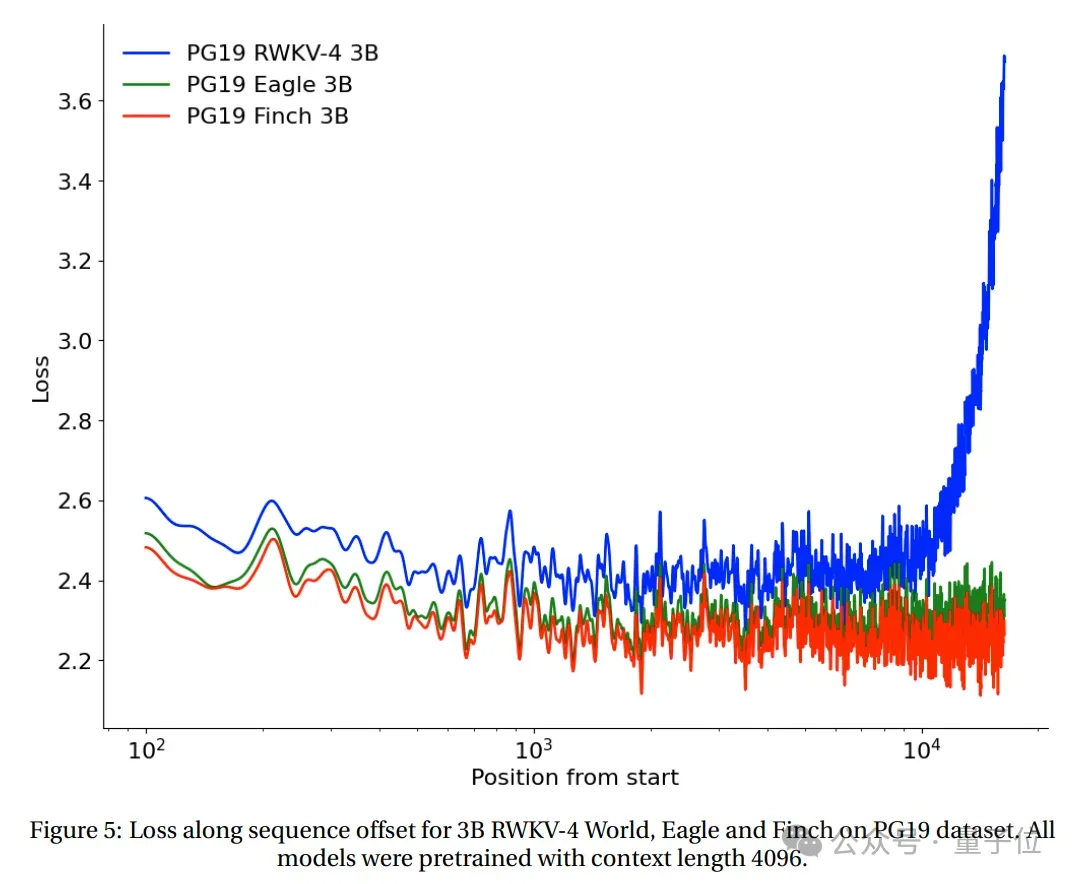

Long context experiment

Loss as a function of sequence position was measured on the PG19 test set for RWKV-4, Eagle, and Finch, starting from 2048 tokens (all models were pretrained with a context length of 4096).

The results show that Eagle improves significantly over RWKV-4 on long-sequence tasks, and that Finch, also trained at context length 4096, performs better than Eagle and adapts well to context lengths above 20,000.
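A loss-versus-position curve of this kind can be computed by averaging per-token losses in position buckets across documents. The helper below is a hypothetical sketch: it assumes the per-token losses have already been produced by a model and does not reproduce the evaluation code used for the reported results.

```python
def mean_loss_by_position(per_token_losses, bucket_size):
    """Average loss per position bucket.

    per_token_losses: list of documents, each a list of per-token losses
    bucket_size: number of consecutive positions grouped into one bucket
    Returns a dict mapping the bucket's start position to its mean loss.
    """
    sums, counts = {}, {}
    for doc in per_token_losses:
        for pos, loss in enumerate(doc):
            bucket = pos // bucket_size
            sums[bucket] = sums.get(bucket, 0.0) + loss
            counts[bucket] = counts.get(bucket, 0) + 1
    return {b * bucket_size: sums[b] / counts[b] for b in sorted(sums)}
```

If the mean loss keeps falling or stays flat for buckets beyond the training context length, the model is making use of the longer context rather than degrading on it.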

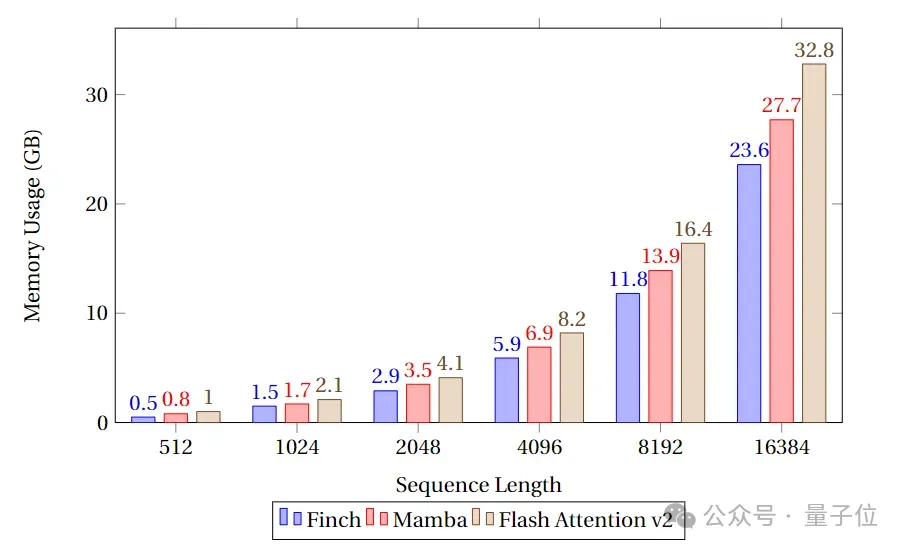

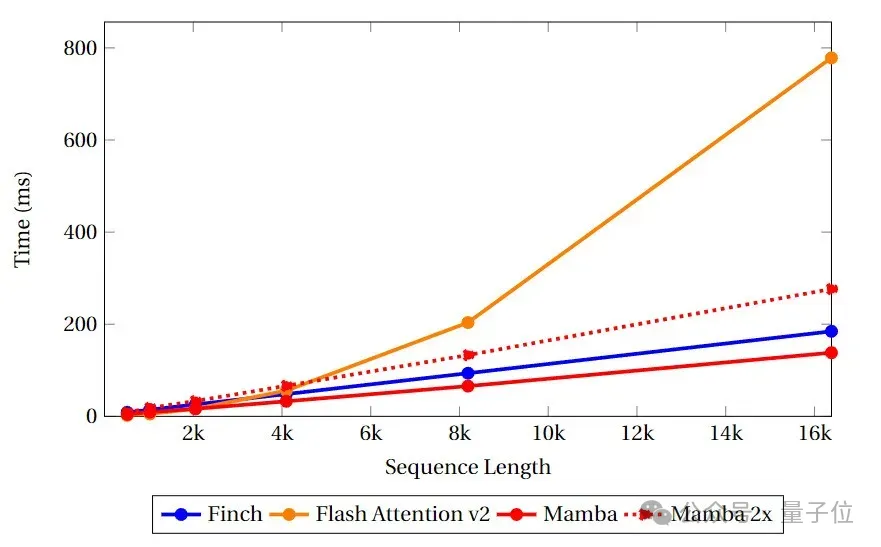

Speed and memory benchmark test

In the speed and memory benchmark, the team compared the speed and memory usage of the attention-like kernels of Finch, Mamba, and Flash Attention.

Finch consistently uses less memory than both, with 40% less than Flash Attention and 17% less than Mamba.

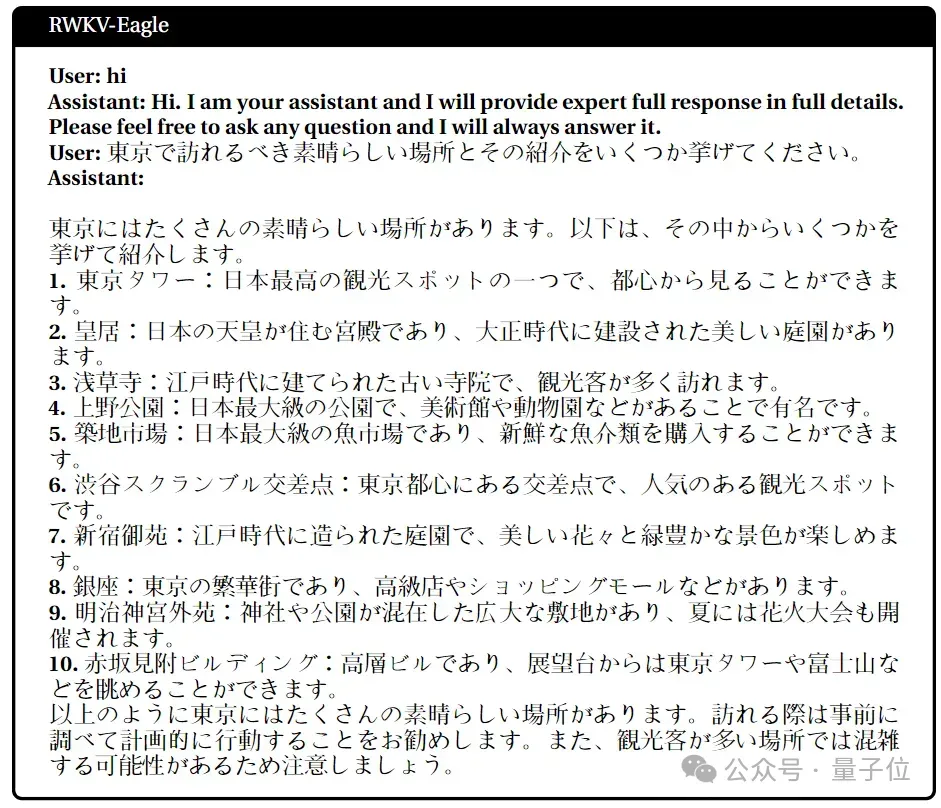

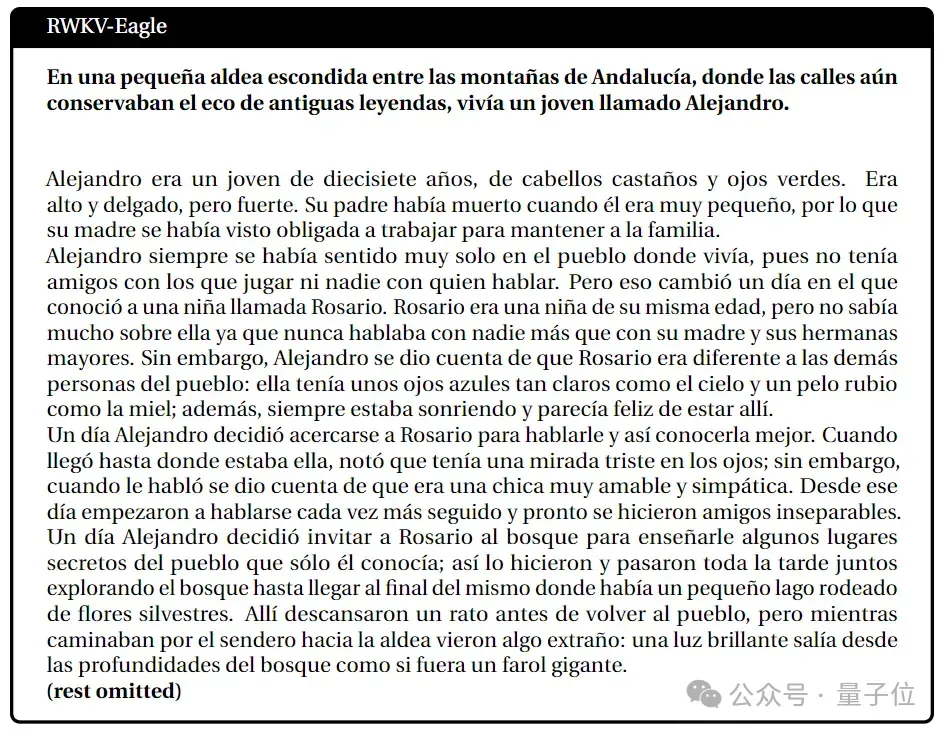

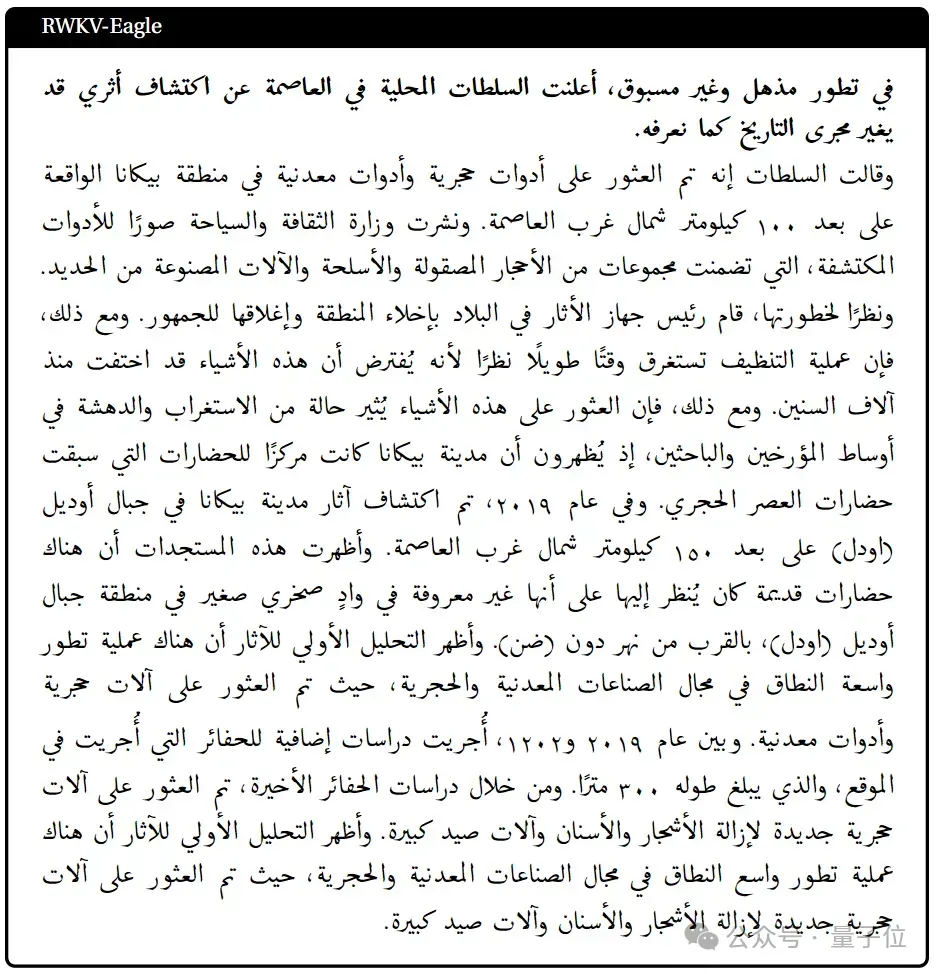

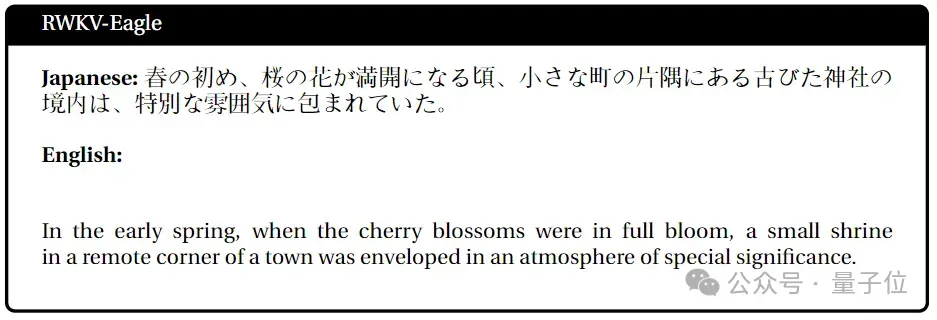

Multi-language task performance

Figure panels: Japanese, Spanish, Arabic, Japanese-English.

Next steps

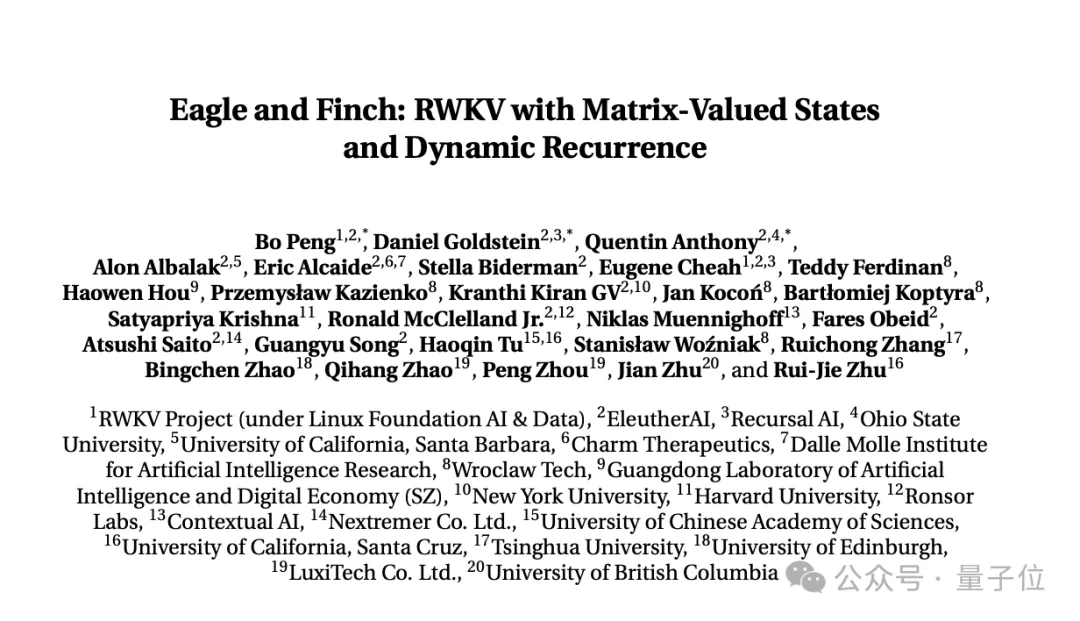

The research above comes from the latest paper released by the RWKV Foundation, "Eagle and Finch: RWKV with Matrix-Valued States and Dynamic Recurrence".

The paper was jointly written by RWKV founder Bo Peng and members of the RWKV open source community.

Bo Peng graduated from the Department of Physics at the University of Hong Kong, has 20 years of programming experience, and worked at Ortus Capital, one of the world's largest foreign exchange hedge funds, where he was responsible for high-frequency quantitative trading.

He has also published a book on deep convolutional networks, "Deep Convolutional Networks: Principles and Practice".

His main focus and interests are in software and hardware development. In previous public interviews, he has made clear that he is interested in AIGC, especially novel generation.

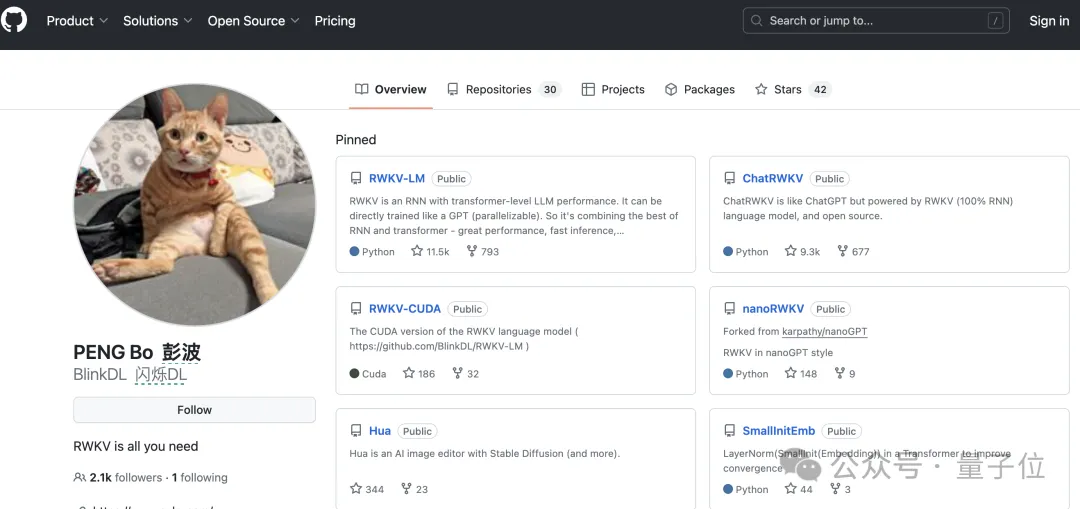

Currently, Bo Peng has 2.1k followers on GitHub.

His most prominent public role, however, is co-founder of a lighting company, Linlin Technology, which mainly makes sun lamps, ceiling lamps, portable desk lamps, and similar products.

He also appears to be a devoted cat lover: an orange cat features in his GitHub, Zhihu, and WeChat avatars, as well as on the lighting company's official homepage and Weibo account.

Qubit learned that RWKV's current multimodal work includes RWKV Music (music) and VisualRWKV (images).

Next, RWKV will focus on the following directions:

- Expand the training corpus and make it more diverse (a key factor in improving model performance);

- Train and release larger versions of Finch, such as 7B and 14B parameters, and reduce inference and training costs through MoE to scale performance further;

- Further optimize Finch's CUDA implementation (including algorithmic improvements) for higher speed and greater parallelism.

Paper link:

https://arxiv.org/pdf/2404.05892.pdf
