Object detection: an application of deep learning in computer vision

Object detection is an important task in computer vision. Its goal is to identify specific objects in images or videos and to label their locations and categories. Deep learning has achieved great success in object detection, especially methods based on convolutional neural networks (CNNs). This article introduces the concept of deep-learning-based object detection (also called target detection) and the steps needed to implement it.

1. Concept

1. Definition of target detection

Target detection means identifying specific objects in images or videos and labeling their locations and categories. Compared with image classification, which assigns a single label to a whole image, target detection must locate multiple objects and is therefore more challenging.

2. Application of target detection

Target detection is widely used in many fields, such as smart homes, intelligent transportation, security monitoring, and medical image analysis. In autonomous driving in particular, target detection is an important basis for environmental perception and decision-making.

3. Evaluation indicators of target detection

The main evaluation metrics for target detection include precision, recall, accuracy, and the F1 score. Precision is the proportion of detected objects that are real objects, that is, correct detections divided by all detections. Recall is the ratio of the number of correctly detected real objects to the number of real objects that actually exist. Accuracy, as defined here, is the ratio of the number of correctly classified objects to the total number of detected objects, which makes it coincide with precision. The F1 score is the harmonic mean of precision and recall.
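
The definitions above can be sketched in a few lines of Python. This is a minimal illustration, not a standard API: the function name `detection_metrics` and the count names `tp` (correct detections), `fp` (detections with no matching real object), and `fn` (real objects that were missed) are assumptions introduced here.

```python
def detection_metrics(tp: int, fp: int, fn: int) -> dict:
    """Compute precision, recall and F1 from detection counts.

    tp: detections that match a real object
    fp: detections that match no real object
    fn: real objects that were not detected
    """
    # Precision: correct detections / all detections.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: correct detections / all real objects.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1: harmonic mean of precision and recall.
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts: 8 correct detections, 2 false alarms, 4 missed objects.
metrics = detection_metrics(tp=8, fp=2, fn=4)
print(metrics)
```

The guards against zero denominators return 0.0 when there are no detections or no real objects; other conventions are possible.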

2. Implementation steps

The implementation of target detection mainly consists of four stages: data preparation, model construction, model training, and model testing.

1. Data preparation

Data preparation is the first step in target detection. It includes data collection, data cleaning, and data labeling. The quality of the data prepared at this stage directly affects the accuracy and robustness of the model.
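
As a rough illustration of the cleaning step, the sketch below filters a list of bounding-box annotations. The `Annotation` record, the `(x_min, y_min, x_max, y_max)` pixel box format, and the specific rules (drop exact duplicates, empty labels, degenerate boxes, boxes outside the image, and annotations for unknown images) are all assumptions chosen for the example. Real data sets may need different checks.

```python
from typing import NamedTuple


class Annotation(NamedTuple):
    image_id: str
    label: str
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


def clean_annotations(annotations, image_sizes):
    """Return annotations with duplicates and invalid entries removed.

    image_sizes maps image_id -> (width, height).
    """
    seen = set()
    cleaned = []
    for ann in annotations:
        if ann in seen or not ann.label:
            continue  # exact duplicate or missing category
        size = image_sizes.get(ann.image_id)
        if size is None:
            continue  # annotation refers to an unknown image
        width, height = size
        x0, y0, x1, y1 = ann.box
        if x1 <= x0 or y1 <= y0:
            continue  # box has zero or negative width or height
        if x0 < 0 or y0 < 0 or x1 > width or y1 > height:
            continue  # box extends beyond the image
        seen.add(ann)
        cleaned.append(ann)
    return cleaned


image_sizes = {"img1": (640, 480)}
raw = [
    Annotation("img1", "cat", (10, 20, 110, 220)),
    Annotation("img1", "cat", (10, 20, 110, 220)),    # duplicate
    Annotation("img1", "", (5, 5, 50, 50)),           # missing label
    Annotation("img1", "dog", (300, 100, 300, 200)),  # zero width
    Annotation("img1", "dog", (600, 400, 700, 470)),  # outside the image
    Annotation("img2", "dog", (0, 0, 10, 10)),        # unknown image
]
cleaned = clean_annotations(raw, image_sizes)
print(cleaned)
```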

2. Model construction

Model construction is the core step of target detection. It includes selecting an appropriate model architecture, designing the loss function, and setting hyperparameters. Commonly used deep learning models for target detection include Faster R-CNN, YOLO, and SSD.
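
To make "designing the loss function" more concrete, here is a minimal sketch of a multi-task loss of the kind used by two-stage detectors such as Faster R-CNN: a classification term plus a bounding-box regression term. It is written in plain Python for a single candidate region rather than with a deep learning framework. The function names, the convention that class 0 is background, and the weight `loc_weight` are assumptions made for this example.

```python
import math


def cross_entropy(class_probs, true_class):
    """Classification loss: negative log-probability of the true class."""
    return -math.log(max(class_probs[true_class], 1e-12))


def smooth_l1(pred, target):
    """Box regression loss: quadratic for small errors, linear for large ones."""
    total = 0.0
    for p, t in zip(pred, target):
        d = abs(p - t)
        total += 0.5 * d * d if d < 1.0 else d - 0.5
    return total


def multi_task_loss(class_probs, true_class, box_pred, box_target, loc_weight=1.0):
    """Classification loss plus weighted box loss for one candidate region."""
    cls_loss = cross_entropy(class_probs, true_class)
    # Assumption: class 0 is background, which has no box to regress.
    loc_loss = smooth_l1(box_pred, box_target) if true_class > 0 else 0.0
    return cls_loss + loc_weight * loc_loss


# Hypothetical region: predicted class probabilities and box offsets.
loss = multi_task_loss(
    class_probs=[0.1, 0.7, 0.2],
    true_class=1,
    box_pred=[0.5, 0.0, 2.0, 0.0],
    box_target=[0.0, 0.0, 0.0, 0.0],
)
print(loss)
```

The weight `loc_weight` is one of the hyperparameters mentioned above: it balances how strongly localization errors count relative to classification errors.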

3. Model training

Model training means fitting the model to annotated data to improve its accuracy and robustness. During training, it is necessary to select an appropriate optimization algorithm, set the learning rate, and perform data augmentation.
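
The interplay between the optimization algorithm and the learning rate can be shown on a toy problem. The sketch below runs stochastic gradient descent with a step-decay learning rate schedule on a one-variable linear model, which stands in for a real detector only to keep the example self-contained. The schedule (halve the rate every 20 epochs), the starting rate of 0.1, and all function names are illustrative assumptions.

```python
import random


def step_decay(base_lr, epoch, drop=0.5, every=20):
    """Learning rate schedule: multiply the rate by `drop` every `every` epochs."""
    return base_lr * (drop ** (epoch // every))


def train_sgd(samples, epochs=60, base_lr=0.1, seed=0):
    """Fit y = w * x + b by per-sample SGD on the loss 0.5 * (prediction - y)^2."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    w, b = 0.0, 0.0
    data = list(samples)
    for epoch in range(epochs):
        lr = step_decay(base_lr, epoch)
        rng.shuffle(data)  # visit the samples in a new random order each epoch
        for x, y in data:
            err = (w * x + b) - y
            # Gradient of 0.5 * err^2 is err * x for w and err for b.
            w -= lr * err * x
            b -= lr * err
    return w, b


# Noise-free toy data generated from y = 2x + 1.
samples = [(i / 10, 2.0 * (i / 10) + 1.0) for i in range(-10, 11)]
w, b = train_sgd(samples)
print(w, b)
```

In a real detector the parameters number in the millions and the gradients come from backpropagation, but the update rule and the role of the learning rate are the same.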

4. Model testing

Model testing means using test data to evaluate the model's performance and to guide further optimization. In this stage, it is necessary to calculate the model's evaluation metrics, such as precision, recall, accuracy, and the F1 score. The detection results should also be visualized for manual inspection and error correction.
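
Before these metrics can be calculated, each detection has to be judged correct or incorrect. A common way to do this, sketched below, is to match detections to annotated objects by intersection over union (IoU). The threshold of 0.5, the greedy matching in order of descending score, and the tuple formats are assumptions for this example. Benchmarks define their own exact protocols.

```python
def iou(a, b):
    """Intersection over union of two boxes (x_min, y_min, x_max, y_max)."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_matches(detections, ground_truth, iou_threshold=0.5):
    """Count true positives, false positives and false negatives for one image.

    detections:   list of (label, score, box)
    ground_truth: list of (label, box)
    """
    matched = set()  # indices of ground-truth objects already claimed
    tp = fp = 0
    # Process the most confident detections first.
    for label, _score, box in sorted(detections, key=lambda d: d[1], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, (gt_label, gt_box) in enumerate(ground_truth):
            if idx in matched or gt_label != label:
                continue
            overlap = iou(box, gt_box)
            if overlap > best_iou:
                best_iou, best_idx = overlap, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
        else:
            fp += 1
    fn = len(ground_truth) - len(matched)
    return tp, fp, fn


ground_truth = [("car", (0, 0, 10, 10)), ("person", (20, 20, 30, 40))]
detections = [
    ("car", 0.9, (1, 1, 10, 10)),         # overlaps the car well
    ("car", 0.6, (0, 0, 9, 9)),           # second hit on an already matched car
    ("person", 0.8, (50, 50, 60, 60)),    # far from the annotated person
]
tp, fp, fn = count_matches(detections, ground_truth)
print(tp, fp, fn)
```

The resulting counts are exactly what precision, recall, and the F1 score are computed from.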

3. Examples

Take Faster R-CNN as an example to introduce the implementation steps of target detection:

1. Collect a data set, for example a public labeled one such as PASCAL VOC or COCO. Clean the data set to remove duplicate, incomplete, and otherwise bad samples. If the data is not already labeled, annotate it with category, location, and other information.

2. Choose an appropriate model architecture, such as Faster R-CNN, which consists of two stages: a Region Proposal Network (RPN) and an object classification network. In the RPN stage, a convolutional neural network proposes candidate regions in the image. In the classification stage, each candidate region is classified and its bounding box is refined by regression to obtain the final detection result. A loss function, such as a multi-task loss that combines the classification and regression terms, is designed to optimize the model.

3. Train the model on the annotated data set by minimizing the loss function. During training, an optimization algorithm such as stochastic gradient descent adjusts the model parameters. Data augmentation, such as random cropping and rotation, is applied to increase data diversity and improve model robustness.

4. Evaluate the model on the test data set and use the results to optimize it further. Calculate evaluation metrics such as precision, recall, accuracy, and the F1 score. Visualize the detection results for manual inspection and error correction.
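
One detail of step 3 that is specific to detection is that augmentation must transform the bounding boxes together with the image. The sketch below shows this for random cropping, handling only the box coordinates so that it needs no image library. The function names, the `(x_min, y_min, x_max, y_max)` box format, and the rule of dropping boxes that are less than half visible after the crop are assumptions made for illustration. Boxes are assumed to have positive area.

```python
import random


def random_crop_window(width, height, crop_w, crop_h, rng):
    """Pick a crop window of size crop_w x crop_h that lies inside the image."""
    x0 = rng.randint(0, width - crop_w)
    y0 = rng.randint(0, height - crop_h)
    return (x0, y0, x0 + crop_w, y0 + crop_h)


def crop_boxes(boxes, crop, min_visible=0.5):
    """Clip boxes to the crop window and express them in crop coordinates.

    Boxes whose visible fraction after clipping is below min_visible are dropped.
    """
    cx0, cy0, cx1, cy1 = crop
    kept = []
    for x0, y0, x1, y1 in boxes:
        nx0, ny0 = max(x0, cx0), max(y0, cy0)
        nx1, ny1 = min(x1, cx1), min(y1, cy1)
        if nx1 <= nx0 or ny1 <= ny0:
            continue  # box lies entirely outside the crop
        visible = (nx1 - nx0) * (ny1 - ny0) / ((x1 - x0) * (y1 - y0))
        if visible < min_visible:
            continue  # too little of the object remains
        # Shift so that the crop's top-left corner becomes the origin.
        kept.append((nx0 - cx0, ny0 - cy0, nx1 - cx0, ny1 - cy0))
    return kept


boxes = [(10, 10, 50, 50), (90, 90, 130, 130), (200, 200, 240, 240)]
rng = random.Random(0)
window = random_crop_window(640, 480, 100, 100, rng)
print(window, crop_boxes(boxes, window))
print(crop_boxes(boxes, (0, 0, 100, 100)))
```

The same pixel window would be applied to the image itself. Rotation needs the analogous treatment, with box corners rotated and a new enclosing box computed.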

The above is the detailed content of Target detection application of deep learning in computer vision. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

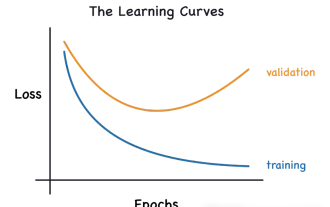

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

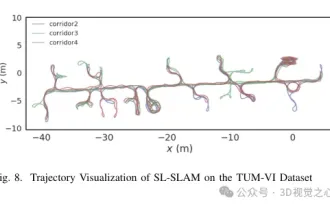

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Written previously, today we discuss how deep learning technology can improve the performance of vision-based SLAM (simultaneous localization and mapping) in complex environments. By combining deep feature extraction and depth matching methods, here we introduce a versatile hybrid visual SLAM system designed to improve adaptation in challenging scenarios such as low-light conditions, dynamic lighting, weakly textured areas, and severe jitter. sex. Our system supports multiple modes, including extended monocular, stereo, monocular-inertial, and stereo-inertial configurations. In addition, it also analyzes how to combine visual SLAM with deep learning methods to inspire other research. Through extensive experiments on public datasets and self-sampled data, we demonstrate the superiority of SL-SLAM in terms of positioning accuracy and tracking robustness.

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

AlphaFold 3 is launched, comprehensively predicting the interactions and structures of proteins and all living molecules, with far greater accuracy than ever before

Jul 16, 2024 am 12:08 AM

AlphaFold 3 is launched, comprehensively predicting the interactions and structures of proteins and all living molecules, with far greater accuracy than ever before

Jul 16, 2024 am 12:08 AM

Editor | Radish Skin Since the release of the powerful AlphaFold2 in 2021, scientists have been using protein structure prediction models to map various protein structures within cells, discover drugs, and draw a "cosmic map" of every known protein interaction. . Just now, Google DeepMind released the AlphaFold3 model, which can perform joint structure predictions for complexes including proteins, nucleic acids, small molecules, ions and modified residues. The accuracy of AlphaFold3 has been significantly improved compared to many dedicated tools in the past (protein-ligand interaction, protein-nucleic acid interaction, antibody-antigen prediction). This shows that within a single unified deep learning framework, it is possible to achieve