Meta plans to release a new open-source large model at the GPT-4 level next year. It will have several times as many parameters as Llama 2, and it will be free for commercial use.

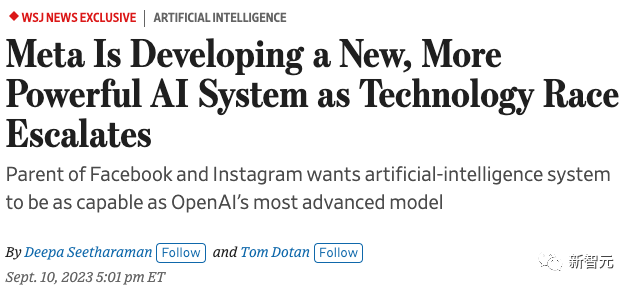

According to The Wall Street Journal, Meta is stepping up development of a new large language model. Its capabilities are intended to match GPT-4 fully, and it is expected to launch next year.

The report also emphasized that Meta’s new large language model will be several times larger than Llama 2, and that it will very likely be open source and free for commercial use.

From the "accidental" leak of LLaMA at the beginning of the year to the open-source release of Llama 2 in July, Meta has gradually found its unique position in this AI wave: the standard-bearer of the AI open-source community.

Staff keep leaving and the model falls short, so open source is the only way out

At the beginning of the year, after OpenAI set the technology industry alight with GPT-4, Google and Microsoft also launched their own AI products.

In May, U.S. regulators invited the CEOs of the companies they then considered leaders in the AI industry to a roundtable on the development of AI technology.

OpenAI, Google and Microsoft were invited, as was even the startup Anthropic, but Meta was absent. At the time, the official explanation for Meta’s absence was: "We only invited the top companies in the AI industry."

Good news passed Meta by, but trouble kept coming.

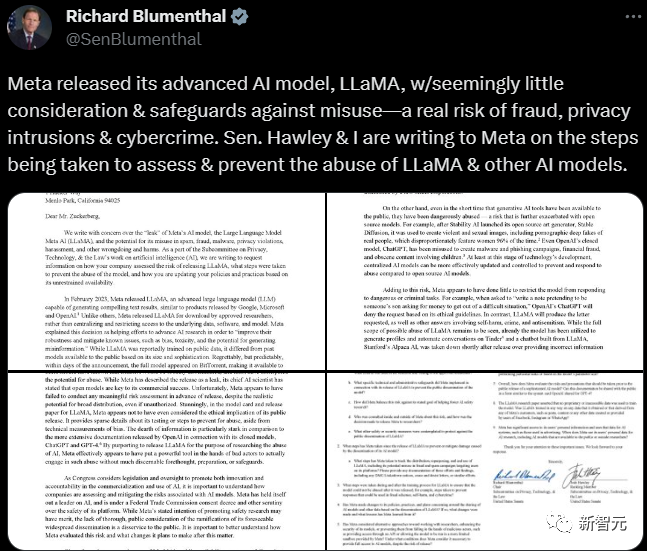

Zuckerberg received a letter of inquiry from Congress in early June, asking him to explain in detail the cause and impact of the LLaMA leak in March. The letter was sternly worded and its demands were very clear.

In the following months, even after the release of Llama 2, the AI team that Meta had spent heavily to build continued to fall apart.

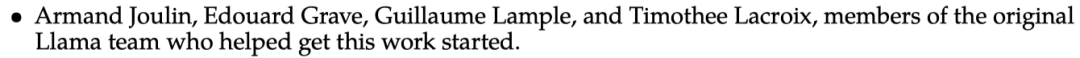

The acknowledgments of Llama 2 mention the four team members who first initiated this research. Three of them have resigned, and only Edouard Grave is still working at Meta.

Kaiming He, a leading figure in the field, will also leave Meta and return to academia.

According to a recent report by The Information, Meta’s AI team has seen constant friction over competition for internal computing power, and staff have been leaving one after another.

Against this backdrop, Zuckerberg himself surely knows that Meta’s own large language model cannot yet compete with GPT-4, the most advanced model in the industry.

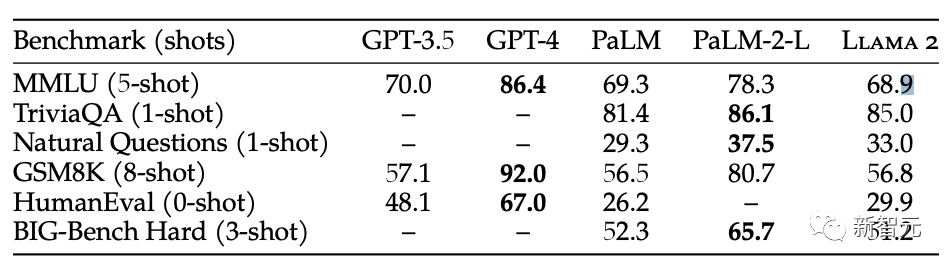

Whether judged by benchmark results or by user feedback, the gap between Llama 2 and GPT-4 is still large.

Across various benchmarks, there is a considerable gap between the open-source Llama 2 and GPT-4.

In users’ hands-on experience, GPT-4 still shows a clear lead over Llama 2.

Therefore, Zuckerberg decided to let Meta keep racing down the road of open-source models.

Perhaps Zuckerberg’s thinking is this: Meta’s models have only average capabilities and cannot compete with the closed-source leaders, so there is no point in keeping them secret. Better to simply open-source them and let the AI community keep iterating on Meta’s models, expanding the influence of its products in the industry.

Zuckerberg has publicly stated many times that the open-source community’s iterations on Meta’s models have been inspiring, and that they will help Meta’s technical team develop more competitive products in the future.

On Lex Fridman’s podcast, Zuckerberg emphasized that open source allows Meta to draw inspiration from the community, and said that Meta may launch a closed-source model in the future.

See: https://lexfridman.com/mark-zuckerberg-2/

And the facts have shown that Meta’s choice was indeed correct.

Although Meta trails Google and OpenAI in computing resources and technical strength, open-source models such as Llama 2 are second to none in their appeal to the open-source community. As Llama 2 gradually becomes the "technical base" of the AI open-source community, Meta has found its own niche in the industry.

The most obvious sign is that at the closed-door congressional meeting on AI to be held in September, Zuckerberg has finally become a guest of the regulators. He will join the CEOs of Google, OpenAI and other leading companies as an industry representative to speak on the regulation of AI.

If the new model Meta launches next year continues to make progress and reaches GPT-4-level capability, then on the one hand it will let the open-source community keep closing the gap with the closed-source giants, confirming the claim that "the gap between the open source community and the most advanced level in the industry is about one year."

On the other hand, Zuckerberg also revealed in the interview that if the capabilities of large models improve further, Meta may launch its own closed-source model. If the new model comes closer to the industry state of the art, that moment may not be far off.

Although Meta seems to be lagging behind for now in this AI wave, Zuckerberg is not content to be just a follower.

Under the guidance of Yann LeCun, Meta is also preparing to upend the entire industry.

Meta’s future

So, after this mysterious large model that is rumored to be comparable to GPT-4, what will the future of Meta’s AI look like?

Because there is no specific information yet, we can only speculate, for example by starting from the views of LeCun, Meta’s chief AI scientist.

The popular GPT approach has always been a route to artificial intelligence that LeCun criticizes and disdains.

On February 4 of this year, LeCun bluntly stated that large language models are the wrong path on the road to human-level AI.

He believes that this kind of large model, which generates text autoregressively based on probabilities, will survive for at most five years, because such systems are trained only on large amounts of text and cannot understand the real world.

These models can neither plan nor reason. They are capable only of in-context learning.

Strictly speaking, artificial intelligence built on LLMs has almost no "intelligence" at all.

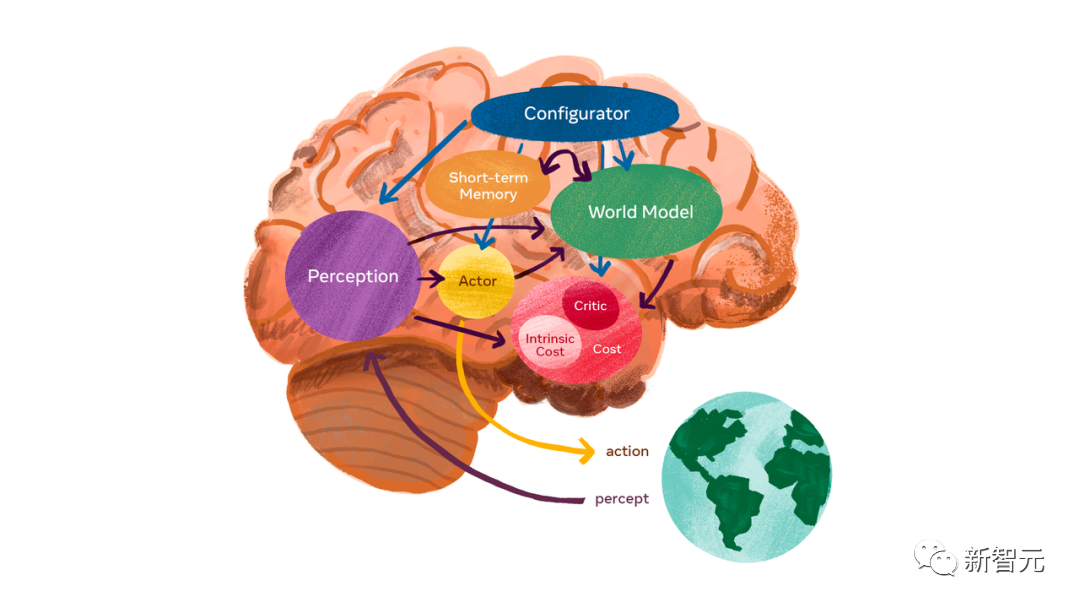

What LeCun is looking forward to is a "world model" that can lead to AGI.

A world model can learn how the world works, learn more quickly, plan how to complete complex tasks, and respond to unfamiliar new situations at any time.

This differs from LLMs, which require a great deal of pre-training. A world model can find patterns from observation, adapt to new environments, and master new skills as humans do.

Compared with OpenAI’s strategy of continually deepening its work on LLMs, Meta pursues diversified model development.

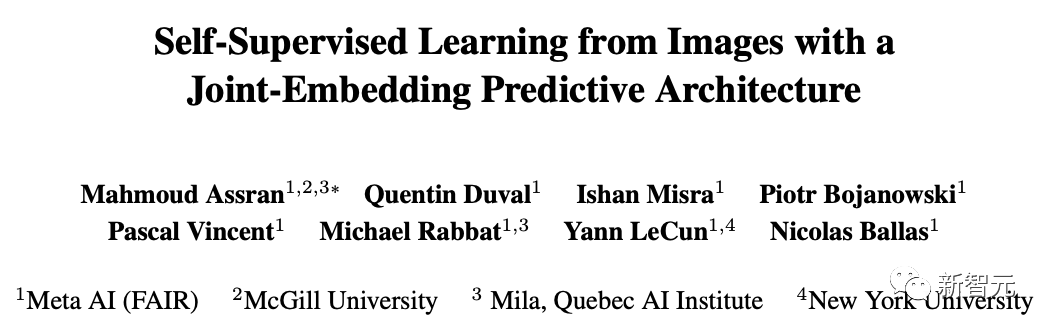

On June 14 this year, Meta released I-JEPA, a "human-like" artificial intelligence model and the first AI model based on key parts of LeCun’s world model vision.

Paper: https://arxiv.org/abs/2301.08243

I-JEPA is able to understand abstract representations in images and acquire common sense through self-supervised learning.

I-JEPA does not require additional hand-crafted knowledge as an aid.

Subsequently, Meta launched Voicebox, a new speech generation system based on flow matching, a method proposed by Meta AI.

It can synthesize speech in six languages and perform operations such as denoising, content editing, and audio style conversion.

Meta also released a general-purpose embodied AI agent.

With Language Guided Skills Coordination (LSC), the robot can move freely and pick up items in certain pre-mapped environments.

Meta also stands out in the development of multimodal models.

ImageBind is the first artificial intelligence model capable of binding information from six different modalities.

It has comprehensive machine understanding capabilities and can connect the objects in a photo to their sounds, three-dimensional shapes, temperatures and movement patterns.

RoboAgent, jointly developed by Meta AI and CMU Robotics, allows robots to acquire a variety of non-trivial skills and generalize them to hundreds of everyday scenarios.

The data required for all these scenarios is an order of magnitude smaller than in previous work in the field.

Regarding the newly revealed model, some netizens expressed the hope that Meta will continue to open-source it.

However, other netizens pointed out that Meta will not start training until early 2024.

What is encouraging, though, is that Meta has signaled that it will stick to its original strategy.
