Year-end review: The ten hottest data science and machine learning startups in 2022

As businesses deal with ever-increasing amounts of data, both generated internally and collected from external sources, finding effective ways to analyze this data and put it to work for competitive advantage is becoming both more important and more challenging.

This has also driven demand for new tools and technologies in data science and machine learning. According to a Fortune Business Insights report, the global machine learning market was worth US$15.44 billion in 2021 and will reach US$21.17 billion this year. It is expected to grow to US$209.91 billion by 2029, a compound annual growth rate of 38.8%.
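The growth rate quoted above can be sanity-checked with the standard compound annual growth rate formula. The sketch below assumes the rate is measured from this year (2022) to 2029, that is, over seven annual periods; the report itself may define the period differently.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted in the article, in billions of US dollars.
# Assumption: the growth window is 2022 -> 2029, i.e. 7 annual periods.
rate = cagr(21.17, 209.91, 2029 - 2022)
print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption the implied rate rounds to the 38.8% figure cited in the report.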

At the same time, according to a report by Allied Market Research, the global market size of data science platforms in 2020 was US$4.7 billion and is expected to reach US$79.7 billion by 2030, with a compound annual growth rate of 33.6%.

The terms “data science” and “machine learning” are sometimes confused or even used interchangeably. They are actually two different concepts, but they are related because data science practices are key to machine learning projects.

According to the Master's in Data Science website, data science is a field of study that uses scientific methods to extract meaning and insights from data, including developing data analysis strategies, preparing data for analysis, developing data visualizations, and building data models.

According to the Fortune Business Insights report, machine learning is a subset of the broader field of artificial intelligence and refers to using data analysis to teach computers how to learn (that is, to imitate the way humans learn) by means of algorithms and data-based models.

The demand for data science and machine learning tools has spawned a number of startups developing cutting-edge technologies in the field of data science or machine learning. Let’s take a look at 10 of them:

- Aporia

- Baseten

- Deci

- Galileo

- Neuton

- Pinecone

- Predibase

- Snorkel AI

- Vectice

- Verta

Aporia

Aporia develops a full-stack, highly customizable machine learning observability platform that data science and machine learning teams can use to monitor, debug, explain, and improve machine learning models and data.

Aporia was founded in 2020 and received an additional $25 million in Series A funding in March 2022, 10 months after receiving $5 million in seed funding.

Aporia will use the funding to triple the size of its workforce by early 2023, while also expanding its U.S. presence and expanding the range of use cases its technology covers.

Baseten

Baseten officially launched in April this year, offering a product that speeds up the path from machine learning model development to production-grade applications.

According to Baseten, the technology, which had been in internal beta since the summer of 2021, automates many of the skills required to bring machine learning models into production, helping data science and machine learning teams integrate machine learning into business processes without back-end, front-end or MLOps knowledge.

Baseten was founded in 2019 by CEO Tuhin Srivastava, CTO Amir Haghighat and chief scientist Philip Howes, who all previously worked at e-commerce platform developer Gumroad. Baseten raised $12 million in Series A funding in April this year, following an earlier $8 million in seed funding.

Deci

Deci has developed a deep learning development platform for building the next generation of artificial intelligence and deep learning applications. Deci’s technology is designed to help address the “AI efficiency gap,” where computer hardware is unable to keep up with the demands of machine learning models that are growing in size and complexity.

Deci’s platform takes production into account early in the development lifecycle, helping data scientists bridge this gap and reduce the time and cost of resolving issues when deploying models in production. According to Deci, the platform incorporates the company’s proprietary AutoNAC (Automated Neural Architecture Construction) technology to provide a “more efficient development paradigm,” helping AI developers use hardware-aware neural architecture search to build deep learning models that meet specific production targets.

Deci was founded in 2019 and raised US$25 million in Series B financing led by Insight Partners in July this year. Just seven months earlier, Deci had raised US$21 million in Series A financing.

Galileo

Galileo has developed a machine learning data intelligence platform for unstructured data, allowing data scientists to inspect, discover and fix critical machine learning errors throughout the machine learning lifecycle.

In early November this year, Galileo launched the Galileo Community Edition, a free version of the platform, allowing data scientists engaged in natural language processing to build models more quickly using higher-quality training data.

Galileo came out of stealth mode when it received US$5.1 million in seed funding in May this year, and on November 1 it received US$18 million in Series A financing led by Battery Ventures. Galileo's co-founders include CEO Vikram Chatterji, who was the head of cloud AI project management at Google; Atindriyo Sanyal, a former software engineer at Apple and Uber; and Yash Sheth, a former software engineer on Google's speech recognition system.

Neuton

Founded in 2021, Neuton develops an automated no-code “tinyML” platform and other tools for building tiny machine learning models that can be embedded in microcontrollers to make edge devices smart.

Neuton's technology is being used in a wide range of applications, including predictive maintenance of compressor water pumps, preventing grid overload, room occupancy detection, handwriting recognition on handheld devices, transmission failure prediction and water pollution monitoring equipment.

Pinecone

Pinecone develops vector database and search technology that supports artificial intelligence and machine learning applications. In October 2021, Pinecone launched Pinecone 2.0, taking the software from research laboratories to production applications.

Pinecone was founded in 2019 and was officially launched last year. It received US$10 million in seed round financing in January 2021 and US$28 million in Series A financing in March this year.

In October this year, Pinecone expanded its machine learning search infrastructure portfolio with the launch of a new “vector search” solution that combines semantic and keyword search capabilities.
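To give a sense of what vector search involves, here is a minimal sketch of nearest-neighbor lookup by cosine similarity in plain Python. It does not use Pinecone's API, and the document IDs and three-dimensional vectors are made up for illustration; a production system would use learned embeddings with hundreds of dimensions and an approximate index rather than a full scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Score every stored vector against the query and return the k best matches."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy index: document ID -> embedding vector.
index = {
    "doc-cats": [0.9, 0.1, 0.0],
    "doc-dogs": [0.8, 0.3, 0.1],
    "doc-tax": [0.0, 0.2, 0.9],
}

results = top_k([1.0, 0.2, 0.0], index, k=2)
for doc_id, score in results:
    print(doc_id, round(score, 3))
```

The query vector points in roughly the same direction as the two pet documents, so they rank ahead of the unrelated one even though no keywords are compared.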

Gartner named Pinecone a "Cool Vendor" in the field of artificial intelligence and machine learning data in 2021.

Predibase

In May, Predibase came out of stealth mode with a low-code machine learning platform that allows data scientists and non-experts to quickly develop machine learning models with “best-in-class” machine learning infrastructure. The software is currently in beta use at many Fortune 500 companies.

Predibase offers its technology as an alternative to traditional AutoML to develop machine learning models to solve real-world problems. The platform uses declarative machine learning, which Predibase says allows users to specify machine learning models as "configurations," or simple files, telling the system what the user wants and letting the system figure out the best way to meet that need.
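The declarative idea can be illustrated with a small sketch: the user states what goes in and what should come out, and leaves the choice of model and training procedure to the system. The field names below are illustrative assumptions and not Predibase's actual schema, and the `summarize` helper is hypothetical.

```python
# Hypothetical declarative specification: it names the inputs and the target,
# but says nothing about architectures, optimizers, or training loops.
config = {
    "input_features": [{"name": "review_text", "type": "text"}],
    "output_features": [{"name": "sentiment", "type": "category"}],
}


def summarize(spec):
    """Turn a declarative spec into a one-line description of the task."""
    inputs = ", ".join(f"{f['name']} ({f['type']})" for f in spec["input_features"])
    outputs = ", ".join(f"{f['name']} ({f['type']})" for f in spec["output_features"])
    return f"predict {outputs} from {inputs}"


print(summarize(config))
```

A real platform would read a specification like this and select preprocessing, a model architecture, and training settings on the user's behalf.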

Predibase was co-founded by CEO Piero Molino, Chief Technology Officer Travis Addair, Chief Product Officer Devvret Rishi, and Stanford University Associate Professor Chris Re. Both Molino and Addair previously worked at Uber, where the two developed the Ludwig open source framework for deep learning models and the Horovod open source framework for scaling and distributing deep learning model training across massive data sets. (Predibase is built on Ludwig and Horovod.)

In May of this year, Predibase received US$16.5 million in seed and series A financing led by Greylock.

Snorkel AI

Snorkel was founded in 2019 and originated in the Stanford University Artificial Intelligence Laboratory, where the company’s five founders worked on methods to address the lack of labeled training data for machine learning development.

Snorkel developed Snorkel Flow, a data-centric system that became fully commercially available in March this year. It accelerates the development of artificial intelligence and machine learning through programmatic labeling, a key step in data preparation and in machine learning model development and training.
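Programmatic labeling means assigning training labels with code rather than by hand. The sketch below shows the general idea with three hypothetical rule-based labeling functions combined by a simple majority vote. It does not use Snorkel's API, and majority voting is a stand-in chosen for brevity, not a description of how Snorkel Flow combines its labeling signals.

```python
from collections import Counter

ABSTAIN = None  # a labeling function returns this when its rule does not apply


# Hypothetical labeling functions for sorting customer messages.
def lf_mentions_refund(text):
    return "complaint" if "refund" in text.lower() else ABSTAIN


def lf_mentions_thanks(text):
    return "praise" if "thank" in text.lower() else ABSTAIN


def lf_mentions_broken(text):
    return "complaint" if "broken" in text.lower() else ABSTAIN


LABELING_FUNCTIONS = [lf_mentions_refund, lf_mentions_thanks, lf_mentions_broken]


def weak_label(text):
    """Apply every labeling function and return the majority label, or ABSTAIN if none fire."""
    votes = [lf(text) for lf in LABELING_FUNCTIONS]
    votes = [vote for vote in votes if vote is not ABSTAIN]
    if not votes:
        return ABSTAIN
    # On a tie, Counter returns the label that was voted for first.
    return Counter(votes).most_common(1)[0][0]


for message in ["The unit arrived broken, I want a refund", "Thank you, works great"]:
    print(message, "->", weak_label(message))
```

Each rule is noisy on its own, but a handful of them can label a large unlabeled data set far faster than manual annotation.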

Snorkel's valuation reached $1 billion in August 2021, when the startup secured $85 million in Series C funding, using the funds to grow its engineering and sales teams and accelerate its platform development.

Vectice

Vectice develops an automated data science knowledge capture and sharing solution. Vectice's technology automatically captures the assets data science teams create for projects, including datasets, code, models, notebooks, runs, and illustrations, and generates documentation throughout the project lifecycle, from business requirements to production deployment.

Vectice’s software is said to be designed to help enterprises manage transparency, governance and alignment with AI and machine learning projects and deliver consistent project results.

Vectice, founded in 2020 by CEO Cyril Brignone and CTO Gregory Haardt, raised $12.6 million in Series A funding in January this year, bringing total funding to $15.6 million.

Verta

Verta develops artificial intelligence and machine learning model management and operations software that data science and machine learning teams use to deploy, operate, manage, and monitor inherently complex models throughout the entire model lifecycle.

In August, Verta enhanced the enterprise capabilities of its MLOps platform, including adding a native integration ecosystem, additional capabilities around enterprise security, privacy and access control, and model risk management.

Verta was founded in 2018 and officially released in 2020. This year, it was named a "Cool Vendor" in the core AI technology field by Gartner.

The above is the detailed content of Year-end review: The ten hottest data science and machine learning startups in 2022. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1665

1665

14

14

1424

1424

52

52

1322

1322

25

25

1270

1270

29

29

1250

1250

24

24

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

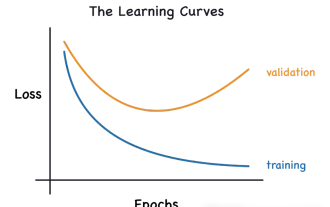

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

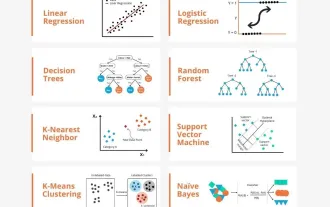

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made