Has the language model learned to use search engines on its own? Meta AI proposes Toolformer, a self-supervised method for learning API calls


In natural language processing tasks, large language models have achieved impressive results in zero-shot and few-shot learning. However, all of these models have inherent limitations that can often be addressed only partially by further scaling. These limitations include the inability to access up-to-date information, a tendency to hallucinate facts, difficulty understanding low-resource languages, and a lack of the mathematical skills needed for precise calculations.

A simple way to solve these problems is to equip the model with external tools, such as a search engine, calculator, or calendar. However, existing methods often rely on extensive manual annotations or limit the use of tools to specific task settings, making the use of language models combined with external tools difficult to generalize.

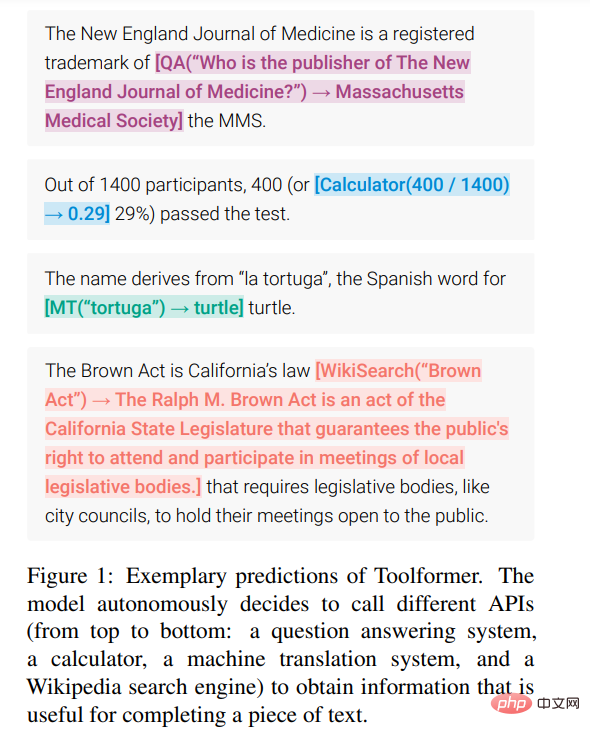

In order to break this bottleneck, Meta AI recently proposed a new method called Toolformer, which allows the language model to learn to "use" various external tools.

Paper address: https://arxiv.org/pdf/2302.04761v1.pdf

Toolformer quickly attracted a great deal of attention. Some readers felt that the paper solves many problems of current large language models, praising it as "the most important paper in recent weeks".

Others pointed out that Toolformer uses self-supervised learning to let large language models learn to use APIs and tools, an approach that is very flexible and efficient.

Some even think that Toolformer brings us one step closer to artificial general intelligence (AGI).

Toolformer gets such a high rating because it meets the following practical needs:

- Large language models should learn the use of tools in a self-supervised manner without the need for extensive human annotation. This is critical because the cost of human annotation is high, but more importantly, what humans think is useful may be different from what the model thinks is useful.

- Language models should be able to use tools in a general way that is not tied to a specific task.

This clearly breaks the bottleneck mentioned above. Let’s take a closer look at Toolformer’s methods and experimental results.

Method

Toolformer builds on the idea of using large language models with in-context learning (ICL) to generate datasets from scratch (Schick and Schütze, 2021b; Honovich et al., 2022; Wang et al., 2022): given just a handful of human-written examples of how an API can be used, the LM annotates a huge language modeling dataset with potential API calls; a self-supervised loss then determines which of these API calls actually help the model predict future tokens; finally, the LM is fine-tuned on the API calls that it finds useful itself.

Since Toolformer is agnostic to the dataset used, it can be applied to the very same dataset that the model was pre-trained on, which ensures that the model does not lose any of its generality or language modeling ability.

Specifically, the goal of this research is to equip the language model M with the ability to use various tools through API calls. This requires that the input and output of each API can be characterized as a sequence of text. This allows API calls to be seamlessly inserted into any given text, with special tokens used to mark the beginning and end of each such call.
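To make the text representation concrete, here is a minimal sketch of how an API call could be serialized and spliced into a sentence. It is an illustration under stated assumptions, not the paper's implementation: the marker strings `<API>`, `</API>` and `->`, the `ApiCall` class, and the helpers `linearize` and `insert_call` are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed marker strings; the article only says that special tokens
# mark the beginning and end of each call.
API_START = "<API>"
API_END = "</API>"
RESULT_SEP = "->"


@dataclass(frozen=True)
class ApiCall:
    name: str        # name of the API, e.g. "Calculator"
    input_text: str  # input passed to the API, as plain text


def linearize(call: ApiCall, result: Optional[str] = None) -> str:
    """Serialize an API call as text, with or without its result."""
    body = f"{call.name}({call.input_text})"
    if result is not None:
        body = f"{body} {RESULT_SEP} {result}"
    return f"{API_START} {body} {API_END}"


def insert_call(text: str, position: int, call: ApiCall,
                result: Optional[str] = None) -> str:
    """Insert a linearized call into `text` before character offset `position`."""
    return f"{text[:position]}{linearize(call, result)} {text[position:]}"


call = ApiCall("Calculator", "400 / 1400")
sentence = "Out of 1400 participants, 400 passed the test."
annotated = insert_call(sentence, 0, call, "0.29")
```

Because both the call and its result are ordinary text, the annotated sentence can be fed to the language model like any other training example.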

The study represents each API call as a tuple

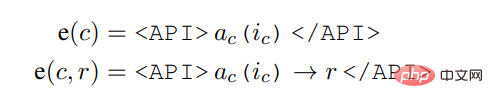

, where a_c is the name of the API and i_c is the corresponding input. Given an API call c with corresponding result r, this study represents the linearized sequence of API calls excluding and including its result as:

Among them,

Given data set

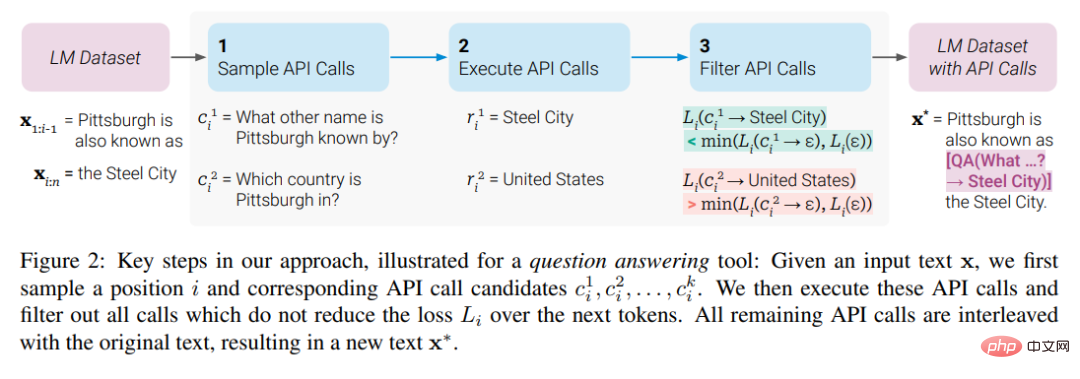

, the study first transformed this data set into a data set C* with added API calls. This is done in three steps, as shown in Figure 2 below: First, the study leverages M's in-context learning capabilities to sample a large number of potential API calls, then executes these API calls, and then checks whether the obtained responses help predictions Future token to be used as filtering criteria. After filtering, the study merges API calls to different tools, ultimately generating dataset C*, and fine-tunes M itself on this dataset.
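The filtering criterion can be sketched as follows. This is a simplified illustration rather than the paper's code: the function names `weighted_loss` and `keep_call`, the weights, the threshold, and the toy probabilities are all assumptions, and in practice the per-token log-probabilities would come from M. The idea is to compare the loss on the following tokens when the call and its result are given as a prefix against the loss without them, and to keep the call only if the result lowers the loss by at least some margin.

```python
import math
from typing import Sequence


def weighted_loss(token_logprobs: Sequence[float],
                  weights: Sequence[float]) -> float:
    """Weighted negative log-likelihood of the tokens after a candidate position."""
    if len(token_logprobs) != len(weights):
        raise ValueError("need one weight per token")
    return -sum(w * lp for w, lp in zip(weights, token_logprobs))


def keep_call(loss_with_result: float,
              loss_without_call: float,
              loss_call_without_result: float,
              threshold: float) -> bool:
    """Keep a call only if seeing its result lowers the loss by at least
    `threshold` relative to the better of the two baselines."""
    baseline = min(loss_without_call, loss_call_without_result)
    return baseline - loss_with_result >= threshold


# Toy probabilities standing in for the model's predictions (hypothetical).
weights = [0.5, 0.3, 0.2]  # nearer tokens count more in this sketch
with_result = weighted_loss([math.log(0.9), math.log(0.8), math.log(0.7)], weights)
without_call = weighted_loss([math.log(0.2), math.log(0.6), math.log(0.7)], weights)
call_only = weighted_loss([math.log(0.25), math.log(0.6), math.log(0.7)], weights)

useful = keep_call(with_result, without_call, call_only, threshold=0.5)
```

Taking the better of the two baselines means a call is kept only when its result, and not merely the presence of the call text, makes the following tokens easier to predict.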

Experiments and Results

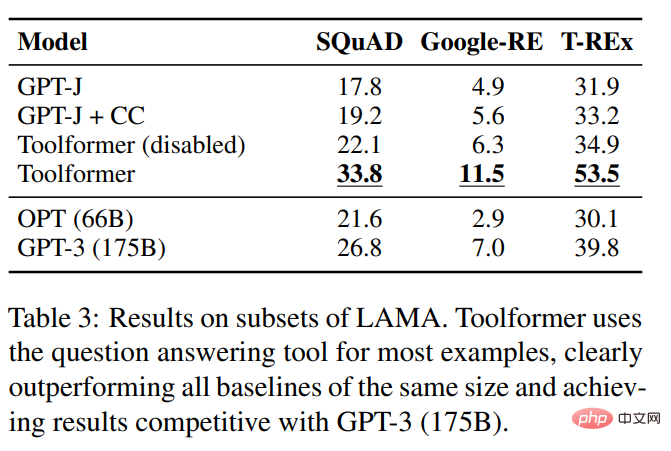

Experiments across a variety of downstream tasks show that Toolformer, which is based on the pre-trained GPT-J model with 6.7B parameters and has learned to use various APIs and tools, significantly outperforms the much larger GPT-3 model and several other baselines on a range of tasks.

This study evaluated several models on the SQuAD, GoogleRE and T-REx subsets of the LAMA benchmark. The experimental results are shown in Table 3 below:

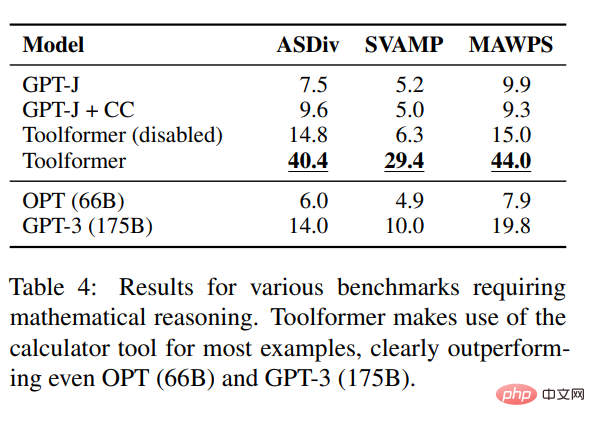

To test the mathematical reasoning capabilities of Toolformer, the study conducted experiments on the ASDiv, SVAMP, and MAWPS benchmarks. The results show that Toolformer uses the calculator tool in most cases and performs significantly better than OPT (66B) and GPT-3 (175B).

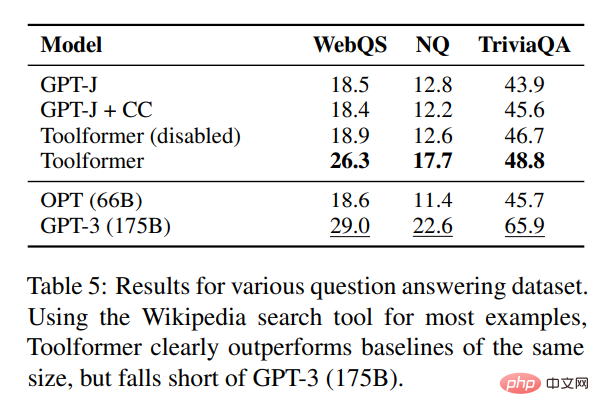

For question answering, the study conducted experiments on three datasets: Web Questions, Natural Questions, and TriviaQA. Toolformer significantly outperforms baseline models of the same size, but falls short of GPT-3 (175B).

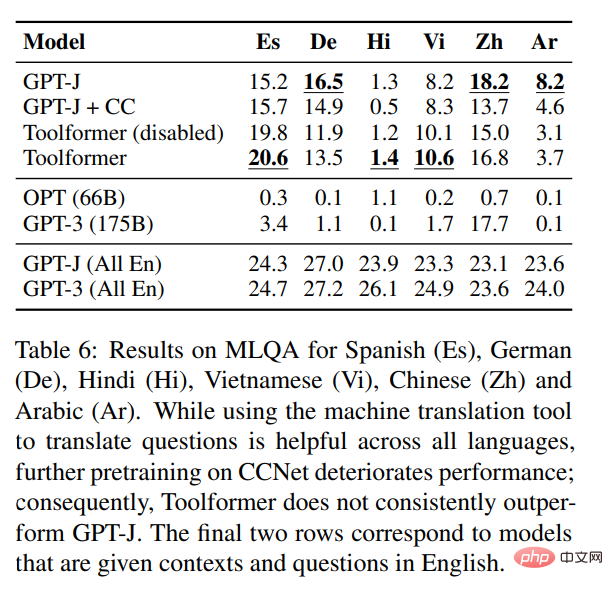

For cross-lingual tasks, the study compared Toolformer with all baseline models on MLQA. The results are shown in Table 6:

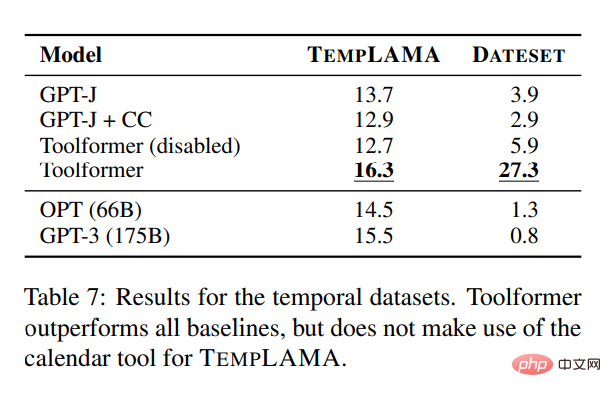

To study the usefulness of the calendar API, the study evaluated several models on TEMPLAMA and on a new dataset called DATESET. Toolformer outperforms all baselines, but on TEMPLAMA it does so without using the calendar tool.

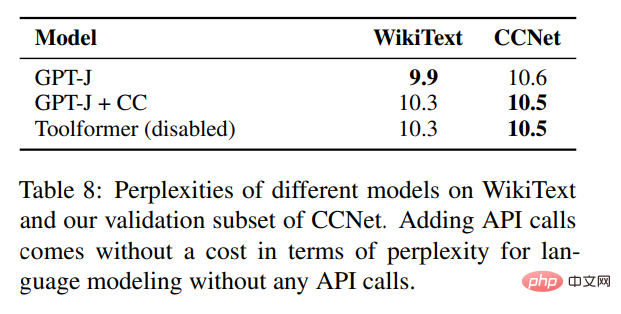

In addition to validating performance improvements on various downstream tasks, the study also wants to ensure that Toolformer's language modeling performance is not degraded by fine-tuning with API calls. To this end, the study evaluates the models on two language modeling datasets; their perplexities are shown in Table 8 below.

When the model is used for language modeling without making any API calls, having added API calls during fine-tuning comes at no cost in perplexity.

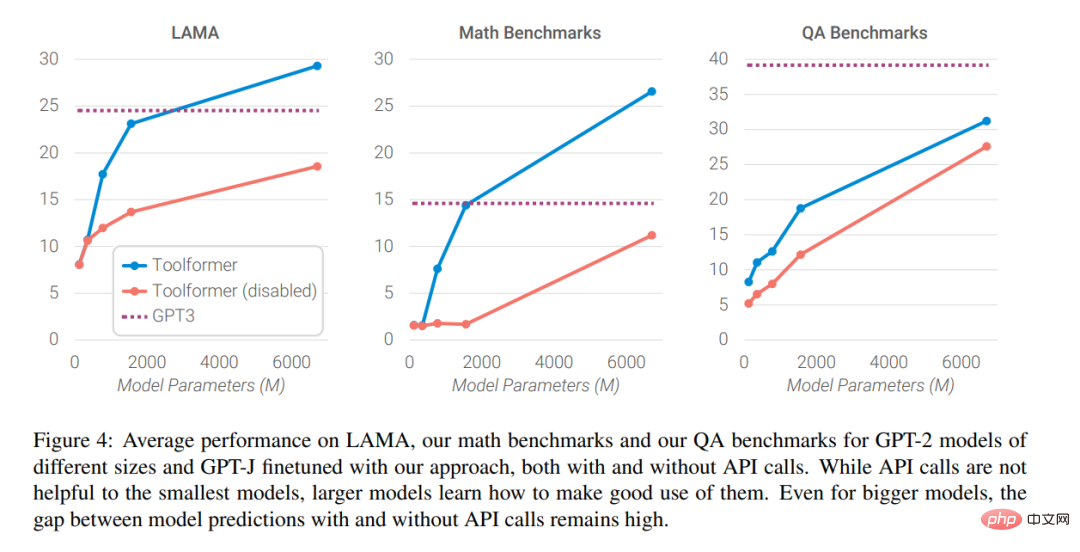
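For readers unfamiliar with the metric, perplexity is the exponential of the average negative log-likelihood per token, so lower is better. The sketch below shows the standard definition on toy values; the function name `perplexity` and the input numbers are illustrative assumptions and are not taken from the paper's evaluation code.

```python
import math
from typing import Sequence


def perplexity(token_logprobs: Sequence[float]) -> float:
    """Perplexity = exp of the average negative log-likelihood per token."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


# A model that assigns probability 0.25 to every token has a perplexity of
# about 4: it is as uncertain as a uniform choice among four options.
uniform = [math.log(0.25)] * 8
uniform_ppl = perplexity(uniform)
```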

Finally, the researchers analyzed how the ability to seek help from external tools affects model performance as the size of the language model increases. The results of this analysis are shown in Figure 4.

Interested readers can read the original paper for more details on the study.
