When GPT-4 reflected on its mistake: performance increased by nearly 30%, and programming ability increased by 21%

GPT-4’s way of thinking is becoming more and more human-like.

When humans make mistakes, they reflect on their behavior to avoid repeating them. If large language models such as GPT-4 could also reflect, how much would their performance improve?

It is well known that large language models (LLMs) have demonstrated unprecedented performance on a variety of tasks. However, state-of-the-art (SOTA) methods usually require model fine-tuning, policy optimization, and other operations over a defined state space. Because high-quality training data and well-defined state spaces are scarce, optimizing a model in this way remains difficult. Furthermore, models do not yet possess certain qualities inherent to human decision-making, particularly the ability to learn from mistakes.

Now, in a recent paper, researchers from Northeastern University, MIT, and other institutions propose Reflexion, which gives an agent dynamic memory and the ability to self-reflect.

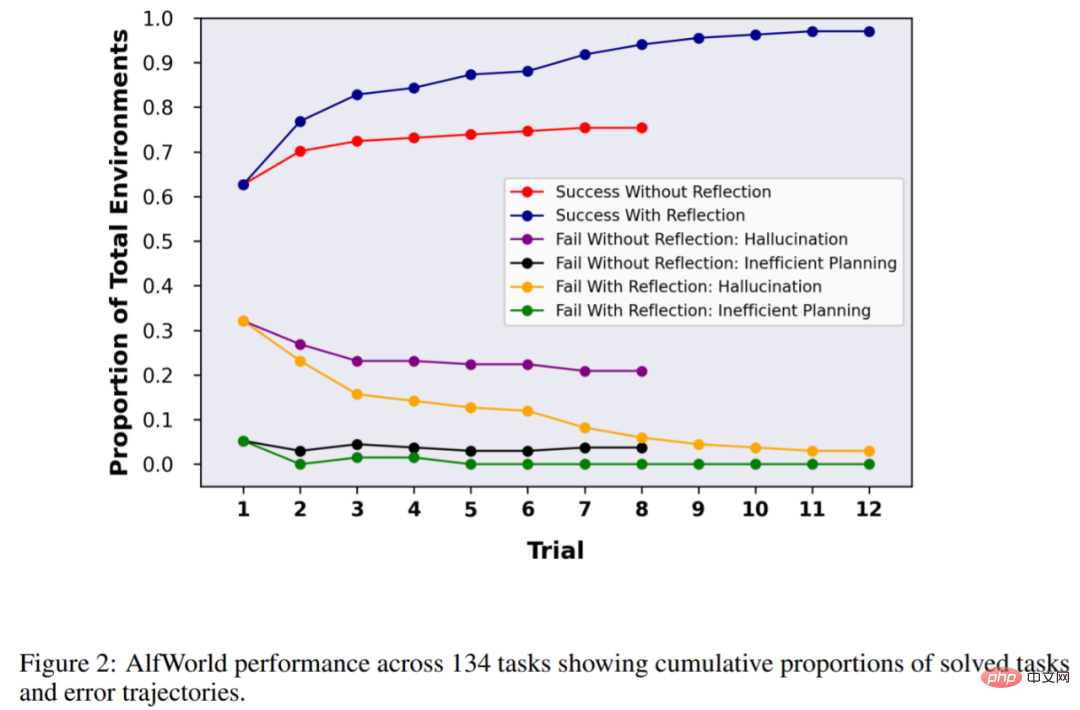

To verify the method's effectiveness, the study evaluated the agent's ability to complete decision-making tasks in the AlfWorld environment and knowledge-intensive, search-based question-answering tasks in the HotPotQA environment. The task success rates were 97% and 51%, respectively.

Paper address: https://arxiv.org/pdf/2303.11366.pdf

Project address: https://github.com/GammaTauAI/reflexion-human-eval

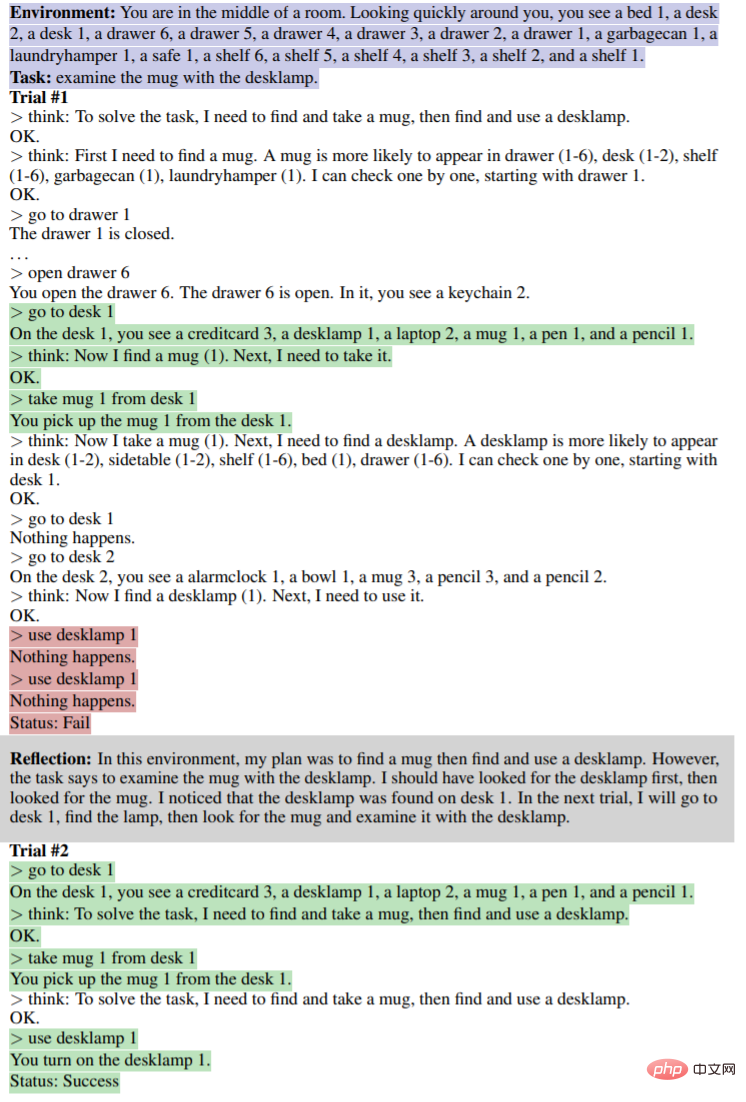

As shown in the figure below, in the AlfWorld environment various items are placed in a room, and the agent must produce a reasoning plan to obtain a certain object. In the upper part of the figure, the agent fails because of inefficient planning. After reflection, the agent recognizes the error, corrects its reasoning trajectory, and produces a concise trajectory (lower part of the figure).

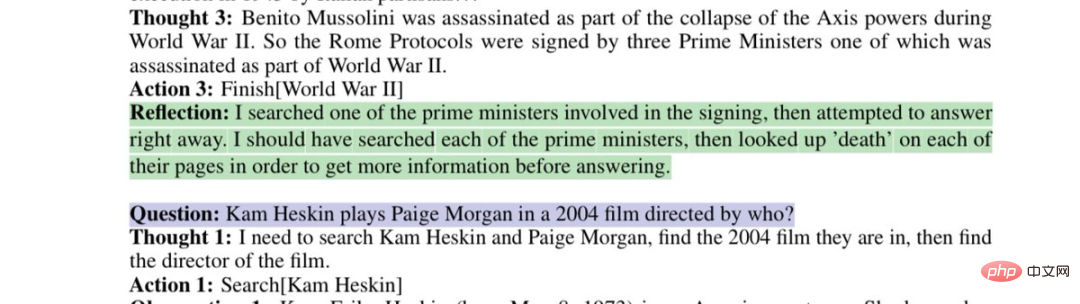

Model Rethinking Flawed Search Strategies:

The paper shows that you can ask GPT-4 to reflect on "Why were you wrong?" and generate a new prompt for itself that takes the reason for the error into account, repeating until the result is correct. This improves GPT-4's performance by an astonishing 30%.

Netizens can’t help but sigh: The development speed of artificial intelligence has exceeded our ability to adapt.

Method Introduction

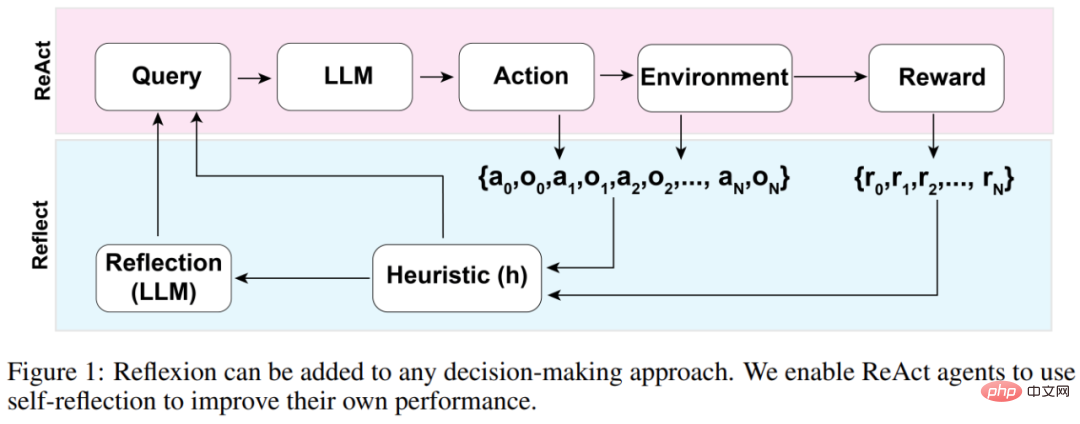

The overall architecture of the Reflexion agent is shown in Figure 1 below, where Reflexion utilizes ReAct (Yao et al., 2023). In the first trial, the agent is given a task from the environment that constitutes the initial query, and then the agent performs a sequence of actions generated by the LLM and receives observations and rewards from the environment. For environments that offer descriptive or continuous rewards, the study limits the output to simple binary success states to ensure applicability.

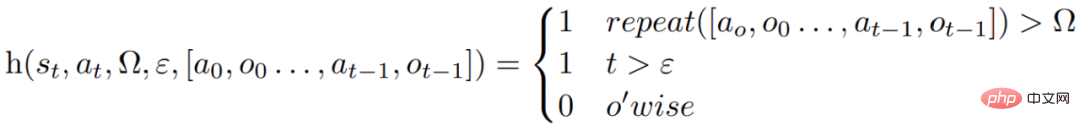

After each action a_t, the agent will calculate a heuristic function h, as shown in the figure below

This heuristic function is designed to detect hallucination (i.e., false or incorrect information) or inefficiency in the agent, and to "tell" the agent when it needs to reflect. Here t is the time step, s_t is the current state, Ω is the number of repeated action cycles, ε is the maximum total number of executed actions, and [a_0, o_0, . . . , a_(t−1), o_(t−1)] is the trajectory history. repeat is a simple function that counts how many times a loop of repeated actions produces the same result.
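The heuristic described above might be sketched as follows. This is only an illustration under stated assumptions, not the paper's code: `repeat` is approximated here by counting identical (action, observation) pairs at the end of the history, the comparisons against Ω and ε are assumed to be strict, the state and action arguments are omitted for brevity, and the threshold values in the usage line are hypothetical.

```python
from typing import List, Tuple

Step = Tuple[str, str]  # (action, observation)


def repeat(history: List[Step]) -> int:
    """Count identical (action, observation) pairs at the end of the history.

    Assumption: this stands in for the article's `repeat` function.
    """
    if not history:
        return 0
    last = history[-1]
    count = 0
    for step in reversed(history):
        if step != last:
            break
        count += 1
    return count


def h(t: int, history: List[Step], omega: int, epsilon: int) -> bool:
    """Return True when the agent should stop acting and reflect."""
    if repeat(history) > omega:  # stuck repeating an action with the same result
        return True
    if t > epsilon:  # too many actions spent on this trial
        return True
    return False


# Hypothetical thresholds: omega=3 repeated cycles, epsilon=30 total actions.
history = [("go to drawer 1", "The drawer is closed.")] * 4
print(h(t=4, history=history, omega=3, epsilon=30))  # True
```

With four identical steps and Ω = 3, the repetition check fires, so the sketch signals that reflection is needed.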

If h tells the agent that reflection is needed, the agent queries the LLM to reflect on its current task, trajectory history, and last reward; the agent then resets the environment and tries again in a subsequent trial. If h does not signal that reflection is needed, the agent adds a_t and o_t to its trajectory history and queries the LLM for the next action.

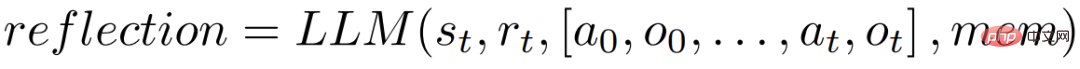

If heuristic h recommends reflection at time step t, the agent starts a reflection process based on its current state s_t, last reward r_t, previous actions and observations [a_0, o_0, . . . , a_t, o_t], and its existing working memory mem.

The purpose of reflection is to help the agent correct hallucinations and inefficiencies through repeated trials. The model used for reflection is an LLM prompted with specific failure trajectories and examples of ideal reflections.

The agent carries out this reflection process iteratively. In the experiments, the study caps the number of reflections stored in the agent's memory at 3 so that queries do not exceed the LLM's limits. The run terminates in any of the following situations:

- The maximum number of trials is exceeded;

- Performance fails to improve between two consecutive trials;

- The task is completed.
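The trial loop, the three-reflection memory cap, and the three termination conditions above can be sketched roughly as below. Everything here is a hypothetical stand-in rather than the authors' implementation: `run_trial` and `reflect` are placeholder callables for the environment rollout and the LLM reflection query, and the per-trial `score` used to detect "no improvement" is an assumed performance measure.

```python
from collections import deque
from typing import Callable, Deque, List, Optional, Tuple

MAX_REFLECTIONS = 3  # memory cap reported in the article


def run_reflexion(
    run_trial: Callable[[List[str]], Tuple[bool, float, str]],
    reflect: Callable[[str, List[str]], str],
    max_trials: int,
) -> Tuple[bool, int, List[str]]:
    """Run trials until success, stalled progress, or the trial limit.

    run_trial(memory) -> (success, score, trajectory)   # hypothetical
    reflect(trajectory, memory) -> reflection text      # hypothetical
    """
    # A bounded deque silently drops the oldest reflection once full.
    memory: Deque[str] = deque(maxlen=MAX_REFLECTIONS)
    prev_score: Optional[float] = None
    for trial in range(1, max_trials + 1):
        success, score, trajectory = run_trial(list(memory))
        if success:
            return True, trial, list(memory)  # task completed
        if prev_score is not None and score <= prev_score:
            return False, trial, list(memory)  # no improvement between trials
        prev_score = score
        memory.append(reflect(trajectory, list(memory)))
    return False, max_trials, list(memory)  # trial limit reached


# Stub environment: the score improves on each trial and succeeds on the third.
scores = iter([0.2, 0.5, 1.0])


def fake_trial(memory: List[str]) -> Tuple[bool, float, str]:
    s = next(scores)
    return s >= 1.0, s, f"trajectory with {len(memory)} reflections"


def fake_reflect(trajectory: str, memory: List[str]) -> str:
    return f"lesson from: {trajectory}"


result = run_reflexion(fake_trial, fake_reflect, max_trials=5)
print(result[0], result[1])  # True 3
```

Using a bounded queue is one simple way to keep only the most recent reflections in the prompt; the article does not say which reflections are kept when the cap is reached, so that choice is an assumption.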

Experiments and results

AlfWorld provides six different tasks and more than 3,000 environments. These tasks require the agent to understand the target task, formulate a sequential plan of subtasks, and perform operations in a given environment.

The study tested the agent in 134 AlfWorld environments, with tasks including finding hidden objects (for example, finding a fruit knife in a drawer), moving objects (for example, moving a knife to a cutting board), and using one object to manipulate another (for example, chilling tomatoes in a refrigerator).

Without reflection, the agent's accuracy was 63%. With Reflexion added, the agent handled 97% of the environments within 12 trials, failing to solve only 4 of the 134 tasks.
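As a quick sanity check, the reported 97% is consistent with 4 failures out of 134 tasks. The short calculation below uses only the figures quoted above; the variable names are illustrative.

```python
total_tasks = 134
failed = 4
baseline = 0.63  # accuracy without reflection, as reported

success_rate = (total_tasks - failed) / total_tasks
gain_points = (success_rate - baseline) * 100

print(f"{success_rate:.1%}")  # 97.0%
print(f"{gain_points:.0f} percentage points over the baseline")
```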

The next experiment was conducted on HotPotQA, a Wikipedia-based dataset containing 113k question-answer pairs. It is mainly used to challenge the agent's ability to parse content and to reason.

On a test of 100 HotPotQA question-answer pairs, the study compared the base agent and the Reflexion-based agent, running each until it failed to improve accuracy over successive trials. The base agent's performance did not improve. In the first trial, the base agent's accuracy was 34% and the Reflexion agent's was 32%. After 7 trials, however, the Reflexion agent had improved significantly, by close to 30%, far outperforming the base agent.

Similarly, when testing the model's ability to write code, GPT-4 with Reflexion is also significantly better than regular GPT-4.
