Share an example of data monitoring using the oplog mechanism in MongoDB

MongoDB's replication records write operations in a log called the oplog. This article shows how to use the oplog to monitor data operations in MongoDB in near real time.

Preface

Recently I needed to obtain newly inserted MongoDB data in real time. The program that performs the inserts already has its own processing logic, so adding the new code directly to it would be inconvenient. Most traditional databases provide triggers for this kind of task, but MongoDB has no equivalent feature (as far as I know; corrections are welcome). The solution also had to be written in Python, so I collected and adapted a suitable implementation.

1. Introduction

At first glance, this requirement closely resembles a database's master-slave backup mechanism: the slave stays synchronized with the master because the master records every write in a log that the slave can follow. Although MongoDB has no ready-made triggers, it does support master-slave backup, so we start from that mechanism.

2. OPLOG

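First, start the mongod daemon in master mode, either with the `--master` command-line option or by setting the master option to true in the configuration file. (Master-slave replication was deprecated in MongoDB 3.2 and removed in 4.0; on a replica set the oplog is the `local.oplog.rs` collection instead.)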

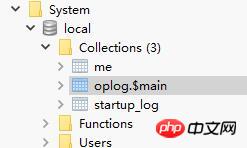

The `local` system database now contains a new oplog collection, and oplog entries are stored in `oplog.$main`. If this instance were running as a slave, some slave-related collections would also appear; since we are not setting up master-slave synchronization here, they do not exist.

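Let's take a look at the structure of an oplog entry:

"ts" : Timestamp(6417682881216249, 1): timestamp of the operation

"h" : NumberLong(0): unique identifier (hash) of the operation

"v" : 2: oplog version

"op" : "n": operation type

"ns" : "": namespace, i.e. the database and collection operated on

"o2" : { "_id" : ... }: update condition (the _id of the document being updated)

"o" : {}: operation value, i.e. the document

You need to know the following values of op:

'i': insert

'u': update

'd': remove (delete)

'c': command

'n': no-op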
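As a minimal sketch of how these fields fit together, the function below turns a single oplog entry (modelled here as a plain Python dict, so no MongoDB connection is needed) into a one-line description. `OP_NAMES`, `entry_id`, and `describe_oplog_entry` are illustrative names, not part of MongoDB or PyMongo; the `_id` lookup mirrors the `get_id` helper in the watcher code later in this article: prefer `o2._id` (updates), otherwise fall back to `o._id`.

```python
from typing import Any, Dict, Optional

# Operation codes found in the "op" field, as listed above.
OP_NAMES = {
    'i': 'insert',
    'u': 'update',
    'd': 'delete',
    'c': 'command',
    'n': 'noop',
}


def entry_id(entry: Dict[str, Any]) -> Optional[Any]:
    """Return the _id an entry refers to: o2._id for updates, else o._id."""
    o2 = entry.get('o2')
    if o2 is not None and o2.get('_id') is not None:
        return o2['_id']
    return entry.get('o', {}).get('_id')


def describe_oplog_entry(entry: Dict[str, Any]) -> str:
    """Build a short, human-readable summary of one oplog entry."""
    kind = OP_NAMES.get(entry.get('op'), 'unknown')
    if kind == 'noop':
        return 'noop'
    return '%s on %s (_id=%r)' % (kind, entry.get('ns', ''), entry_id(entry))


# Example: an insert into the hypothetical "test.users" collection.
print(describe_oplog_entry({'op': 'i', 'ns': 'test.users', 'o': {'_id': 1, 'name': 'a'}}))
# prints: insert on test.users (_id=1)
```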

As the structure above shows, we only need to keep reading entries, compare each ts with the last one seen, and use op to determine what kind of operation occurred. In effect, the program acts as a slave that receives changes from the master.
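The timestamp comparison can be sketched without a database. In this sketch each `ts` is modelled as a `(seconds, increment)` tuple, which orders the same way a BSON `Timestamp` does (seconds first, then the increment counter); the list `oplog` stands in for the real oplog collection, and `poll_once` is a hypothetical helper. Each call returns only the entries newer than the last timestamp seen and advances that marker, the same `{'ts': {'$gt': ts}}` idea the watcher uses.

```python
from typing import Any, Dict, List, Tuple

Ts = Tuple[int, int]  # (seconds, increment), ordered like a BSON Timestamp


def poll_once(oplog: List[Dict[str, Any]], last_ts: Ts) -> Tuple[List[Dict[str, Any]], Ts]:
    """Return entries with ts > last_ts (in ts order) and the new high-water mark."""
    fresh = sorted((e for e in oplog if e['ts'] > last_ts), key=lambda e: e['ts'])
    if fresh:
        last_ts = fresh[-1]['ts']
    return fresh, last_ts


oplog = [
    {'ts': (100, 1), 'op': 'i', 'ns': 'test.users', 'o': {'_id': 1}},
    {'ts': (100, 2), 'op': 'u', 'ns': 'test.users', 'o2': {'_id': 1}, 'o': {'$set': {'x': 1}}},
]

# Start from the newest existing entry, so only later writes are reported.
last_ts = max(e['ts'] for e in oplog)
oplog.append({'ts': (101, 1), 'op': 'd', 'ns': 'test.users', 'o': {'_id': 1}})

new, last_ts = poll_once(oplog, last_ts)
print([e['op'] for e in new], last_ts)  # ['d'] (101, 1)
```

Calling `poll_once` again with the updated `last_ts` returns an empty list until something newer is appended.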

3. CODE

I found an existing implementation on GitHub, but the library version it relies on is too old, so I modified it based on that work.

GitHub address: github.com/RedBeard0531/mongo-oplog-watcher

mongo_oplog_watcher.py is as follows:

```python
#!/usr/bin/env python3
import re
import time
from pprint import pprint  # pretty printer

import pymongo
from pymongo.errors import AutoReconnect


class OplogWatcher(object):

    def __init__(self, db=None, collection=None, poll_time=1.0, connection=None, start_now=True):
        # Namespace filter: an exact "db.collection", every collection in db, or nothing.
        if collection is not None:
            if db is None:
                raise ValueError('must specify db if you specify a collection')
            self._ns_filter = db + '.' + collection
        elif db is not None:
            self._ns_filter = re.compile(r'^%s\.' % re.escape(db))
        else:
            self._ns_filter = None

        self.poll_time = poll_time
        # pymongo.Connection was removed in PyMongo 3; MongoClient replaces it.
        self.connection = connection or pymongo.MongoClient()

        if start_now:
            self.start()

    @staticmethod
    def get_id(op):
        # Updates carry the target _id in "o2"; inserts and deletes carry it in "o".
        id = None
        o2 = op.get('o2')
        if o2 is not None:
            id = o2.get('_id')
        if id is None:
            id = op['o'].get('_id')
        return id

    def start(self):
        # Master/slave oplog; on a replica set the collection is 'oplog.rs'.
        oplog = self.connection.local['oplog.$main']
        # Start from the newest entry, so only later writes are reported.
        ts = oplog.find().sort('$natural', -1)[0]['ts']
        while True:
            if self._ns_filter is None:
                filter = {}
            else:
                filter = {'ns': self._ns_filter}
            filter['ts'] = {'$gt': ts}
            try:
                # A tailable cursor stays open and yields new entries as they arrive.
                cursor = oplog.find(filter, cursor_type=pymongo.CursorType.TAILABLE)
                while True:
                    for op in cursor:
                        ts = op['ts']
                        id = self.get_id(op)
                        self.all_with_noop(ns=op['ns'], ts=ts, op=op['op'], id=id, raw=op)
                    time.sleep(self.poll_time)
                    if not cursor.alive:
                        break
            except AutoReconnect:
                time.sleep(self.poll_time)

    def all_with_noop(self, ns, ts, op, id, raw):
        if op == 'n':
            self.noop(ts=ts)
        else:
            self.all(ns=ns, ts=ts, op=op, id=id, raw=raw)

    def all(self, ns, ts, op, id, raw):
        # Dispatch on the operation type.
        if op == 'i':
            self.insert(ns=ns, ts=ts, id=id, obj=raw['o'], raw=raw)
        elif op == 'u':
            self.update(ns=ns, ts=ts, id=id, mod=raw['o'], raw=raw)
        elif op == 'd':
            self.delete(ns=ns, ts=ts, id=id, raw=raw)
        elif op == 'c':
            self.command(ns=ns, ts=ts, cmd=raw['o'], raw=raw)
        elif op == 'db':
            self.db_declare(ns=ns, ts=ts, raw=raw)

    # Hooks for subclasses to override.
    def noop(self, ts):
        pass

    def insert(self, ns, ts, id, obj, raw, **kw):
        pass

    def update(self, ns, ts, id, mod, raw, **kw):
        pass

    def delete(self, ns, ts, id, raw, **kw):
        pass

    def command(self, ns, ts, cmd, raw, **kw):
        pass

    def db_declare(self, ns, ts, **kw):
        pass


class OplogPrinter(OplogWatcher):

    def all(self, **kw):
        pprint(kw)
        print()  # blank line between entries


if __name__ == '__main__':
    OplogPrinter()
```

The constructor first sets up the database connection and a polling delay; the delay is what makes the monitoring quasi-real-time:

```python
self.poll_time = poll_time
self.connection = connection or pymongo.MongoClient()
```
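Besides the poll interval, the constructor also decides which namespaces to watch: an exact `db.collection` string, a regular expression matching every collection in `db`, or no filter at all. The standalone sketch below reproduces that logic so it can be tried without a connection; `build_ns_filter` and `matches` are hypothetical names, `re.escape` is an added safeguard so database names containing regex metacharacters match literally, and `matches` approximates how MongoDB evaluates `{'ns': ns_filter}` (a string is an equality test, a compiled pattern is a regex search).

```python
import re
from typing import Optional, Union

NsFilter = Union[str, re.Pattern, None]


def build_ns_filter(db: Optional[str] = None, collection: Optional[str] = None) -> NsFilter:
    """Mirror of the watcher's namespace filter: exact ns, per-database regex, or None."""
    if collection is not None:
        if db is None:
            raise ValueError('must specify db if you specify a collection')
        return db + '.' + collection
    if db is not None:
        # Any namespace starting with "<db>." belongs to that database.
        return re.compile(r'^%s\.' % re.escape(db))
    return None


def matches(ns_filter: NsFilter, ns: str) -> bool:
    """Apply the filter locally: no filter matches all, a string must be equal, a regex must match."""
    if ns_filter is None:
        return True
    if isinstance(ns_filter, str):
        return ns == ns_filter
    return ns_filter.search(ns) is not None


f = build_ns_filter('test')
print(matches(f, 'test.users'), matches(f, 'testing.users'))  # True False
```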

The main function is start(). It takes the newest timestamp as a boundary and then processes every oplog entry written after it:

```python
def start(self):
    oplog = self.connection.local['oplog.$main']
    # Read the oplog collection mentioned earlier
    ts = oplog.find().sort('$natural', -1)[0]['ts']
    # Use the newest timestamp as the starting boundary
    while True:
        if self._ns_filter is None:
            filter = {}
        else:
            filter = {'ns': self._ns_filter}
        filter['ts'] = {'$gt': ts}
        try:
            cursor = oplog.find(filter, cursor_type=pymongo.CursorType.TAILABLE)
            # Process every entry written after that timestamp
            while True:
                for op in cursor:
                    ts = op['ts']
                    id = self.get_id(op)
                    self.all_with_noop(ns=op['ns'], ts=ts, op=op['op'], id=id, raw=op)
                    # Insert, update, or delete monitoring can be handled here
                time.sleep(self.poll_time)
                if not cursor.alive:
                    break
        except AutoReconnect:
            time.sleep(self.poll_time)
```

start() loops indefinitely; write the corresponding monitoring and processing logic in all_with_noop.
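As one hypothetical example of such processing logic, the class below reacts only to inserts into a single collection, which matches the original goal of picking up newly inserted data. `InsertMonitor` and its `on_insert` callback are illustrative names; in practice the same body could go into a subclass of `OplogWatcher`, since the method signature is the same. The entries are plain dicts, so the sketch runs without MongoDB.

```python
from typing import Any, Callable, Dict, List


class InsertMonitor:
    """Hypothetical handler that forwards new documents from one namespace to a callback."""

    def __init__(self, namespace: str, on_insert: Callable[[Dict[str, Any]], None]) -> None:
        self.namespace = namespace  # "database.collection", e.g. 'test.users'
        self.on_insert = on_insert

    def all_with_noop(self, ns: str, ts: Any, op: str, id: Any, raw: Dict[str, Any]) -> None:
        # Ignore no-op entries and writes to other collections.
        if op == 'n' or ns != self.namespace:
            return
        # Only inserts are of interest; updates ('u') and deletes ('d') are skipped.
        if op == 'i':
            self.on_insert(raw['o'])


received: List[Dict[str, Any]] = []
monitor = InsertMonitor('test.users', received.append)

# Feed it entries shaped like the ones start() passes in.
for entry in [
    {'ts': 1, 'op': 'i', 'ns': 'test.users', 'o': {'_id': 1, 'name': 'alice'}},
    {'ts': 2, 'op': 'u', 'ns': 'test.users', 'o2': {'_id': 1}, 'o': {'$set': {'name': 'bob'}}},
    {'ts': 3, 'op': 'i', 'ns': 'test.orders', 'o': {'_id': 9}},
    {'ts': 4, 'op': 'n', 'ns': '', 'o': {}},
]:
    monitor.all_with_noop(ns=entry['ns'], ts=entry['ts'], op=entry['op'],
                          id=entry['o'].get('_id'), raw=entry)

print(received)  # [{'_id': 1, 'name': 'alice'}]
```

Keeping the handler behind a callback means the inserting program itself does not need to change, which was the constraint described in the preface.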

In this way, a simple quasi-real-time MongoDB operation monitor can be implemented. As a next step, the newly inserted data can be processed further in combination with other operations.
