PHP5 面向对象程序设计

PHP5有一个单重继承的,限制访问的,可以重载的对象模型. 本章稍后会详细讨论的”继承”,包含类间的父-子关系. 另外,PHP支持对属性和方法的限制性访问. 你可以声明成员为private,不允许外部类访问. 最后,PHP允许一个子类从它的父类中重载成员.

PHP5的对象模型把对象看成与任何其它数据类型不同,通过引用来传递. PHP不要求你通过引用(reference)显性传递和返回对象. 在本章的最后将会详细阐述基于引用的对象模型. 它是PHP5中最重要的新特性.

有了更直接的对象模型,就拥有了附加的优势: 效率提高, 占用内存少,并且具有更大的灵活性.

在PHP的前几个版本中,脚本默认复制对象.现在PHP5只移动句柄,需要更少的时间. 脚本执行效率的提升是由于避免了不必要的复制. 在对象体系带来复杂性的同时,也带来了执行效率上的收益. 同时,减少复制意味着占用更少的内存,可以留出更多内存给其它操作,这也使效率提高.

Zand引擎2具有更大的灵活性. 一个令人高兴的发展是允许析构--在对象销毁之前执行一个类方法. 这对于利用内存也很有好处,让PHP清楚地知道什么时候没有对象的引用,把空出的内存分配到其它用途.

补充:

PHP5的内存管理

对象传递

PHP5使用了Zend引擎II,对象被储存于独立的结构Object Store中,而不像其它一般变量那样储存于Zval中(在PHP4中对象和一般变量一样存储于Zval)。在Zval中仅存储对象的指针而不是内容(value)。当我们复制一个对象或者将一个对象当作参数传递给一个函数时,我们不需要复制数据。仅仅保持相同的对象指针并由另一个zval通知现在这个特定的对象指向的Object Store。由于对象本身位于Object Store,我们对它所作的任何改变将影响到所有持有该对象指针的zval结构----表现在程序中就是目标对象的任何改变都会影响到源对象。.这使PHP对象看起来就像总是通过引用(reference)来传递,因此PHP中对象默认为通过“引用”传递,你不再需要像在PHP4中那样使用&来声明。

垃圾回收机制

某些语言,最典型的如C,需要你显式地要求分配内存当你创建数据结构。一旦你分配到内存,就可以在变量中存储信息。同时你也需要在结束使用变量时释放内存,这使机器可以空出内存给其它变量,避免耗光内存。

PHP可以自动进行内存管理,清除不再需要的对象。PHP使用了引用计数(reference counting)这种单纯的垃圾回收(garbage collection)机制。每个对象都内含一个引用计数器,每个reference连接到对象,计数器加1。当reference离开生存空间或被设为NULL,计数器减1。当某个对象的引用计数器为零时,PHP知道你将不再需要使用这个对象,释放其所占的内存空间。

例如:

复制代码 代码如下:

class Person{

}

function sendEmailTo(){

}

$haohappy = new Person( );

// 建立一个新对象: 引用计数 Reference count = 1

$haohappy2 = $haohappy;

// 通过引用复制: Reference count = 2

unset($haohappy);

// 删除一个引用: Reference count = 1

sendEmailTo($haohappy2);

// 通过引用传递对象:

// 在函数执行期间:

// Reference count = 2

// 执行结束后:

// Reference count = 1

unset($haohappy2);

// 删除引用: Reference count = 0 自动释放内存空间

?>

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Detailed explanation of C++ function inheritance: How to use 'base class pointer' and 'derived class pointer' in inheritance?

May 01, 2024 pm 10:27 PM

Detailed explanation of C++ function inheritance: How to use 'base class pointer' and 'derived class pointer' in inheritance?

May 01, 2024 pm 10:27 PM

In function inheritance, use "base class pointer" and "derived class pointer" to understand the inheritance mechanism: when the base class pointer points to the derived class object, upward transformation is performed and only the base class members are accessed. When a derived class pointer points to a base class object, a downward cast is performed (unsafe) and must be used with caution.

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving

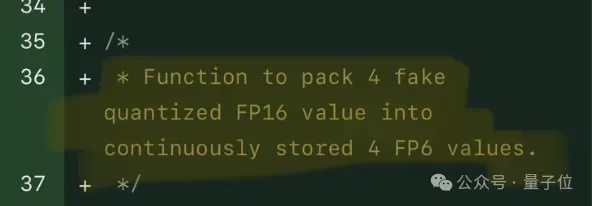

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

Comprehensively surpassing DPO: Chen Danqi's team proposed simple preference optimization SimPO, and also refined the strongest 8B open source model

Jun 01, 2024 pm 04:41 PM

In order to align large language models (LLMs) with human values and intentions, it is critical to learn human feedback to ensure that they are useful, honest, and harmless. In terms of aligning LLM, an effective method is reinforcement learning based on human feedback (RLHF). Although the results of the RLHF method are excellent, there are some optimization challenges involved. This involves training a reward model and then optimizing a policy model to maximize that reward. Recently, some researchers have explored simpler offline algorithms, one of which is direct preference optimization (DPO). DPO learns the policy model directly based on preference data by parameterizing the reward function in RLHF, thus eliminating the need for an explicit reward model. This method is simple and stable

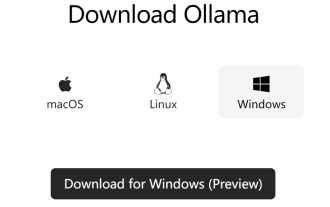

Docker completes local deployment of LLama3 open source large model in three minutes

Apr 26, 2024 am 10:19 AM

Docker completes local deployment of LLama3 open source large model in three minutes

Apr 26, 2024 am 10:19 AM

Overview LLaMA-3 (LargeLanguageModelMetaAI3) is a large-scale open source generative artificial intelligence model developed by Meta Company. It has no major changes in model structure compared with the previous generation LLaMA-2. The LLaMA-3 model is divided into different scale versions, including small, medium and large, to suit different application needs and computing resources. The parameter size of small models is 8B, the parameter size of medium models is 70B, and the parameter size of large models reaches 400B. However, during training, the goal is to achieve multi-modal and multi-language functionality, and the results are expected to be comparable to GPT4/GPT4V. Install OllamaOllama is an open source large language model (LL