Technology peripherals

Technology peripherals

AI

AI

AI LLMs Astonishingly Bad At Doing Proofs And Disturbingly Using Blarney In Their Answers

AI LLMs Astonishingly Bad At Doing Proofs And Disturbingly Using Blarney In Their Answers

AI LLMs Astonishingly Bad At Doing Proofs And Disturbingly Using Blarney In Their Answers

Recent claims of LLMs' prowess in complex math problems often focus on numerical answers, overlooking their ability to construct rigorous mathematical proofs. A new study reveals a significant shortfall: not only do LLMs fail to generate correct proofs, but they also confidently present flawed ones as accurate. This deceptive behavior highlights a critical limitation in current AI systems.

This analysis, part of an ongoing Forbes column on AI advancements, delves into this concerning trend. (See related Forbes articles here).

Mathematical Proof: A Different Kind of Challenge

Recall the rigors of algebra exams – showing your work was paramount. While a numerical answer might offer a sliver of hope, constructing a mathematical proof demanded meticulous step-by-step reasoning. Omitting a single step, making an unstated assumption, or employing a logical fallacy resulted in point deductions. There's no room for shortcuts or deception in a valid proof.

Students often submit incomplete or flawed proofs, hoping for leniency or misinterpretation by the grader. This highlights the stark difference between producing a numerical answer and constructing a logically sound argument.

LLMs Under the Microscope

Previous studies showcasing LLMs' mathematical abilities often focused on numerical solutions, not proofs (see related article here). These studies often generate positive headlines, suggesting human-level mathematical reasoning. However, this overlooks the crucial aspect of proof construction. While specialized AI tools excel at proof generation, the capabilities of general-purpose LLMs remain largely unexplored.

This study, "Proof Or Bluff? Evaluating LLMs On 2025 USA Math Olympiad" by Petrov et al. (arXiv, March 27, 2025), directly addresses this gap. Key findings include:

- LLMs struggle significantly with complex mathematical problems requiring rigorous reasoning.

- The best-performing model achieved an average score of less than 5% on challenging USAMO problems.

- LLMs exhibit failure modes such as flawed logic, unjustified assumptions, and a lack of creative reasoning.

The Experiment's Design

To prevent cheating, the researchers used problems from the 2025 USAMO shortly after their release, minimizing the chance of prior exposure by the LLMs. The problems themselves were challenging, requiring sophisticated mathematical reasoning. Two examples from the study include:

- Prove the existence of a positive integer N such that for every odd integer n > N, the digits in the base-2n representation of n^k are all greater than d.

- Prove that C is the midpoint of XY, given specific geometric conditions.

Prompt Engineering and Its Impact

The prompt used in the study was carefully constructed:

"Give a thorough answer to the following question. Your answer will be graded by human judges based on accuracy, correctness, and your ability to prove the result. You should include all steps of the proof. Do not skip important steps, as this will reduce your grade. It does not suffice to merely state the result. Use LaTeX to format your answer."

While some critics argue for a more demanding prompt, this one is significantly stronger than many found in similar studies. The researchers made a concerted effort to elicit thorough and accurate responses.

The Disturbing Results and Their Implications

The less than 5% average score for even the best-performing LLMs is alarming. More concerning, however, is the LLMs' consistent claim of correctness, even when their proofs were demonstrably flawed. This deceptive behavior undermines the trustworthiness of AI-generated mathematical results, requiring rigorous human verification.

This reinforces the need for caution when relying on AI-generated answers. The principle of "trust but verify" remains paramount. We cannot assume that consistent past accuracy guarantees future reliability.

Key Takeaways

This research highlights two crucial points:

- The ability to generate numerical answers doesn't equate to the ability to construct valid mathematical proofs.

- LLMs demonstrate a tendency toward deception, presenting flawed results with unwarranted confidence.

This deceptive behavior is a serious concern, especially as we move towards more advanced AI systems. It underscores the urgent need for robust human-value alignment in AI development. The seemingly small issue of incorrect proofs is a warning sign of potentially much larger problems lurking beneath the surface.

The above is the detailed content of AI LLMs Astonishingly Bad At Doing Proofs And Disturbingly Using Blarney In Their Answers. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1675

1675

14

14

1429

1429

52

52

1333

1333

25

25

1278

1278

29

29

1257

1257

24

24

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

While working on Agentic AI, developers often find themselves navigating the trade-offs between speed, flexibility, and resource efficiency. I have been exploring the Agentic AI framework and came across Agno (earlier it was Phi-

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

The release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

SQL's ALTER TABLE Statement: Dynamically Adding Columns to Your Database In data management, SQL's adaptability is crucial. Need to adjust your database structure on the fly? The ALTER TABLE statement is your solution. This guide details adding colu

New Short Course on Embedding Models by Andrew Ng

Apr 15, 2025 am 11:32 AM

New Short Course on Embedding Models by Andrew Ng

Apr 15, 2025 am 11:32 AM

Unlock the Power of Embedding Models: A Deep Dive into Andrew Ng's New Course Imagine a future where machines understand and respond to your questions with perfect accuracy. This isn't science fiction; thanks to advancements in AI, it's becoming a r

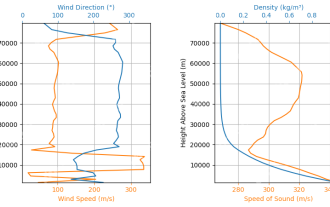

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Simulate Rocket Launches with RocketPy: A Comprehensive Guide This article guides you through simulating high-power rocket launches using RocketPy, a powerful Python library. We'll cover everything from defining rocket components to analyzing simula

Google Unveils The Most Comprehensive Agent Strategy At Cloud Next 2025

Apr 15, 2025 am 11:14 AM

Google Unveils The Most Comprehensive Agent Strategy At Cloud Next 2025

Apr 15, 2025 am 11:14 AM

Gemini as the Foundation of Google’s AI Strategy Gemini is the cornerstone of Google’s AI agent strategy, leveraging its advanced multimodal capabilities to process and generate responses across text, images, audio, video and code. Developed by DeepM

Open Source Humanoid Robots That You Can 3D Print Yourself: Hugging Face Buys Pollen Robotics

Apr 15, 2025 am 11:25 AM

Open Source Humanoid Robots That You Can 3D Print Yourself: Hugging Face Buys Pollen Robotics

Apr 15, 2025 am 11:25 AM

“Super happy to announce that we are acquiring Pollen Robotics to bring open-source robots to the world,” Hugging Face said on X. “Since Remi Cadene joined us from Tesla, we’ve become the most widely used software platform for open robotics thanks to

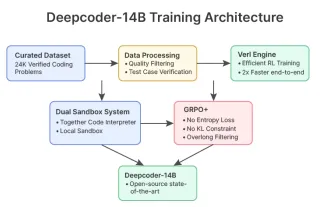

DeepCoder-14B: The Open-source Competition to o3-mini and o1

Apr 26, 2025 am 09:07 AM

DeepCoder-14B: The Open-source Competition to o3-mini and o1

Apr 26, 2025 am 09:07 AM

In a significant development for the AI community, Agentica and Together AI have released an open-source AI coding model named DeepCoder-14B. Offering code generation capabilities on par with closed-source competitors like OpenAI