Complex Reasoning in LLMs: Why do Smaller Models Struggle?

The research paper "Not All LLM Reasoners Are Created Equal" examines the limits of large language models (LLMs) on complex reasoning tasks, particularly those requiring multi-step problem-solving. LLMs do well on challenging mathematical problems posed one at a time, but their performance degrades significantly on interconnected questions where the solution to one problem informs the next, a concept termed "compositional reasoning."

The study, conducted by researchers from Mila, Google DeepMind, and Microsoft Research, reveals a surprising weakness in smaller, more cost-efficient LLMs. These models are proficient at simpler tasks but struggle with the "second-hop reasoning" needed to solve chained problems. Data leakage alone does not explain this; the weakness stems from an inability to maintain context and logically connect the parts of a problem. Instruction tuning, a common performance-enhancing technique, provides inconsistent benefits for smaller models and sometimes leads to overfitting.

Key Findings:

- Smaller LLMs exhibit a significant "reasoning gap" when tackling compositional problems.

- Performance drops dramatically when solving interconnected questions.

- Instruction tuning yields inconsistent improvements in smaller models.

- This reasoning limitation restricts the reliability of smaller LLMs in real-world applications.

- Even specialized math models struggle with compositional reasoning.

- More effective training methods are needed to enhance multi-step reasoning capabilities.

The paper uses a compositional Grade-School Math (GSM) test to illustrate this gap. The test involves two linked questions, where the answer to the first (Q1) becomes a variable (X) in the second (Q2). The results show that most models perform far worse on the compositional task than predicted by their performance on individual questions. Larger, more powerful models like GPT-4o demonstrate superior reasoning abilities, while smaller, cost-effective models, even those specialized in math, show a substantial performance decline.
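To make the chained format concrete, here is a minimal sketch of how such a test item could be represented and graded. The `ChainedProblem` class, the prompt wording, and the two pencil questions are all hypothetical illustrations written for this article; they are not taken from the paper's dataset or code.

```python
from dataclasses import dataclass


@dataclass
class ChainedProblem:
    """Hypothetical container for one compositional test item."""
    q1: str            # first question, solvable on its own
    q2_template: str   # second question, phrased in terms of X
    q1_answer: float   # ground-truth answer to Q1 (the value of X)
    q2_answer: float   # ground-truth answer to Q2 once X = q1_answer


def build_prompt(problem: ChainedProblem) -> str:
    """Join both questions so that Q2 depends on X, the answer to Q1."""
    return (
        "Let X be the answer to Q1.\n"
        f"Q1: {problem.q1}\n"
        f"Q2: {problem.q2_template}\n"
        "Solve Q1 first, then use X to solve Q2."
    )


def is_correct(problem: ChainedProblem, predicted_final: float, tol: float = 1e-6) -> bool:
    """Grade only the final answer to Q2, within a small tolerance."""
    return abs(predicted_final - problem.q2_answer) <= tol


# Made-up example item (not from the paper).
example = ChainedProblem(
    q1="A box holds 4 rows of 6 pencils. How many pencils are in the box?",
    q2_template=(
        "A class buys X pencils and shares them equally among 8 students. "
        "How many pencils does each student get?"
    ),
    q1_answer=24,
    q2_answer=3,
)

print(build_prompt(example))
```

Because only the final answer is graded in this sketch, a model that gets Q1 wrong, or that solves Q1 correctly but fails to carry X into Q2, is marked wrong either way.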

A graph comparing open-source and closed-source LLMs highlights this reasoning gap. A negative gap means a model scores lower on the compositional task than its accuracy on the individual questions would predict. Smaller, cost-effective models consistently exhibit larger negative reasoning gaps than larger models. GPT-4o, for example, shows a minimal gap, while others like Phi 3-mini-4k-IT demonstrate significant shortcomings.
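As a rough illustration of how such a gap could be computed, the sketch below assumes that the predicted compositional accuracy is the product of the accuracies on Q1 and Q2 taken separately, and that the gap is the observed compositional accuracy minus that prediction. Both the product baseline and the accuracy figures are assumptions made for this example, not values reported in the paper.

```python
def expected_compositional_accuracy(acc_q1: float, acc_q2: float) -> float:
    """Assumed baseline: both questions must be right, treated as independent."""
    return acc_q1 * acc_q2


def reasoning_gap(acc_comp: float, acc_q1: float, acc_q2: float) -> float:
    """Observed compositional accuracy minus the assumed baseline.

    A negative value means the model does worse on chained questions
    than its single-question accuracy would suggest.
    """
    return acc_comp - expected_compositional_accuracy(acc_q1, acc_q2)


# Hypothetical accuracies, chosen only to show the sign of the gap.
small_model_gap = reasoning_gap(acc_comp=0.50, acc_q1=0.90, acc_q2=0.88)
large_model_gap = reasoning_gap(acc_comp=0.90, acc_q1=0.96, acc_q2=0.95)

print(f"small model gap: {small_model_gap:+.3f}")
print(f"large model gap: {large_model_gap:+.3f}")
```

With these made-up numbers the smaller model's gap is clearly negative (about -0.29), while the larger model's gap is close to zero, which is the pattern the graph describes.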

Further analysis indicates that benchmark leakage alone does not account for the reasoning gap. Other contributing factors are overfitting to benchmarks, distraction by irrelevant context, and a failure to transfer information effectively between subtasks.

The study concludes that improving compositional reasoning requires new training approaches. Techniques like instruction tuning and math specialization offer some benefits but are insufficient to close the reasoning gap. Alternative methods, such as code-based reasoning, may be necessary before smaller, more cost-effective LLMs can reliably handle complex, multi-step reasoning tasks.
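For readers unfamiliar with code-based reasoning, the idea is that the model writes a short program instead of a natural-language chain of steps, so that intermediate values are passed along explicitly. The sketch below is a hand-written illustration of what such a program might look like for a made-up chained problem; the questions and function names are hypothetical and do not come from the paper.

```python
def solve_q1() -> int:
    # Q1 (made up): a box holds 4 rows of 6 pencils. How many pencils in total?
    rows, pencils_per_row = 4, 6
    return rows * pencils_per_row


def solve_q2(x: int) -> int:
    # Q2 (made up): a class buys X pencils and shares them equally
    # among 8 students. How many pencils does each student get?
    students = 8
    return x // students


# The answer to Q1 is bound to a variable and handed to Q2 explicitly,
# so the second hop cannot silently lose the first result.
x = solve_q1()
final_answer = solve_q2(x)
print(x, final_answer)
```

Here the value of X is carried by a variable rather than by the model's memory of its earlier text, which is one plausible reason this style could help with second-hop errors.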

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

While working on Agentic AI, developers often find themselves navigating the trade-offs between speed, flexibility, and resource efficiency. I have been exploring the Agentic AI framework and came across Agno (earlier it was Phi-

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

SQL's ALTER TABLE Statement: Dynamically Adding Columns to Your Database In data management, SQL's adaptability is crucial. Need to adjust your database structure on the fly? The ALTER TABLE statement is your solution. This guide details adding colu

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

The release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

Beyond The Llama Drama: 4 New Benchmarks For Large Language Models

Apr 14, 2025 am 11:09 AM

Beyond The Llama Drama: 4 New Benchmarks For Large Language Models

Apr 14, 2025 am 11:09 AM

Troubled Benchmarks: A Llama Case Study In early April 2025, Meta unveiled its Llama 4 suite of models, boasting impressive performance metrics that positioned them favorably against competitors like GPT-4o and Claude 3.5 Sonnet. Central to the launc

New Short Course on Embedding Models by Andrew Ng

Apr 15, 2025 am 11:32 AM

New Short Course on Embedding Models by Andrew Ng

Apr 15, 2025 am 11:32 AM

Unlock the Power of Embedding Models: A Deep Dive into Andrew Ng's New Course Imagine a future where machines understand and respond to your questions with perfect accuracy. This isn't science fiction; thanks to advancements in AI, it's becoming a r

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global Health

Apr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global Health

Apr 14, 2025 am 11:27 AM

Can a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

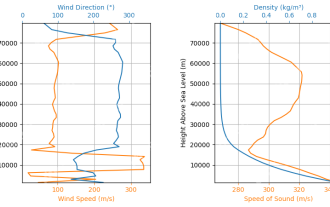

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Simulate Rocket Launches with RocketPy: A Comprehensive Guide This article guides you through simulating high-power rocket launches using RocketPy, a powerful Python library. We'll cover everything from defining rocket components to analyzing simula

Google Unveils The Most Comprehensive Agent Strategy At Cloud Next 2025

Apr 15, 2025 am 11:14 AM

Google Unveils The Most Comprehensive Agent Strategy At Cloud Next 2025

Apr 15, 2025 am 11:14 AM

Gemini as the Foundation of Google’s AI Strategy Gemini is the cornerstone of Google’s AI agent strategy, leveraging its advanced multimodal capabilities to process and generate responses across text, images, audio, video and code. Developed by DeepM