Are You Still Using LoRA to Fine-Tune Your LLM?

LoRA (Low-Rank Adaptation, arxiv.org/abs/2106.09685) is a popular technique for fine-tuning large language models (LLMs) cost-effectively. But 2024 saw a flurry of new parameter-efficient fine-tuning techniques, with LoRA alternatives appearing one after another: SVF, SVFT, MiLoRA, PiSSA, LoRA-XS, ... Most of them are built on a matrix technique I am very fond of: the singular value decomposition (SVD). Let's dive in.

LoRA

The original insight behind LoRA is that fine-tuning all of a model's weights is overkill. Instead, LoRA freezes the model and trains only a pair of small, low-rank "adapter" matrices. See the illustration below (where W is any weight matrix in a Transformer LLM).

Since there are far fewer gradients to compute and store, this saves memory and compute cycles. For example, here is a Gemma 8B model fine-tuned with LoRA to talk like a pirate: only 22 million parameters are trainable, while 8.5 billion parameters remain frozen.
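
To make the mechanism and the savings concrete, here is a minimal NumPy sketch of a single LoRA-adapted layer. The layer size, the rank and the `scale` factor are illustrative assumptions, not the Gemma setup above. Following the LoRA paper, B starts at zero so the adapted layer initially behaves exactly like the frozen one, and the low-rank update B·A is applied to the input without ever building the full matrix.

```python
import numpy as np

rng = np.random.default_rng(0)

d_out, d_in, rank = 1024, 1024, 8  # hypothetical layer size and LoRA rank

W = rng.standard_normal((d_out, d_in))        # frozen pretrained weight
A = rng.standard_normal((rank, d_in)) * 0.01  # trainable, small random init
B = np.zeros((d_out, rank))                   # trainable, zero init so the layer starts unchanged

def lora_forward(x, scale=1.0):
    """y = W x + scale * B (A x); the low-rank update is never materialized."""
    return W @ x + scale * (B @ (A @ x))

x = rng.standard_normal(d_in)
y = lora_forward(x)

full_params = W.size          # what full fine-tuning would train
lora_params = A.size + B.size # what LoRA trains
print(f"full fine-tuning: {full_params:,} trainable params")
print(f"LoRA rank {rank}: {lora_params:,} trainable params "
      f"({lora_params / full_params:.2%} of the layer)")
```

The trainable count grows linearly with the rank (rank × (d_in + d_out)) instead of quadratically with the layer size, which is where the savings come from.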

LoRA is very popular. It has even made it into mainstream ML frameworks such as Keras as a one-line API:

<code>gemma.backbone.enable_lora(rank=8)</code>

But is LoRA the best there is? Researchers have kept working to improve the recipe. In fact, there are many ways to choose smaller "adapter" matrices. Since most of them make clever use of the singular value decomposition (SVD) of a matrix, let's pause for a little bit of math.

SVD: Simple Mathematics

SVD is a great tool for understanding the structure of a matrix. It decomposes a matrix into three: $W = USV^T$, where U and V are orthogonal (i.e., changes of basis) and S is a diagonal matrix of singular values, sorted in decreasing order. This decomposition always exists.

In the "textbook" SVD, U and V are square matrices, while S is a rectangular matrix with the singular values on its diagonal, padded with zeros. In practice, you can use a square S and a rectangular U or V instead (see picture): the truncated parts would only ever be multiplied by zeros. This "economy" SVD is what common libraries provide, for example numpy.linalg.svd with full_matrices=False.
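
A quick NumPy check of the two forms, using a small random matrix as a stand-in for a weight matrix. It shows the shapes returned by numpy.linalg.svd with and without full_matrices, that both forms reconstruct the same W, and that the singular values come back sorted with orthonormal columns in U.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((6, 4))  # a small, non-square stand-in for a weight matrix

# Full ("textbook") SVD: U is 6x6, Vt is 4x4, and S would be a 6x4 rectangle.
U_full, s, Vt = np.linalg.svd(W, full_matrices=True)

# Economy SVD: U is 6x4, S is a square 4x4 diagonal, Vt is 4x4.
U, s_econ, Vt_econ = np.linalg.svd(W, full_matrices=False)

print(U_full.shape, U.shape, s.shape, Vt.shape)  # (6, 6) (6, 4) (4,) (4, 4)

# Both reconstruct W; the extra columns of U_full only ever multiply zeros.
S_rect = np.zeros((6, 4))
S_rect[:4, :4] = np.diag(s)
assert np.allclose(U_full @ S_rect @ Vt, W)
assert np.allclose(U @ np.diag(s_econ) @ Vt_econ, W)

# Singular values are sorted in descending order; U's columns are orthonormal.
assert np.all(np.diff(s_econ) <= 0)
assert np.allclose(U.T @ U, np.eye(4))
```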

So how can we use this to choose the weights to train more effectively? Let's take a quick look at five recent SVD-based low-rank fine-tuning techniques, with annotated illustrations.

SVF

The simplest alternative to LoRA is to apply SVD to the model's weight matrices and then fine-tune the singular values directly. Oddly enough, this is also the most recent technique, called SVF, published in the Transformers² paper (arxiv.org/abs/2501.06252v2).
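
Here is a minimal sketch of the idea, assuming the update is an element-wise rescaling of the singular values by a trainable vector (called `z` here purely for illustration). U and V are computed once and stay frozen, so a layer only has as many trainable parameters as it has singular values.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((512, 256))  # hypothetical frozen weight matrix

# One-time decomposition; U, s and Vt stay frozen.
U, s, Vt = np.linalg.svd(W, full_matrices=False)

# The only trainable parameters: one scale per singular value (1.0 = no change).
z = np.ones_like(s)

def svf_weight(z):
    """Rebuild the adapted weight with rescaled singular values: U diag(s * z) Vt."""
    return (U * (s * z)) @ Vt  # broadcasting scales column i of U by s[i] * z[i]

assert np.allclose(svf_weight(z), W)  # the identity init reproduces W
print("trainable params:", z.size, "vs. full matrix:", W.size)
```

Here that is 256 trainable values for a 512 × 256 matrix, fewer than even a rank-1 LoRA adapter on the same layer (768).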

SVF is much more parameter-efficient than LoRA. It also makes fine-tuned models composable. For more on this, see my write-up on Transformers², but in short, combining two SVF fine-tuned models is just an addition:
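
Why is composition so simple? Because every SVF fine-tune of a given matrix shares the same frozen U and V, and rebuilding the matrix is linear in the singular values. The sketch below uses random perturbations as stand-ins for two trained singular-value vectors. It checks that adding their deltas in singular-value space gives the same matrix as adding the corresponding weight deltas.

```python
import numpy as np

rng = np.random.default_rng(1)
W = rng.standard_normal((64, 32))          # hypothetical frozen weight
U, s, Vt = np.linalg.svd(W, full_matrices=False)

def rebuild(sv):
    """Weight matrix for a given vector of singular values, with U and Vt held fixed."""
    return (U * sv) @ Vt

# Two independently "fine-tuned" singular-value vectors (random stand-ins, not trained).
s_task_a = s + 0.1 * rng.standard_normal(s.shape)
s_task_b = s + 0.1 * rng.standard_normal(s.shape)

# Because U and Vt are shared, combining the two fine-tunes is plain vector arithmetic...
s_combined = s + (s_task_a - s) + (s_task_b - s)

# ...and it matches adding the two weight deltas in full matrix space.
W_combined = W + (rebuild(s_task_a) - W) + (rebuild(s_task_b) - W)
assert np.allclose(rebuild(s_combined), W_combined)
```

Weighted mixes work the same way: any linear combination of the singular-value vectors maps to the same linear combination of weight matrices.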

SVFT

If you need more trainable parameters, the SVFT paper (arxiv.org/abs/2405.19597) explores several ways of adding them, starting with more trainable weights on the diagonal.

It also evaluates several other options, such as scattering them randomly across the "M" matrix.
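
A rough sketch of this family, assuming the adapted weight is $U(S + M)V^T$ with a sparse trainable M restricted to a fixed mask. The two mask builders below, a band around the diagonal and the diagonal plus randomly scattered entries, are illustrative stand-ins for the patterns discussed in the paper, and their names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((128, 128))  # hypothetical frozen square weight
U, s, Vt = np.linalg.svd(W, full_matrices=False)
k = s.size

def banded_mask(k, bandwidth):
    """True on the diagonal and on `bandwidth` off-diagonals on either side of it."""
    i, j = np.indices((k, k))
    return np.abs(i - j) <= bandwidth

def random_mask(k, n_extra, rng):
    """The diagonal plus `n_extra` randomly scattered off-diagonal positions."""
    mask = np.eye(k, dtype=bool)
    off_diag = np.flatnonzero(~mask)
    mask.flat[rng.choice(off_diag, size=n_extra, replace=False)] = True
    return mask

mask = banded_mask(k, bandwidth=2)   # or: random_mask(k, n_extra=4 * k, rng=rng)
M = np.zeros((k, k))                 # trainable values; only masked entries matter

def svft_weight(M):
    """U (diag(s) + M) Vt, with M restricted to the sparsity pattern."""
    return U @ (np.diag(s) + M * mask) @ Vt

assert np.allclose(svft_weight(M), W)  # zero init reproduces W
print("trainable params:", int(mask.sum()), "vs. diagonal only:", k)
```

The mask fixes the parameter budget up front: widening the band or adding random entries trades more trainable values for more memory.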

More importantly, the SVFT paper confirms that having more trainable values than just the diagonal is useful. See the fine-tuning results below.

Next come several techniques that split the singular values into two groups, "big" and "small". But before we go on, let's pause for a little more SVD math.

More SVD Mathematics

SVD is usually thought of as a decomposition into three matrices, $W = USV^T$, but it can also be seen as a sum of many rank-1 matrices, weighted by the singular values (where $u_i$ and $v_i$ are the columns of U and V):

$$W = \sum_i s_i\, u_i v_i^T$$

If you want to prove this, express a single matrix element $W_{jk}$ in two ways: once from the $USV^T$ form using the rules of matrix multiplication, and once from the $\sum_i s_i u_i v_i^T$ form. Simplify using the fact that S is diagonal, and observe that the two expressions are identical.
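
Written out element by element, that proof goes as follows, taking $u_i$ and $v_i$ to be the i-th columns of U and V, so that $(u_i)_j = U_{ji}$ and $(v_i)_k = V_{ki}$, and writing the diagonal as $S_{il} = s_i \delta_{il}$:

```latex
W_{jk} = \sum_{i}\sum_{l} U_{ji}\, S_{il}\, (V^T)_{lk}
       = \sum_{i}\sum_{l} U_{ji}\, s_i \delta_{il}\, V_{kl}
       = \sum_{i} s_i\, U_{ji}\, V_{ki}

\Big(\sum_{i} s_i\, u_i v_i^T\Big)_{jk}
       = \sum_{i} s_i\, (u_i)_j\, (v_i)_k
       = \sum_{i} s_i\, U_{ji}\, V_{ki}
```

Both lines end in the same expression, so the two forms agree element by element.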

In this representation, it's easy to see that you can split the sum into two parts. And since singular values can always be sorted, the split naturally separates the "big" singular values from the "small" ones.

Going back to the three-matrix form $W = USV^T$, this is what the split looks like:
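
The same split in NumPy, on a random stand-in matrix. The split point `r` is an arbitrary illustrative choice. Slicing the first r columns of U (and rows of Vt) gives the "big" part, the rest gives the "small" part, and the two add back up to W. The last check confirms the rank-1 sum view from above.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((64, 48))     # stand-in weight matrix
U, s, Vt = np.linalg.svd(W, full_matrices=False)

r = 8  # hypothetical split point: the r largest singular values count as "big"

# "Big" part: the first r singular triplets (singular values are sorted descending).
W_big = U[:, :r] @ np.diag(s[:r]) @ Vt[:r, :]
# "Small" part: all remaining triplets.
W_small = U[:, r:] @ np.diag(s[r:]) @ Vt[r:, :]

assert np.allclose(W_big + W_small, W)

# Equivalent view: a weighted sum of rank-1 matrices s_i * u_i v_i^T.
W_sum = sum(s[i] * np.outer(U[:, i], Vt[i, :]) for i in range(s.size))
assert np.allclose(W_sum, W)
print("rank of big part:", np.linalg.matrix_rank(W_big))
```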

Building on this, two papers, PiSSA and MiLoRA, explore what happens if you tune only the big singular values or only the small ones.

PiSSA

PiSSA (Principal Singular values and Singular vectors Adaptation, arxiv.org/abs/2404.02948) claims that you should tune only the big, principal singular values. The mechanism is as follows:
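
As I understand the mechanism, PiSSA keeps a LoRA-style pair of matrices but initializes them from the top-r singular components, splitting each singular value's square root between the two. The remaining "small" components form a frozen residual. A minimal sketch under those assumptions (`pissa_init` is a hypothetical helper name):

```python
import numpy as np

def pissa_init(W, r):
    """Split W into a trainable rank-r adapter (principal components) and a frozen residual.

    Returns A (m x r), B (r x n), W_res (m x n) with W == W_res + A @ B.
    """
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    sqrt_s = np.sqrt(s[:r])
    A = U[:, :r] * sqrt_s              # m x r, trainable
    B = sqrt_s[:, None] * Vt[:r, :]    # r x n, trainable
    W_res = W - A @ B                  # frozen: the "small" singular components
    return A, B, W_res

rng = np.random.default_rng(0)
W = rng.standard_normal((64, 48))      # stand-in weight matrix
A, B, W_res = pissa_init(W, r=8)

assert np.allclose(W_res + A @ B, W)   # the model is unchanged at initialization
```

Unlike plain LoRA, where B starts at zero, the adapter here starts out carrying the most significant part of W, so gradient updates act directly on the principal components.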

From the paper: "PiSSA aims to approximate full fine-tuning by adapting the principal singular components, which are believed to capture the essence of the weight matrices. In contrast, MiLoRA aims to adapt to new tasks while maximally retaining the base model's knowledge."

The PiSSA paper also makes an interesting observation: full fine-tuning is prone to overfitting, so a low-rank fine-tuning technique may actually give you better results in absolute terms.

MiLoRA

MiLoRA, on the other hand, claims that you should tune only the small singular values. It uses a mechanism similar to PiSSA's:
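
A sketch of the mirror image of the PiSSA initialization: the trainable pair is built from the r smallest singular components, and the frozen residual keeps the principal ones. Splitting the square roots of the singular values between A and B is an assumption carried over from the PiSSA sketch, and `milora_init` is a hypothetical name.

```python
import numpy as np

def milora_init(W, r):
    """Like PiSSA, but the trainable adapter starts from the r *smallest* singular components.

    Returns A (m x r), B (r x n), W_res (m x n) with W == W_res + A @ B.
    """
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    sqrt_s = np.sqrt(s[-r:])
    A = U[:, -r:] * sqrt_s             # m x r, trainable
    B = sqrt_s[:, None] * Vt[-r:, :]   # r x n, trainable
    W_res = W - A @ B                  # frozen: the principal ("big") components
    return A, B, W_res

rng = np.random.default_rng(0)
W = rng.standard_normal((64, 48))      # stand-in weight matrix
A, B, W_res = milora_init(W, r=8)

assert np.allclose(W_res + A @ B, W)   # the model is unchanged at initialization
```

The frozen residual keeps W's largest singular directions intact, which fits MiLoRA's stated goal of preserving the base model's knowledge.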

Surprisingly, MiLoRA seems to come out ahead, at least when fine-tuning on math datasets, which are probably fairly well aligned with the original pre-training. One could argue that PiSSA should be better suited to bending the LLM's behavior further away from its pre-training.

LoRA-XS

Finally, I want to mention LoRA-XS (arxiv.org/abs/2405.17604). It is very similar to PiSSA, with a slightly different mechanism. It also shows that good results can be obtained with far fewer parameters than LoRA.
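
A minimal sketch of that mechanism as I understand it: the top-r singular vectors (with the singular values folded into one side) become frozen projection matrices, and the only trainable parameters are a small r × r matrix R sandwiched between them. Which side the singular values go on, and the rank used here, are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
W = rng.standard_normal((256, 128))   # hypothetical frozen weight
r = 16                                # hypothetical adapter rank

U, s, Vt = np.linalg.svd(W, full_matrices=False)
A = U[:, :r] * s[:r]   # frozen, 256 x r: top-r left singular vectors scaled by singular values
B = Vt[:r, :]          # frozen, r x 128: top-r right singular vectors
R = np.zeros((r, r))   # the ONLY trainable matrix, r x r

def lora_xs_weight(R):
    """W + A R B: the update lives in the span of the top-r singular vectors."""
    return W + A @ R @ B

assert np.allclose(lora_xs_weight(R), W)
lora_params = r * (W.shape[0] + W.shape[1])   # plain LoRA at the same rank
print("LoRA-XS trainable:", R.size, "| LoRA trainable:", lora_params)
```

The trainable count is r² and no longer depends on the layer's dimensions: 256 values here versus 6,144 for plain LoRA at the same rank.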

The paper offers a mathematical argument that this setup is "ideal" in two cases:

- Truncating the smallest singular values from the SVD still approximates the weight matrix well

- The fine-tuning data distribution is close to the pre-training data distribution

Both seem doubtful to me, so I won't go into the math in detail. Some results:

The underlying assumption seems to be that singular values fall naturally into "big" and "small" ones, but is that really the case? I quickly checked Gemma2 9B on Colab. Bottom line: 99% of its singular values lie in the 0.1 – 1.1 range. I'm not sure it makes sense to divide them into "big" and "small".
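
If you want to run this kind of check yourself, here is a small helper that summarizes the singular value distribution of one weight matrix. The helper name and the percentile choice are my own. It runs on a scaled random matrix only so that it is self-contained. Loading real Gemma2 weights is not shown, and the printed numbers say nothing about Gemma2.

```python
import numpy as np

def singular_value_summary(W, lo=0.1, hi=1.1):
    """Fraction of W's singular values inside [lo, hi], plus the 0.5th/99.5th percentiles."""
    s = np.linalg.svd(W, compute_uv=False)
    frac_in_range = np.mean((s >= lo) & (s <= hi))
    p_low, p_high = np.percentile(s, [0.5, 99.5])
    return frac_in_range, p_low, p_high

# Stand-in only: a random matrix, NOT actual Gemma2 weights.
rng = np.random.default_rng(0)
W = rng.standard_normal((1024, 512)) / np.sqrt(1024)
frac, p_low, p_high = singular_value_summary(W)
print(f"{frac:.1%} of singular values in [0.1, 1.1]; "
      f"99% lie between {p_low:.3f} and {p_high:.3f}")
```

Run over every projection matrix of a model, the percentile pair shows directly whether there is a clear gap between "big" and "small" values or just a smooth spread.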

In conclusion

There are many other parameter-efficient fine-tuning techniques. Two worth mentioning:

- DoRA (arxiv.org/abs/2402.09353), which splits the weights into magnitude and direction, and then tunes those.

- AdaLoRA (arxiv.org/abs/2303.10512), which uses a complex mechanism to find the optimal tuning rank for a given budget of trainable weights.
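
To illustrate the magnitude/direction split mentioned for DoRA, here is a minimal sketch. It assumes a per-column norm for the magnitude and a LoRA pair for updating the direction. The shapes and initialization are illustrative choices, and this is not the paper's reference implementation.

```python
import numpy as np

rng = np.random.default_rng(0)
d_out, d_in, r = 64, 32, 4
W0 = rng.standard_normal((d_out, d_in))   # frozen pretrained weight

# Magnitude: one norm per column (trainable, initialized from W0).
m = np.linalg.norm(W0, axis=0, keepdims=True)      # shape (1, d_in)

# Direction update via a LoRA pair (trainable; B starts at zero).
A = rng.standard_normal((r, d_in)) * 0.01
B = np.zeros((d_out, r))

def dora_weight(m, A, B):
    """m * (W0 + B A) / ||W0 + B A||_c, i.e. magnitude times unit-norm columns."""
    V = W0 + B @ A
    return m * V / np.linalg.norm(V, axis=0, keepdims=True)

assert np.allclose(dora_weight(m, A, B), W0)  # unchanged at initialization
```

Because the columns are renormalized, the LoRA pair can only change directions, while the separate vector m controls how large each column is.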

My take: to beat the LoRA baseline with 10x fewer parameters, I like the simplicity of Transformers²'s SVF. If you need more trainable weights, SVFT is a simple extension. Both use all singular values (full rank, no singular value pruning) and are still cheap. Happy fine-tuning!

Note: All illustrations were created by the author or extracted from arxiv.org papers for commentary and discussion.

The above is the detailed content of Are You Still Using LoRA to Fine-Tune Your LLM?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1677

1677

14

14

1430

1430

52

52

1333

1333

25

25

1278

1278

29

29

1257

1257

24

24

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

How to Build MultiModal AI Agents Using Agno Framework?

Apr 23, 2025 am 11:30 AM

While working on Agentic AI, developers often find themselves navigating the trade-offs between speed, flexibility, and resource efficiency. I have been exploring the Agentic AI framework and came across Agno (earlier it was Phi-

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost Efficiency

Apr 16, 2025 am 11:37 AM

The release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

SQL's ALTER TABLE Statement: Dynamically Adding Columns to Your Database In data management, SQL's adaptability is crucial. Need to adjust your database structure on the fly? The ALTER TABLE statement is your solution. This guide details adding colu

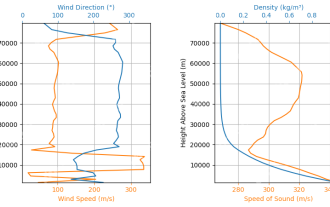

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Rocket Launch Simulation and Analysis using RocketPy - Analytics Vidhya

Apr 19, 2025 am 11:12 AM

Simulate Rocket Launches with RocketPy: A Comprehensive Guide This article guides you through simulating high-power rocket launches using RocketPy, a powerful Python library. We'll cover everything from defining rocket components to analyzing simula

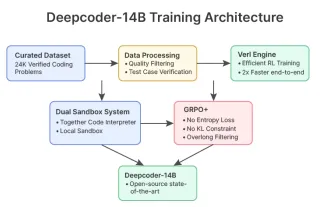

DeepCoder-14B: The Open-source Competition to o3-mini and o1

Apr 26, 2025 am 09:07 AM

DeepCoder-14B: The Open-source Competition to o3-mini and o1

Apr 26, 2025 am 09:07 AM

In a significant development for the AI community, Agentica and Together AI have released an open-source AI coding model named DeepCoder-14B. Offering code generation capabilities on par with closed-source competitors like OpenAI

The Prompt: ChatGPT Generates Fake Passports

Apr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake Passports

Apr 16, 2025 am 11:35 AM

Chip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si

Guy Peri Helps Flavor McCormick's Future Through Data Transformation

Apr 19, 2025 am 11:35 AM

Guy Peri Helps Flavor McCormick's Future Through Data Transformation

Apr 19, 2025 am 11:35 AM

Guy Peri is McCormick’s Chief Information and Digital Officer. Though only seven months into his role, Peri is rapidly advancing a comprehensive transformation of the company’s digital capabilities. His career-long focus on data and analytics informs

Runway AI's Gen-4: How Can AI Montage Go Beyond Absurdity

Apr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond Absurdity

Apr 16, 2025 am 11:45 AM

The film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

SVFT

SVFT MiLoRA

MiLoRA LoRA-XS

LoRA-XS in conclusion

in conclusion