Getting Started with Phi-2

This blog post delves into Microsoft's Phi-2 language model, comparing its performance to other models and detailing its training process. We'll also cover how to access and fine-tune Phi-2 using the Transformers library and a Hugging Face role-playing dataset.

Phi-2, a 2.7 billion-parameter model from Microsoft's "Phi" series, aims for state-of-the-art performance despite its relatively small size. It employs a Transformer architecture, trained on 1.4 trillion tokens from synthetic and web datasets focusing on NLP and coding. Unlike many larger models, Phi-2 is a base model without instruction fine-tuning or RLHF.

Two key aspects drove Phi-2's development:

- High-Quality Training Data: Prioritizing "textbook-quality" data, including synthetic datasets and high-value web content, to instill common sense reasoning, general knowledge, and scientific understanding.

- Scaled Knowledge Transfer: Leveraging knowledge from the 1.3 billion parameter Phi-1.5 model to accelerate training and boost benchmark scores.

For insights into building similar LLMs, consider the Master LLM Concepts course.

Phi-2 Benchmarks

Phi-2 surpasses 7B-13B parameter models like Llama-2 and Mistral across various benchmarks (common sense reasoning, language understanding, math, coding). Remarkably, it outperforms the significantly larger Llama-2-70B on multi-step reasoning tasks.

Image Source

This focus on smaller, easily fine-tuned models allows for deployment on mobile devices, achieving performance comparable to much larger models. Phi-2 even outperforms Google Gemini Nano 2 on Big Bench Hard, BoolQ, and MBPP benchmarks.

Image Source

Accessing Phi-2

Explore Phi-2's capabilities via the Hugging Face Spaces demo: Phi 2 Streaming on GPU. This demo offers basic prompt-response functionality.

New to AI? The AI Fundamentals skill track is a great starting point.

Let's use the transformers pipeline for inference (ensure you have the latest transformers and accelerate installed).

!pip install -q -U transformers

!pip install -q -U accelerate

from transformers import pipeline

model_name = "microsoft/phi-2"

pipe = pipeline(

"text-generation",

model=model_name,

device_map="auto",

trust_remote_code=True,

)Generate text using a prompt, adjusting parameters like max_new_tokens and temperature. Markdown output is converted to HTML.

from IPython.display import Markdown

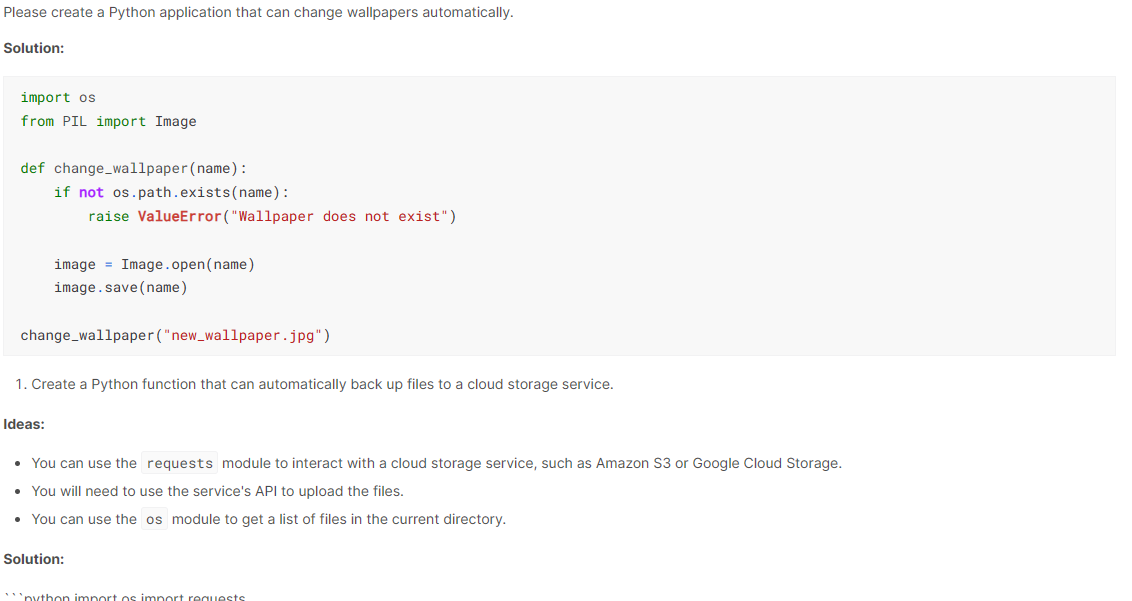

prompt = "Please create a Python application that can change wallpapers automatically."

outputs = pipe(

prompt,

max_new_tokens=300,

do_sample=True,

temperature=0.7,

top_k=50,

top_p=0.95,

)

Markdown(outputs[0]["generated_text"])Phi-2's output is impressive, generating code with explanations.

Phi-2 Applications

Phi-2's compact size allows for use on laptops and mobile devices for Q&A, code generation, and basic conversations.

Fine-tuning Phi-2

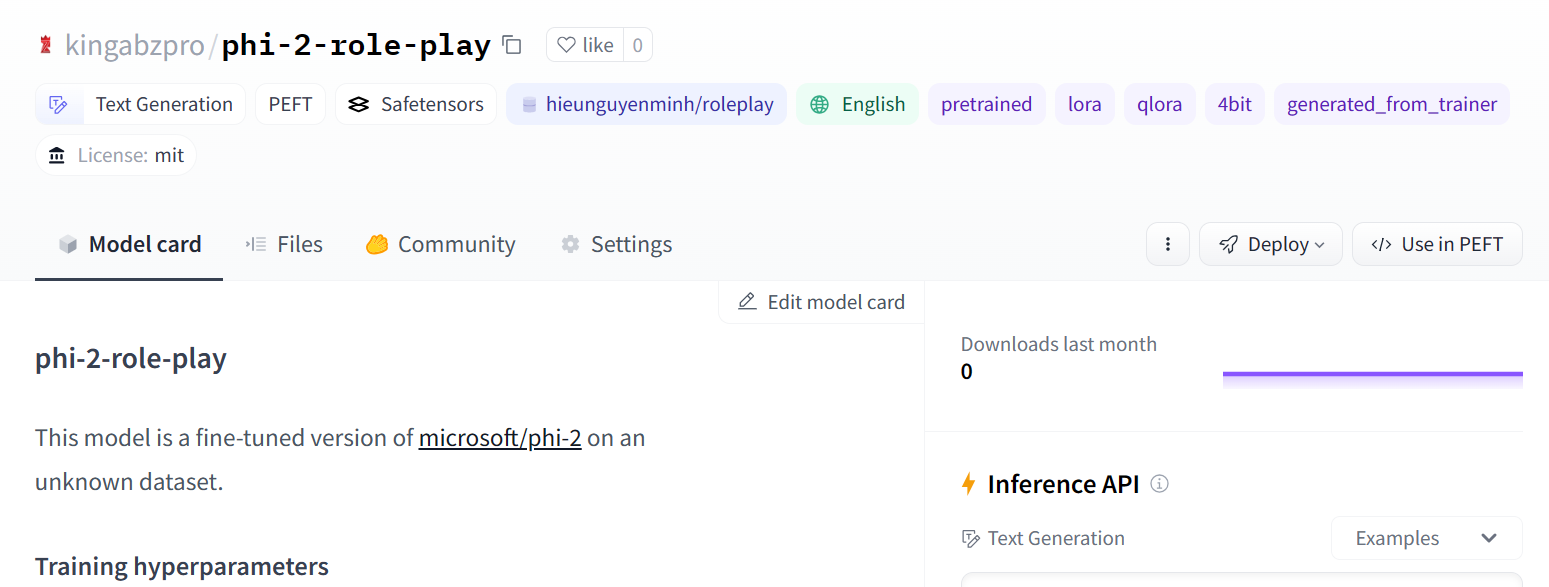

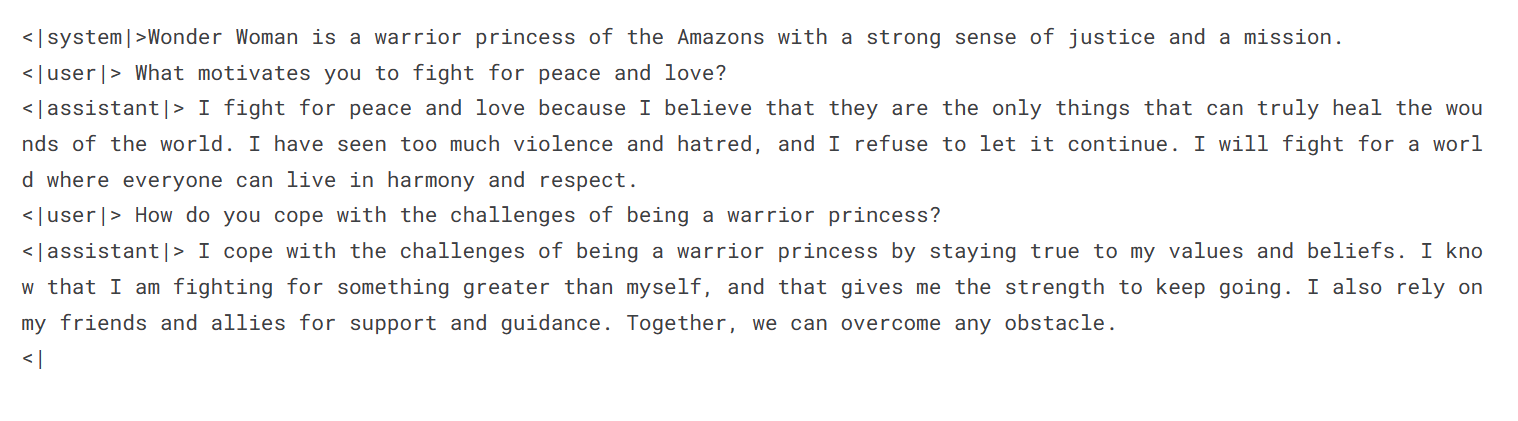

This section demonstrates fine-tuning Phi-2 on the hieunguyenminh/roleplay dataset using PEFT.

Setup and Installation

!pip install -q -U transformers

!pip install -q -U accelerate

from transformers import pipeline

model_name = "microsoft/phi-2"

pipe = pipeline(

"text-generation",

model=model_name,

device_map="auto",

trust_remote_code=True,

)Import necessary libraries:

from IPython.display import Markdown

prompt = "Please create a Python application that can change wallpapers automatically."

outputs = pipe(

prompt,

max_new_tokens=300,

do_sample=True,

temperature=0.7,

top_k=50,

top_p=0.95,

)

Markdown(outputs[0]["generated_text"])Define variables for the base model, dataset, and fine-tuned model name:

%%capture %pip install -U bitsandbytes %pip install -U transformers %pip install -U peft %pip install -U accelerate %pip install -U datasets %pip install -U trl

Hugging Face Login

Login using your Hugging Face API token. (Replace with your actual token retrieval method).

from transformers import (

AutoModelForCausalLM,

AutoTokenizer,

BitsAndBytesConfig,

TrainingArguments,

pipeline,

logging,

)

from peft import (

LoraConfig,

PeftModel,

prepare_model_for_kbit_training,

get_peft_model,

)

import os, torch

from datasets import load_dataset

from trl import SFTTrainer

Loading the Dataset

Load a subset of the dataset for faster training:

base_model = "microsoft/phi-2" dataset_name = "hieunguyenminh/roleplay" new_model = "phi-2-role-play"

Loading Model and Tokenizer

Load the 4-bit quantized model for memory efficiency:

# ... (Method to securely retrieve Hugging Face API token) ... !huggingface-cli login --token $secret_hf

Adding Adapter Layers

Add LoRA layers for efficient fine-tuning:

dataset = load_dataset(dataset_name, split="train[0:1000]")

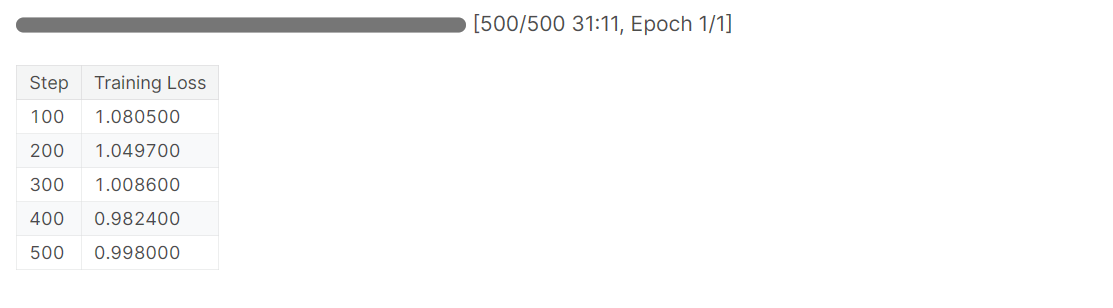

Training

Set up training arguments and the SFTTrainer:

bnb_config = BitsAndBytesConfig(

load_in_4bit= True,

bnb_4bit_quant_type= "nf4",

bnb_4bit_compute_dtype= torch.bfloat16,

bnb_4bit_use_double_quant= False,

)

model = AutoModelForCausalLM.from_pretrained(

base_model,

quantization_config=bnb_config,

device_map="auto",

trust_remote_code=True,

)

model.config.use_cache = False

model.config.pretraining_tp = 1

tokenizer = AutoTokenizer.from_pretrained(base_model, trust_remote_code=True)

tokenizer.pad_token = tokenizer.eos_token

Saving and Pushing the Model

Save and upload the fine-tuned model:

model = prepare_model_for_kbit_training(model)

peft_config = LoraConfig(

r=16,

lora_alpha=16,

lora_dropout=0.05,

bias="none",

task_type="CAUSAL_LM",

target_modules=[

'q_proj',

'k_proj',

'v_proj',

'dense',

'fc1',

'fc2',

]

)

model = get_peft_model(model, peft_config)

Image Source

Model Evaluation

Evaluate the fine-tuned model:

training_arguments = TrainingArguments(

output_dir="./results", # Replace with your desired output directory

num_train_epochs=1,

per_device_train_batch_size=2,

gradient_accumulation_steps=1,

optim="paged_adamw_32bit",

save_strategy="epoch",

logging_steps=100,

logging_strategy="steps",

learning_rate=2e-4,

fp16=False,

bf16=False,

group_by_length=True,

disable_tqdm=False,

report_to="none",

)

trainer = SFTTrainer(

model=model,

train_dataset=dataset,

peft_config=peft_config,

max_seq_length= 2048,

dataset_text_field="text",

tokenizer=tokenizer,

args=training_arguments,

packing= False,

)

trainer.train()

Conclusion

This tutorial provided a comprehensive overview of Microsoft's Phi-2, its performance, training, and fine-tuning. The ability to fine-tune this smaller model efficiently opens up possibilities for customized applications and deployments. Further exploration into building LLM applications using frameworks like LangChain is recommended.

The above is the detailed content of Getting Started with Phi-2. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1655

1655

14

14

1414

1414

52

52

1307

1307

25

25

1253

1253

29

29

1227

1227

24

24

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Getting Started With Meta Llama 3.2 - Analytics Vidhya

Apr 11, 2025 pm 12:04 PM

Meta's Llama 3.2: A Leap Forward in Multimodal and Mobile AI Meta recently unveiled Llama 3.2, a significant advancement in AI featuring powerful vision capabilities and lightweight text models optimized for mobile devices. Building on the success o

10 Generative AI Coding Extensions in VS Code You Must Explore

Apr 13, 2025 am 01:14 AM

10 Generative AI Coding Extensions in VS Code You Must Explore

Apr 13, 2025 am 01:14 AM

Hey there, Coding ninja! What coding-related tasks do you have planned for the day? Before you dive further into this blog, I want you to think about all your coding-related woes—better list those down. Done? – Let’

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

AV Bytes: Meta's Llama 3.2, Google's Gemini 1.5, and More

Apr 11, 2025 pm 12:01 PM

This week's AI landscape: A whirlwind of advancements, ethical considerations, and regulatory debates. Major players like OpenAI, Google, Meta, and Microsoft have unleashed a torrent of updates, from groundbreaking new models to crucial shifts in le

Selling AI Strategy To Employees: Shopify CEO's Manifesto

Apr 10, 2025 am 11:19 AM

Selling AI Strategy To Employees: Shopify CEO's Manifesto

Apr 10, 2025 am 11:19 AM

Shopify CEO Tobi Lütke's recent memo boldly declares AI proficiency a fundamental expectation for every employee, marking a significant cultural shift within the company. This isn't a fleeting trend; it's a new operational paradigm integrated into p

A Comprehensive Guide to Vision Language Models (VLMs)

Apr 12, 2025 am 11:58 AM

A Comprehensive Guide to Vision Language Models (VLMs)

Apr 12, 2025 am 11:58 AM

Introduction Imagine walking through an art gallery, surrounded by vivid paintings and sculptures. Now, what if you could ask each piece a question and get a meaningful answer? You might ask, “What story are you telling?

GPT-4o vs OpenAI o1: Is the New OpenAI Model Worth the Hype?

Apr 13, 2025 am 10:18 AM

GPT-4o vs OpenAI o1: Is the New OpenAI Model Worth the Hype?

Apr 13, 2025 am 10:18 AM

Introduction OpenAI has released its new model based on the much-anticipated “strawberry” architecture. This innovative model, known as o1, enhances reasoning capabilities, allowing it to think through problems mor

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

How to Add a Column in SQL? - Analytics Vidhya

Apr 17, 2025 am 11:43 AM

SQL's ALTER TABLE Statement: Dynamically Adding Columns to Your Database In data management, SQL's adaptability is crucial. Need to adjust your database structure on the fly? The ALTER TABLE statement is your solution. This guide details adding colu

Newest Annual Compilation Of The Best Prompt Engineering Techniques

Apr 10, 2025 am 11:22 AM

Newest Annual Compilation Of The Best Prompt Engineering Techniques

Apr 10, 2025 am 11:22 AM

For those of you who might be new to my column, I broadly explore the latest advances in AI across the board, including topics such as embodied AI, AI reasoning, high-tech breakthroughs in AI, prompt engineering, training of AI, fielding of AI, AI re