Building a realtime eye tracking experience with Supabase and WebGazer.js

TL;DR:

- Built with Supabase, React, WebGazer.js, Motion One, anime.js, Stable Audio

- Leverages Supabase Realtime Presence & Broadcast (no database tables used at all!)

- GitHub repo

- Website

- Demo video

Yet another Supabase Launch Week Hackathon and yet another experimental project, called Gaze into the Abyss. This ended up being both one of the simplest and one of the most complex projects at the same time. Luckily I've been enjoying Cursor quite a bit recently, so I had some helping hands to make it through! I also wanted to validate a question in my mind: is it possible to use just the realtime features from Supabase without any database tables? The (maybe somewhat obvious) answer is: yes, yes it is (love you, Realtime team ♥️). So let's dive a bit deeper into the implementation.

The idea

One day I was randomly thinking about Nietzsche's quote about the abyss, and that it would be nice (and cool) to actually visualize it somehow: you stare into a dark screen and something stares back at you. Nothing much more to it!

Building the project

Initially I had the idea of using Three.js for this project; however, I realized that would mean creating or finding some free assets for the 3D eye(s). I decided that was a bit too much, especially since I didn't have much time to work on the project itself, and went with 2D SVGs instead.

I also did not want it to be only visual: it would be a better experience with some audio too. So I had the idea that it would be awesome if participants could talk into a microphone and others would hear it as unintelligible whispers or winds passing by. This, however, turned out to be very challenging, and I decided to drop it completely, as I wasn't able to hook up WebAudio and WebRTC together well. I do have a leftover component in the codebase that listens to the local microphone and triggers "wind sounds" for the current user, if you want to take a look. Maybe something to add in the future?
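
The leftover component isn't reproduced here. As a rough idea of how a microphone-driven trigger can work, here is a hypothetical sketch (not the project's code) that computes the RMS level of one frame of audio and compares it to a threshold. It assumes the bytes come from a Web Audio `AnalyserNode`'s `getByteTimeDomainData()`, where 128 means silence; the function names and the 0.1 threshold are made up.

```typescript
// Hypothetical helpers; not taken from the project's codebase.

// Root-mean-square level of one frame of time-domain audio. Assumes bytes as
// written by AnalyserNode.getByteTimeDomainData(): 128 is silence, 0 and 255
// are the negative and positive extremes.
function rmsLevel(samples: Uint8Array): number {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (let i = 0; i < samples.length; i++) {
    const centered = (samples[i] - 128) / 128; // roughly [-1, 1)
    sumSquares += centered * centered;
  }
  return Math.sqrt(sumSquares / samples.length);
}

// True when the microphone is loud enough to start a "wind sound".
function shouldTriggerWind(samples: Uint8Array, threshold = 0.1): boolean {
  return rmsLevel(samples) >= threshold;
}
```

In the browser, the buffer would typically be a `Uint8Array` of length `analyser.fftSize`, refilled on each animation frame with `analyser.getByteTimeDomainData(buffer)`.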

Realtime rooms

Before working on any visual stuff, I wanted to test out the realtime setup I had in mind. Since the realtime feature has some limitations, I wanted it to work so that:

- There are max. 10 participants in one channel at a time
  - meaning you'd need to join a new channel if one is full

- You should only see other participants' eyes

For this I came up with a useEffect setup that recursively joins a realtime channel.
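
A minimal sketch of the recursive idea described in the next two paragraphs might look like this. To keep it runnable on its own, it is written against a hypothetical `join` adapter instead of the Supabase client; in the app, that adapter would presumably wrap `supabase.channel(name)`, a `presence` listener for the `join` event, `subscribe()`, and a count such as `Object.keys(channel.presenceState()).length`. The room naming scheme and the `maxRooms` guard are assumptions.

```typescript
const MAX_PARTICIPANTS = 10;

// Hypothetical view of a room once our own presence has been tracked.
interface JoinedRoom {
  name: string;
  participantCount: number; // assumed to include the current user
  leave: () => void; // e.g. unsubscribe from the channel
}

// Hypothetical adapter: joins `roomName` and calls `onJoined` from the presence join event.
type JoinFn = (roomName: string, onJoined: (room: JoinedRoom) => void) => void;

// Try room-0, room-1, and so on until one has space, leaving full rooms along the way.
function joinRoom(
  join: JoinFn,
  onReady: (room: JoinedRoom) => void,
  index = 0,
  maxRooms = 100,
): void {
  if (index >= maxRooms) {
    throw new Error("No room with free space found");
  }
  join(`room-${index}`, (room) => {
    if (room.participantCount > MAX_PARTICIPANTS) {
      // The room was already full before we arrived: back out and try the next one.
      room.leave();
      joinRoom(join, onReady, index + 1, maxRooms);
    } else {
      onReady(room);
    }
  });
}
```

Assuming the count includes the user's own presence, the check uses `>` rather than `>=`: a room with nine people still accepts a tenth.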

The joinRoom function lives inside the useEffect hook and is called when the room component is mounted. One caveat I found when working on this feature was that the currentPresences param does not contain any values in the join event, even though it is available. I'm not sure whether that's a bug in the implementation or working as intended. Hence the need for a manual room.presenceState() call to get the number of participants in the room whenever the user joins.

We check the participant count and either unsubscribe from the current room and try to join another one, or proceed with the current room. We do this in the join event because sync would be too late (it fires after join or leave events).

I tested this implementation by opening a whole lot of tabs in my browser and all seemed swell!

After that I wanted to debug the solution with mouse position updates, and quickly ran into issues with sending too many messages to the channel! The solution: throttle the calls.
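
A minimal leading-edge throttle along those lines could look like the sketch below. The name `createThrottle` and the injectable `now` clock (there only to make it testable) are assumptions, not the project's code.

```typescript
// Wrap `fn` so it runs at most once per `intervalMs`.
// Calls that arrive inside the window are dropped (leading-edge throttle).
function createThrottle<Args extends unknown[]>(
  fn: (...args: Args) => void,
  intervalMs: number,
  now: () => number = Date.now,
): (...args: Args) => void {
  let lastRun = -Infinity;
  return (...args: Args) => {
    const t = now();
    if (t - lastRun >= intervalMs) {
      lastRun = t;
      fn(...args);
    }
  };
}
```

Because dropped calls are simply discarded, the very last position in a burst can be lost; if that matters, a trailing call scheduled with `setTimeout` is a common addition.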

Cursor came up with a small throttle function creator, and I used it with the eye tracking broadcasts.
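
Wiring such a throttle to a channel could look roughly like this. The message shape `{ type: "broadcast", event, payload }` is what supabase-js's `channel.send()` expects for Broadcast; the `gaze` event name, the payload fields, the 50 ms interval and the `BroadcastTarget` interface are hypothetical, and a compact throttle is included so the snippet stands alone.

```typescript
// Hypothetical payload for one gaze update.
interface GazePayload {
  userId: string;
  x: number;
  y: number;
}

// The subset of a Supabase RealtimeChannel this sketch relies on.
interface BroadcastTarget {
  send(message: { type: "broadcast"; event: string; payload: GazePayload }): unknown;
}

// Minimal leading-edge throttle: at most one call per `intervalMs`.
function throttle<Args extends unknown[]>(
  fn: (...args: Args) => void,
  intervalMs: number,
  now: () => number = Date.now,
): (...args: Args) => void {
  let last = -Infinity;
  return (...args: Args) => {
    const t = now();
    if (t - last >= intervalMs) {
      last = t;
      fn(...args);
    }
  };
}

// Build a gaze sender that broadcasts at most once per `intervalMs`.
function createGazeBroadcaster(
  channel: BroadcastTarget,
  userId: string,
  intervalMs = 50,
  now: () => number = Date.now,
): (x: number, y: number) => void {
  return throttle(
    (x: number, y: number) => {
      channel.send({ type: "broadcast", event: "gaze", payload: { userId, x, y } });
    },
    intervalMs,
    now,
  );
}
```

On the receiving side, other clients would register `channel.on("broadcast", { event: "gaze" }, handler)` before calling `subscribe()`.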

This helped a lot! Also, in the initial versions I sent the eye tracking messages with Presence; however, Broadcast allows more messages per second, so I switched the implementation to that instead. This is especially crucial for eye tracking, since the camera is recording all the time.

Eye tracking

I had run into WebGazer.js a while back, when I first had the idea for this project. It's a very interesting project and works surprisingly well!

All of the eye tracking is handled by one function inside a useEffect hook.
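
WebGazer's documented entry point is `webgazer.setGazeListener((data, elapsedTime) => { ... }).begin()`, where `data` holds `x`/`y` viewport coordinates or is empty when no prediction is available. A sketch of a function built around that could look like this; the `GazeTracker` interface is a hypothetical typing of the calls used, and returning a cleanup function is an assumption about how it plugs into useEffect.

```typescript
interface GazePoint {
  x: number; // viewport pixels
  y: number;
}

// Hypothetical typing of the WebGazer calls used below.
interface GazeTracker {
  setGazeListener(
    listener: (data: GazePoint | null | undefined, elapsedTime: number) => void,
  ): GazeTracker;
  clearGazeListener(): unknown;
  begin(): unknown;
  end(): unknown;
}

// Start tracking and forward only real predictions. The returned cleanup
// function is meant to be returned from the useEffect callback.
function startGazeTracking(
  tracker: GazeTracker,
  onGaze: (point: GazePoint) => void,
): () => void {
  tracker
    .setGazeListener((data) => {
      // No prediction yet (e.g. no face detected): ignore this frame.
      if (data == null) return;
      onGaze({ x: data.x, y: data.y });
    })
    .begin();
  return () => {
    tracker.clearGazeListener();
    tracker.end();
  };
}
```

Inside a component this could be used as `useEffect(() => startGazeTracking(webgazer, handleGaze), [])`, with `webgazer` cast to the interface and `handleGaze` being whatever consumes the points.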

Getting where the user is looking is simple and works much like getting mouse positions on the screen. However, I also wanted to add blink detection as a (cool) feature, which required jumping through some hoops.
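
Since a gaze prediction is just a viewport coordinate, one way to make it usable across screens of different sizes (an assumption, not necessarily what the project does) is to normalize it relative to the viewport center and then scale it into a pupil offset for the SVG eye. `maxOffset` is a hypothetical value in SVG units.

```typescript
interface Point {
  x: number;
  y: number;
}

function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Map a viewport pixel position to [-1, 1] on each axis, with (0, 0) at the center.
function normalizeGaze(point: Point, width: number, height: number): Point {
  return {
    x: clamp((point.x / width) * 2 - 1, -1, 1),
    y: clamp((point.y / height) * 2 - 1, -1, 1),
  };
}

// Scale a normalized gaze into a pupil offset that stays within a circle of radius maxOffset.
function pupilOffset(normalized: Point, maxOffset: number): Point {
  const length = Math.sqrt(normalized.x * normalized.x + normalized.y * normalized.y);
  const scale = length > 1 ? 1 / length : 1;
  return { x: normalized.x * scale * maxOffset, y: normalized.y * scale * maxOffset };
}
```

Normalized values are also a convenient thing to broadcast, since every client can map them onto its own eye size.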

If you google WebGazer and blink detection, you can see some remnants of an initial implementation; there is even commented-out code in the source. Unfortunately, this capability does not exist in the library, so you'll need to implement it manually.

After a lot of trial and error, Cursor and I were able to come up with a solution that calculates pixel and brightness levels from the eye patch data to determine when the user is blinking. It also has some dynamic lighting adjustments, as I noticed that (at least for me) the webcam doesn't always recognize when you are blinking, depending on your lighting. For me it worked worse the brighter my picture/room was, and better in darker lighting (go figure).
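
The post doesn't spell out the exact heuristic, so the following is only a hypothetical sketch of the general idea: average the brightness of an eye patch, keep a slowly adapting baseline that follows changes in room lighting, and flag a blink when a frame deviates too far from that baseline. It assumes RGBA pixel data such as `ImageData.data`; the luma weights are the standard Rec. 601 ones, while the smoothing factor and 12% threshold are made-up starting values. Whether a closed eye reads brighter or darker depends on the person and lighting, so it compares the absolute deviation.

```typescript
// Average perceived brightness (0-255) of RGBA pixels, e.g. ImageData.data.
function averageBrightness(rgba: Uint8ClampedArray): number {
  const pixelCount = Math.floor(rgba.length / 4);
  if (pixelCount === 0) return 0;
  let total = 0;
  for (let i = 0; i < pixelCount * 4; i += 4) {
    // Rec. 601 luma weights for R, G, B; alpha is ignored.
    total += 0.299 * rgba[i] + 0.587 * rgba[i + 1] + 0.114 * rgba[i + 2];
  }
  return total / pixelCount;
}

// Hypothetical blink detector with an adaptive brightness baseline.
class BlinkDetector {
  private baseline: number | null = null;
  private readonly smoothing: number; // how quickly the baseline follows lighting changes
  private readonly threshold: number; // relative deviation that counts as a blink

  constructor(smoothing = 0.1, threshold = 0.12) {
    this.smoothing = smoothing;
    this.threshold = threshold;
  }

  // Feed one eye-patch frame; returns true if this frame looks like a blink.
  update(rgba: Uint8ClampedArray): boolean {
    const brightness = averageBrightness(rgba);
    if (this.baseline === null) {
      this.baseline = brightness;
      return false;
    }
    const deviation = Math.abs(brightness - this.baseline) / Math.max(this.baseline, 1);
    const blinking = deviation > this.threshold;
    if (!blinking) {
      // Only adapt while the eye looks open, so a blink doesn't drag the baseline.
      this.baseline += this.smoothing * (brightness - this.baseline);
    }
    return blinking;
  }
}
```

A real implementation would likely also debounce the result so one blink isn't reported over several consecutive frames.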

While debugging the eye tracking (WebGazer has a very nice .setPredictionPoints call that displays a red dot on the screen to visualize where you are looking), I noticed that the tracking is not very accurate unless you calibrate it, which is why the project asks you to calibrate before joining any rooms.
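
WebGazer trains its model from user interactions such as clicks, so a common calibration pattern is to show a grid of points and have the user click each one several times while looking at it. A hypothetical sketch of that bookkeeping, with a 3 by 3 grid and five clicks per point as assumed defaults:

```typescript
interface CalibrationPoint {
  id: number;
  xPercent: number; // horizontal position as % of viewport width
  yPercent: number; // vertical position as % of viewport height
  clicks: number;
}

// Build a rows x cols grid of points, inset from the screen edges.
function createCalibrationGrid(rows = 3, cols = 3, insetPercent = 10): CalibrationPoint[] {
  const points: CalibrationPoint[] = [];
  const span = 100 - 2 * insetPercent;
  for (let r = 0; r < rows; r++) {
    for (let c = 0; c < cols; c++) {
      points.push({
        id: r * cols + c,
        xPercent: insetPercent + (cols === 1 ? span / 2 : (span * c) / (cols - 1)),
        yPercent: insetPercent + (rows === 1 ? span / 2 : (span * r) / (rows - 1)),
        clicks: 0,
      });
    }
  }
  return points;
}

// Record one click on point `id`; returns true once every point has enough clicks.
function registerClick(points: CalibrationPoint[], id: number, requiredClicks = 5): boolean {
  for (const point of points) {
    if (point.id === id && point.clicks < requiredClicks) point.clicks += 1;
  }
  return points.every((p) => p.clicks >= requiredClicks);
}
```

Each point could be rendered with `left: xPercent%` and `top: yPercent%`, and joining a room gated on `registerClick` returning `true`.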

It was a very cool experience to see this in action! I applied the same approach to the surrounding lines and instructed Cursor to "collapse" them towards the center, which it did pretty much in one go!
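
The post doesn't spell out that approach, so the following is only one hypothetical way to express "collapsing" lines towards a center: linear interpolation driven by a progress value `t` from 0 (open) to 1 (fully collapsed). An animation library such as Motion One or anime.js (both used in the project) could then tween `t`. The function names and point format are illustrative.

```typescript
interface Point {
  x: number;
  y: number;
}

// Move `point` towards `center`; t = 0 leaves it in place, t = 1 puts it on the center.
function collapseTowards(point: Point, center: Point, t: number): Point {
  const k = Math.min(1, Math.max(0, t)); // clamp progress to [0, 1]
  return {
    x: point.x + (center.x - point.x) * k,
    y: point.y + (center.y - point.y) * k,
  };
}

// Collapse every point of a line at the same progress.
function collapseLine(points: Point[], center: Point, t: number): Point[] {
  return points.map((p) => collapseTowards(p, center, t));
}

// Serialize for an SVG <polyline points="..."> attribute.
function toSvgPoints(points: Point[]): string {
  return points.map((p) => `${p.x},${p.y}`).join(" ");
}
```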

The eyes would then be rendered inside a simple CSS grid with cells aligned so that a full room looks like a big eye.
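
As an illustration only, here is a hypothetical almond-shaped arrangement of nine slots (enough for the other nine participants of a 10-person room) on a 5-column, 3-row CSS grid, filled from the center outwards. The slot layout is invented for this sketch, and sorting by participant id is an assumption meant to keep each client's arrangement stable between presence updates.

```typescript
interface Slot {
  gridRow: number; // 1-based CSS grid line
  gridColumn: number;
}

// Hypothetical almond shape on a 5-column x 3-row grid,
// ordered so the center fills first, then the corners, then the lids.
const EYE_SLOTS: Slot[] = [
  { gridRow: 2, gridColumn: 3 }, // center ("pupil")
  { gridRow: 2, gridColumn: 1 }, // left corner
  { gridRow: 2, gridColumn: 5 }, // right corner
  { gridRow: 1, gridColumn: 3 },
  { gridRow: 3, gridColumn: 3 },
  { gridRow: 1, gridColumn: 2 },
  { gridRow: 1, gridColumn: 4 },
  { gridRow: 3, gridColumn: 2 },
  { gridRow: 3, gridColumn: 4 },
];

interface Placement extends Slot {
  participantId: string;
}

// Assign other participants (already excluding the current user) to slots.
function placeParticipants(participantIds: string[]): Placement[] {
  const sorted = participantIds.slice().sort();
  return sorted.slice(0, EYE_SLOTS.length).map((participantId, i) => ({
    participantId,
    gridRow: EYE_SLOTS[i].gridRow,
    gridColumn: EYE_SLOTS[i].gridColumn,
  }));
}
```

In React, each eye could then get `style={{ gridRow: p.gridRow, gridColumn: p.gridColumn }}` inside a container with `grid-template-columns: repeat(5, 1fr)` and `grid-template-rows: repeat(3, 1fr)`.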

Final touches

Then slap on a nice intro screen and some background music, and the project is good to go!

Audio always improves the experience when you are working on things like this, so I used Stable Audio to generate background music for when the user "enters the abyss." The prompt I used for the music was the following:

Ambient, creepy, background music, whispering sounds, winds, slow tempo, eerie, abyss

I also thought that a plain black screen is a bit boring, so I added some animated SVG filter effects to the background. Additionally, I added a dark, blurred circle in the center of the screen for a nice fading effect. I probably could've done this with SVG filters, but I didn't want to spend too much time on it. Then, to add some more movement, I made the background rotate on its axis. Animating SVG filters directly can be a bit wonky, so I decided to do it this way instead.
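
One way to get an endless rotation without animating the SVG filters themselves is the Web Animations API's `element.animate()`, sketched below; the project may well have used Motion One or anime.js for this instead. The 120-second period is an assumption, and `Animatable` is a minimal hypothetical interface mirroring the part of `Element.animate()` used here, so the sketch can run without a browser; in the app you would pass the background element.

```typescript
// Minimal slice of Element.animate() used here.
interface Animatable {
  animate(
    keyframes: Array<Record<string, string>>,
    options: { duration: number; iterations: number; easing: string },
  ): unknown;
}

// Start an endless, constant-speed rotation of the background layer.
function rotateForever(element: Animatable, periodMs = 120000): unknown {
  return element.animate(
    [{ transform: "rotate(0deg)" }, { transform: "rotate(360deg)" }],
    { duration: periodMs, iterations: Infinity, easing: "linear" },
  );
}
```

A linear easing keeps the speed constant across the loop boundary, so the 360deg to 0deg restart is invisible.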

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 |

|

The above is the detailed content of Building a realtime eye tracking experience with Supabase and WebGazer.js. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1663

1663

14

14

1419

1419

52

52

1313

1313

25

25

1263

1263

29

29

1236

1236

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

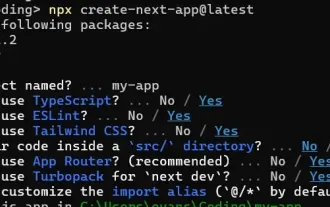

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing