How <canvas> Saved the Day - Handling Large Images in the Browser


A client uploaded 28 images, each around 5800 x 9500 pixels and 28 MB in size. When attempting to display these images in the browser, the entire tab froze - even refusing to close - for a solid 10 minutes. And this was on a high-end machine with a Ryzen 9 CPU and an RTX 4080 GPU, not a tiny laptop.

Here’s The Situation

In our CMS (Grace Web), when an admin uploads a file - be it a picture or a video - we display it immediately after the upload finishes. It's great for UX: it confirms the upload was successful and that they uploaded what they intended to, and it makes the UI feel responsive.

But with these massive images, the browser could barely display them and froze up.

It's important to note that the issue wasn't with the upload itself but with displaying these large images. Rendering 28 huge images, whether simultaneously or one by one, was just too much. Even on a blank page, it's just... nope.

Why Did This Happen (Deep Dive)?

Anytime you display a picture in an <img> tag, the browser goes through an entire process that employs the CPU, RAM, GPU, and sometimes the drive, and it may cycle through this process multiple times during image loading and layout recalculations before the picture is displayed. This involves calculating pixels, transferring data between hardware components, running interpolation algorithms, etc. For a browser, it’s a significant process.
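To get a feel for the scale, here is a rough back-of-the-envelope estimate. It assumes each decoded image is held as an uncompressed bitmap at 4 bytes per pixel (RGBA). That is a common layout but only an assumption here, and real memory use varies by browser. The helper name decodedBytes is hypothetical.

```javascript
// Rough estimate of the memory needed for one decoded image.
// Assumption: 4 bytes per pixel (RGBA); actual browsers may differ.
function decodedBytes(width, height, bytesPerPixel = 4) {
  return width * height * bytesPerPixel;
}

const perImage = decodedBytes(5800, 9500); // one of the client's uploads
const allImages = perImage * 28;           // the whole batch

console.log((perImage / 1024 ** 2).toFixed(0) + ' MiB per image');
console.log((allImages / 1024 ** 3).toFixed(2) + ' GiB for 28 images');
```

Under that assumption, a 28 MB file expands to roughly 210 MiB once decoded, and the batch of 28 to well over 5 GiB, which goes some way toward explaining the frozen tab.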

The Brainstormed Solutions

We considered several options:

- Resize Immediately After Upload and Display Thumbnail
  - Issue: Resizing could take too long and isn't guaranteed to be instantaneous, especially if the resizing queue isn't empty, which makes the system feel unresponsive.

- Check File Sizes and Display Only Their Names for Large Files
  - Issue: This would detract from the user experience, especially for those who require greater control and checks of any media material (incidentally, those are often the same ones with large files).

Neither of these solutions felt usable. We didn't want to limit our admins' ability to upload whatever they needed, nor did we want to compromise on the user experience.

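Lightbulb! Using <canvas>

I recalled that the <canvas> element lets you draw an image at whatever size you choose, so the page only has to display the scaled-down result.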

Testing the Theory

We decided to replace the <img> elements with <canvas> elements:

    const canvas = document.createElement('canvas');
    const ctx = canvas.getContext('2d');
    const img = new Image();

    img.onload = function() {
      // Resize canvas to desired size (for preview)
      canvas.height = 80;
      canvas.width = Math.round(80 * (img.width / img.height)); // Adjust scaling as needed

      // Draw the image onto the canvas, resizing it in the process
      ctx.drawImage(img, 0, 0, canvas.width, canvas.height);

      file_div.innerHTML = ''; // Prepared <div> in UI to display the thumbnail
      file_div.appendChild(canvas);
    };

    // Set src only after onload is attached so the load event cannot be missed
    // img.src = URL.createObjectURL(file); // Use this if you're working with uploaded files
    img.src = filesroot + data.file_path; // Our existing file path
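The sizing step can also be pulled into a small pure function, which makes it easy to check without a browser. This is only a sketch: previewSize is a hypothetical helper name, and the default height of 80 simply mirrors the value used above. Canvas dimensions are integers, so the width is rounded explicitly rather than left as a fraction.

```javascript
// Compute preview dimensions that preserve the source aspect ratio.
// previewSize is a hypothetical helper; 80 matches the preview height above.
function previewSize(srcWidth, srcHeight, targetHeight = 80) {
  if (srcWidth <= 0 || srcHeight <= 0) {
    throw new RangeError('Image dimensions must be positive');
  }
  // Round to a whole pixel and never drop below 1 px for very tall images.
  const width = Math.max(1, Math.round(targetHeight * (srcWidth / srcHeight)));
  return { width, height: targetHeight };
}

console.log(previewSize(5800, 9500));   // { width: 49, height: 80 }
console.log(previewSize(28000, 17000)); // { width: 132, height: 80 }
```

Inside the onload handler, the result could be assigned to canvas.width and canvas.height before calling drawImage.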

The Results

The improvement was immediate and dramatic! Not only did the browser handle the 28 large images without any hiccups, but we also pushed it further by loading 120 MB images measuring 28,000 x 17,000 pixels, and it still worked as if it was a tiny icon.

Where Is the Difference?

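Using the <img> element and using the <canvas> element differ in how many pixels the browser has to put on screen:

- An <img> still attempts to render 28,000 * 17,000 = 476,000,000 pixels.
- This <canvas> draws only about 80 * 80 = 6,400 pixels.

By resizing the image within the <canvas>, we drastically reduce the number of pixels the browser has to process.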
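The gap between those two pixel counts is easier to appreciate as a ratio. The quick calculation below uses the article's own numbers. The second figure assumes the preview keeps the source aspect ratio at a height of 80, which gives a 132 px wide canvas rather than a square one.

```javascript
// Pixel counts from the comparison above.
const fullPixels = 28000 * 17000; // 476,000,000
const squarePreview = 80 * 80;    // 6,400
const ratioSquare = fullPixels / squarePreview;

// Assumption: an aspect-preserving preview at height 80 is 132 px wide.
const aspectPreview = 132 * 80;   // 10,560
const ratioAspect = Math.round(fullPixels / aspectPreview);

console.log(ratioSquare); // 74375
console.log(ratioAspect); // 45076
```

Either way, the preview covers tens of thousands of times fewer pixels than the full-size image.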

Next Steps

Given this success, we're now considering replacing <img> with <canvas> more widely.

We'll be conducting testing and benchmarking to measure any performance gains. I'll be sure to share our findings in a future article.

Important Caveats

While using <canvas> worked well here, it isn't a drop-in replacement for <img> everywhere. The <img> tag has benefits of its own:

- Accessibility: <img> elements are readable by screen readers, aiding visually impaired users.
- SEO: Search engines index images from <img> tags, which can improve your site's visibility. (In the same way, AI-training scrapers "index" the images, too. Well, what can you do...)
- <img> with srcset can be used to automatically detect pixel density and show bigger or smaller pictures for a perfect fit; <canvas> can’t. I wrote an article on how to build your .
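If a <canvas> preview does end up somewhere accessibility matters, one common mitigation is to give it an accessible name. The sketch below sets the standard ARIA attributes role="img" and aria-label. The helper name labelCanvas and the description text are hypothetical, and this does nothing for the SEO or pixel-density points above.

```javascript
// Give a canvas an accessible name so assistive technology can announce it.
// labelCanvas is a hypothetical helper, not part of any library.
function labelCanvas(canvas, description) {
  canvas.setAttribute('role', 'img');
  canvas.setAttribute('aria-label', description);
  return canvas;
}

// Browser-only usage; skipped when no DOM is available.
if (typeof document !== 'undefined') {
  const preview = labelCanvas(
    document.createElement('canvas'),
    'Preview of the uploaded image' // hypothetical description
  );
  console.log(preview.getAttribute('aria-label'));
}
```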

For public websites, it's best to properly resize images on the server side before serving them to users. If you're interested in how to automate image resizing and optimization, I wrote an article on that topic you might find useful.

Conclusion

I love optimization! Even though local hardware gets more powerful every day, optimizing - even when "it's good, but can be better" - can prevent issues like this from the beginning.

This approach is not standard. And I don’t care for standards very much. They are often treated as hard rules, but they are just guidelines. This solution fits. We do not have clients with ancient devices without solid <canvas> support.

Do you have some similar experiences? How did you handle it? Feel free to share your experiences or ask questions in the comments below!

The above is the detailed content of How Saved the Day - Handling Large Images in the Browser. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1664

1664

14

14

1421

1421

52

52

1315

1315

25

25

1266

1266

29

29

1239

1239

24

24

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

Demystifying JavaScript: What It Does and Why It Matters

Apr 09, 2025 am 12:07 AM

JavaScript is the cornerstone of modern web development, and its main functions include event-driven programming, dynamic content generation and asynchronous programming. 1) Event-driven programming allows web pages to change dynamically according to user operations. 2) Dynamic content generation allows page content to be adjusted according to conditions. 3) Asynchronous programming ensures that the user interface is not blocked. JavaScript is widely used in web interaction, single-page application and server-side development, greatly improving the flexibility of user experience and cross-platform development.

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The Evolution of JavaScript: Current Trends and Future Prospects

Apr 10, 2025 am 09:33 AM

The latest trends in JavaScript include the rise of TypeScript, the popularity of modern frameworks and libraries, and the application of WebAssembly. Future prospects cover more powerful type systems, the development of server-side JavaScript, the expansion of artificial intelligence and machine learning, and the potential of IoT and edge computing.

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

JavaScript Engines: Comparing Implementations

Apr 13, 2025 am 12:05 AM

Different JavaScript engines have different effects when parsing and executing JavaScript code, because the implementation principles and optimization strategies of each engine differ. 1. Lexical analysis: convert source code into lexical unit. 2. Grammar analysis: Generate an abstract syntax tree. 3. Optimization and compilation: Generate machine code through the JIT compiler. 4. Execute: Run the machine code. V8 engine optimizes through instant compilation and hidden class, SpiderMonkey uses a type inference system, resulting in different performance performance on the same code.

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python vs. JavaScript: The Learning Curve and Ease of Use

Apr 16, 2025 am 12:12 AM

Python is more suitable for beginners, with a smooth learning curve and concise syntax; JavaScript is suitable for front-end development, with a steep learning curve and flexible syntax. 1. Python syntax is intuitive and suitable for data science and back-end development. 2. JavaScript is flexible and widely used in front-end and server-side programming.

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript: Exploring the Versatility of a Web Language

Apr 11, 2025 am 12:01 AM

JavaScript is the core language of modern web development and is widely used for its diversity and flexibility. 1) Front-end development: build dynamic web pages and single-page applications through DOM operations and modern frameworks (such as React, Vue.js, Angular). 2) Server-side development: Node.js uses a non-blocking I/O model to handle high concurrency and real-time applications. 3) Mobile and desktop application development: cross-platform development is realized through ReactNative and Electron to improve development efficiency.

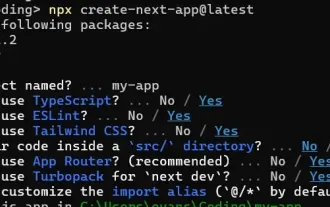

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

How to Build a Multi-Tenant SaaS Application with Next.js (Frontend Integration)

Apr 11, 2025 am 08:22 AM

This article demonstrates frontend integration with a backend secured by Permit, building a functional EdTech SaaS application using Next.js. The frontend fetches user permissions to control UI visibility and ensures API requests adhere to role-base

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

From C/C to JavaScript: How It All Works

Apr 14, 2025 am 12:05 AM

The shift from C/C to JavaScript requires adapting to dynamic typing, garbage collection and asynchronous programming. 1) C/C is a statically typed language that requires manual memory management, while JavaScript is dynamically typed and garbage collection is automatically processed. 2) C/C needs to be compiled into machine code, while JavaScript is an interpreted language. 3) JavaScript introduces concepts such as closures, prototype chains and Promise, which enhances flexibility and asynchronous programming capabilities.

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

Building a Multi-Tenant SaaS Application with Next.js (Backend Integration)

Apr 11, 2025 am 08:23 AM

I built a functional multi-tenant SaaS application (an EdTech app) with your everyday tech tool and you can do the same. First, what’s a multi-tenant SaaS application? Multi-tenant SaaS applications let you serve multiple customers from a sing